Large Language Models (LLMs) are advanced artificial intelligence systems that process and generate human language. These models, such as GPT-4, are built on deep learning architectures and trained on vast datasets, enabling them to perform tasks such as text completion, translation, and summarization. Their ability to generate coherent and contextually relevant text has made them invaluable in industries such as healthcare, finance, customer service, and entertainment.

Importance of Adversarial Robustness

Adversarial robustness refers to the resilience of LLMs against malicious inputs designed to deceive or manipulate them. Such inputs can cause the model to produce incorrect or harmful outputs, posing significant risks in applications where accuracy and reliability are critical.

Examples of malicious inputs include subtly altered text meant to mislead the model into generating inappropriate responses or executing unintended actions. Ensuring adversarial robustness is crucial to maintaining the integrity and safety of LLM-driven systems, protecting both users and organizations from potential threats.

Understanding Adversarial Attacks

Types of Adversarial Attacks

Adversarial attacks on LLMs can be classified into three main types, based on how much the attacker knows about the model:

- White-box attacks: These attacks occur when the attacker has full access to the model, including its architecture, parameters, and training data. This knowledge allows the attacker to craft highly effective adversarial inputs by exploiting specific vulnerabilities within the model.

- Black-box attacks: In these attacks, the attacker has no direct access to the model and must rely on input-output pairs to infer the model’s behavior. By systematically querying the model and analyzing its responses, the attacker can generate adversarial inputs that cause the model to produce incorrect or harmful outputs.

- Gray-box attacks: These attacks fall between white-box and black-box attacks. In gray-box attacks, the attacker has limited knowledge of the model, such as its architecture, but not its parameters. This partial information enables the attacker to craft adversarial inputs with moderate effectiveness.
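To make the black-box setting concrete, here is a minimal sketch in which the attacker can only call a scoring function and observe its output. Everything in it is an assumption made for illustration: `toy_model` is a hypothetical keyword-based stand-in for a deployed model, and `SUBSTITUTIONS` is a made-up table of replacements the attacker is willing to try.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for a deployed model: scores text by the share of
# blocklisted words. A black-box attacker only sees this input-output behavior.
BLOCKLIST = {"attack", "exploit"}

def toy_model(text: str) -> float:
    words = text.lower().split()
    if not words:
        return 0.0
    return sum(w in BLOCKLIST for w in words) / len(words)

# Illustrative candidate replacements (assumed, not from any real toolkit).
SUBSTITUTIONS: Dict[str, List[str]] = {
    "attack": ["atttack", "assault"],
    "exploit": ["expl0it", "abuse"],
}

def black_box_attack(
    text: str, query: Callable[[str], float], threshold: float = 0.0
) -> Tuple[str, float, int]:
    """Greedy word-level search that uses only queries to the model."""
    words = text.split()
    best_score = query(text)
    queries = 1
    for i, word in enumerate(words):
        if best_score <= threshold:
            break  # the model no longer flags the text
        for candidate in SUBSTITUTIONS.get(word.lower(), []):
            trial = words[:i] + [candidate] + words[i + 1:]
            score = query(" ".join(trial))
            queries += 1
            if score < best_score:
                # Keep the first substitution that lowers the score.
                words, best_score = trial, score
                break
    return " ".join(words), best_score, queries

adversarial, score, n_queries = black_box_attack(
    "how to exploit and attack a server", toy_model
)
```

The attacker never inspects `BLOCKLIST`; it learns which edits work purely from how the score changes. Rate limiting and query monitoring target exactly this kind of repeated probing.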

Mechanisms of Adversarial Inputs

Adversarial inputs are crafted using various techniques to deceive LLMs:

- Perturbation-based attacks: These involve making minor, often imperceptible modifications to the input data, such as altering a few words or characters in a text, which can lead to considerable shifts in the model’s output.

- Input modification techniques: These include more sophisticated strategies, such as paraphrasing, introducing grammatical errors, or using synonyms and homophones to manipulate the model’s understanding and response.
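The two families above can be sketched with a few small text transformations. This is an illustrative sketch only: the `SYNONYMS` and `HOMOGLYPHS` tables are tiny hypothetical examples, and real attacks typically choose edits by querying the target model rather than applying them blindly.

```python
import random

# Tiny illustrative lookup tables (assumptions, not a real attack lexicon).
SYNONYMS = {"good": "decent", "bad": "poor", "movie": "film"}
# Latin letters mapped to visually similar Cyrillic code points.
HOMOGLYPHS = {"a": "\u0430", "e": "\u0435", "o": "\u043e"}

def swap_adjacent_chars(word: str, rng: random.Random) -> str:
    """Swap two neighboring interior characters, keeping first and last fixed."""
    if len(word) < 4:
        return word
    i = rng.randrange(1, len(word) - 2)
    chars = list(word)
    chars[i], chars[i + 1] = chars[i + 1], chars[i]
    return "".join(chars)

def perturb_typos(text: str, rate: float = 0.3, seed: int = 0) -> str:
    """Perturbation-based: introduce typos into a fraction of the words."""
    rng = random.Random(seed)
    return " ".join(
        swap_adjacent_chars(w, rng) if rng.random() < rate else w
        for w in text.split()
    )

def perturb_synonyms(text: str) -> str:
    """Input modification: replace known words with synonyms."""
    return " ".join(SYNONYMS.get(w.lower(), w) for w in text.split())

def perturb_homoglyphs(text: str) -> str:
    """Perturbation-based: swap characters for look-alike code points."""
    return "".join(HOMOGLYPHS.get(c, c) for c in text)

original = "a good movie about adversarial robustness"
variants = [
    perturb_typos(original, rate=1.0),
    perturb_synonyms(original),
    perturb_homoglyphs(original),
]
```

Each variant reads almost identically to a human, yet tokenizes differently, which is what makes such inputs useful for probing a model.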

Vulnerabilities in LLMs

Inherent Weaknesses in LLMs

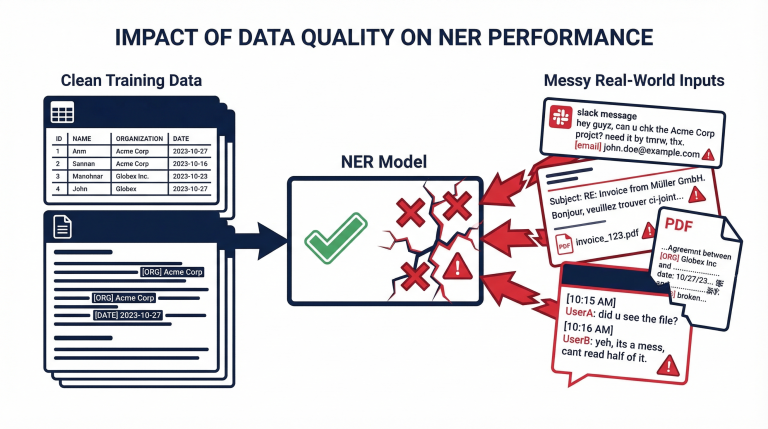

Large Language Models (LLMs) have inherent vulnerabilities due to their design and training processes. One primary weakness is their sensitivity to input modifications. Small perturbations or changes in the input data can cause disproportionate shifts in the model’s output, leading to potential exploitation by adversaries. Another significant issue is overfitting, where the model performs remarkably well on training data but struggles with generalization to unseen data. This over-reliance on specific patterns within the training set makes LLMs susceptible to carefully crafted adversarial inputs.

Common Exploits and Their Mechanisms

Adversaries often exploit these weaknesses through token manipulation and contextual vulnerabilities. Token manipulation involves altering individual words or characters in the input text to mislead the model. For instance, slight spelling errors or synonyms can create adversarial examples that bypass the model’s defenses. Contextual vulnerabilities arise when the model’s understanding of the input context is exploited, leading to incorrect or harmful outputs. These exploits can be leveraged to manipulate the model’s behavior, compromising reliability and security.

By understanding these vulnerabilities, developers can better anticipate and mitigate potential threats, enhancing the robustness of LLMs against adversarial attacks.

Defense Mechanisms Against Adversarial Inputs

Adversarial Training

Adversarial training is a defense mechanism in which models are trained on adversarial examples alongside regular data. Adversarial examples are generated during training and incorporated into the learning process, so the model learns to recognize and handle malicious inputs. While adversarial training significantly enhances the model’s resilience to known attacks, its limitations include increased computational costs and the potential to overfit to specific types of adversarial inputs.
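As a rough illustration, the sketch below shows adversarial data augmentation, a simplified, static variant of adversarial training: perturbed copies of each training example are added with the original label. Full adversarial training usually regenerates the adversarial examples against the current model at each step, which this sketch does not do. The `leetspeak` perturbation and the sample data are hypothetical.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, int]  # (text, label)

def leetspeak(text: str) -> str:
    """Hypothetical perturbation: replace some letters with digits."""
    return text.translate(str.maketrans({"a": "4", "e": "3", "o": "0"}))

def adversarial_augment(
    dataset: List[Example],
    perturbations: List[Callable[[str], str]],
) -> List[Example]:
    """Return the dataset plus perturbed copies that keep the original label."""
    augmented = list(dataset)
    seen = {text for text, _ in dataset}
    for text, label in dataset:
        for perturb in perturbations:
            adv_text = perturb(text)
            if adv_text not in seen:  # skip no-op or duplicate perturbations
                seen.add(adv_text)
                augmented.append((adv_text, label))
    return augmented

train = [("free money now", 1), ("see you at noon", 0)]
augmented = adversarial_augment(train, [leetspeak])
# `augmented` would then be passed to the usual training routine.
```

Because the label is preserved, the model is pushed to give the same answer for the clean and perturbed versions, which is the core idea behind the technique.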

Robust Optimization Techniques

Robust optimization techniques aim to improve the model’s resistance to adversarial attacks through various strategies. Regularization methods, such as L2 regularization, add a penalty to the model’s complexity, encouraging simpler and more generalizable models that are less susceptible to adversarial perturbations. Gradient masking involves hiding the gradient information used to craft adversarial examples, making it harder for attackers to generate effective perturbations; on its own, however, it is generally considered a weak defense, because attackers can often work around it with black-box or transfer-based attacks. These techniques can enhance robustness but may also introduce challenges, such as reduced model accuracy and the need for fine-tuning to balance robustness and performance.
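For readers who want to see what the L2 penalty looks like in practice, here is a minimal framework-free sketch. The function names and the weighting parameter `lam` are assumptions chosen for illustration; deep learning libraries typically expose the same idea as a "weight decay" option.

```python
from typing import List

def l2_penalty(weights: List[float], lam: float) -> float:
    """L2 penalty: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)

def regularized_loss(data_loss: float, weights: List[float], lam: float) -> float:
    """Total objective = task loss + L2 penalty on the weights."""
    return data_loss + l2_penalty(weights, lam)

def sgd_step(
    weights: List[float], grads: List[float], lr: float, lam: float
) -> List[float]:
    """One gradient step on the regularized loss.

    The gradient of lam * w**2 with respect to w is 2 * lam * w, so every
    weight is pulled toward zero in addition to following the task gradient.
    """
    return [w - lr * (g + 2 * lam * w) for w, g in zip(weights, grads)]

loss = regularized_loss(data_loss=1.0, weights=[3.0, 4.0], lam=0.1)
new_weights = sgd_step(weights=[1.0], grads=[0.0], lr=0.1, lam=0.5)
```

A larger `lam` shrinks the weights more aggressively, which tends to smooth the model's response to small input changes at the cost of some fit to the training data.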

Input Sanitization

Input sanitization involves preprocessing inputs to remove potential adversarial perturbations before they reach the model. This can include techniques such as noise reduction, input normalization, and feature squeezing, which reduce the complexity of the input data. Real-time input filtering can dynamically assess and cleanse incoming data to prevent malicious inputs from affecting the model. These methods help protect the model from various adversarial attacks, though they may not be foolproof against sophisticated adversarial techniques.
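A minimal sketch of input normalization for text, using only the Python standard library, might look like the following. The `sanitize` function and its `max_len` default are assumptions for illustration, not a complete or recommended filter.

```python
import re
import unicodedata

def sanitize(text: str, max_len: int = 2000) -> str:
    """Normalize text before it reaches the model."""
    # NFKC folds compatibility forms (e.g. full-width letters) into their
    # canonical equivalents.
    text = unicodedata.normalize("NFKC", text)
    # Drop control and format characters (Unicode category C*), which
    # includes zero-width characters; keep newlines and tabs for now.
    # Caution: this also strips zero-width joiners that some scripts and
    # emoji sequences legitimately use.
    text = "".join(
        ch for ch in text
        if ch in "\n\t" or unicodedata.category(ch)[0] != "C"
    )
    # Collapse all whitespace runs into single spaces.
    text = re.sub(r"\s+", " ", text).strip()
    # Cap the length to bound downstream cost.
    return text[:max_len]

cleaned = sanitize("ｉｇｎｏｒｅ\u200b  previous\tinstructions")
```

NFKC does not map look-alike characters from other scripts (for example, Cyrillic letters that resemble Latin ones) back to Latin, so homoglyph attacks need a separate confusables check. This is one reason sanitization alone is not foolproof.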

Detection and Mitigation Tools

Detection and mitigation tools are crucial in identifying and responding to adversarial attacks. Anomaly detection systems use statistical and machine learning methods to identify inputs that deviate significantly from the norm, flagging them as potential adversarial threats. Dedicated detector models can also be trained specifically to recognize adversarial inputs, enabling real-time defense. These tools strengthen the overall security posture of LLMs, though they require continuous updates and monitoring to remain effective against evolving adversarial techniques.
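The statistical side of anomaly detection can be illustrated with a simple z-score check. This sketch assumes a single hand-picked feature (the share of symbol characters in the input) and a hypothetical `ZScoreDetector` class; production detectors generally combine many signals.

```python
from statistics import mean, stdev
from typing import List

def symbol_ratio(text: str) -> float:
    """Fraction of characters that are neither alphanumeric nor whitespace."""
    if not text:
        return 0.0
    return sum(not (c.isalnum() or c.isspace()) for c in text) / len(text)

class ZScoreDetector:
    """Flags inputs whose feature value is far from a benign baseline."""

    def __init__(self, benign_samples: List[str], threshold: float = 3.0):
        # Needs at least two samples to estimate a standard deviation.
        scores = [symbol_ratio(s) for s in benign_samples]
        self.mu = mean(scores)
        self.sigma = stdev(scores) or 1e-9  # avoid division by zero
        self.threshold = threshold

    def is_anomalous(self, text: str) -> bool:
        z = abs(symbol_ratio(text) - self.mu) / self.sigma
        return z > self.threshold

detector = ZScoreDetector([
    "What is the weather today?",
    "Summarize this article, please.",
    "Translate hello to French.",
    "How do I reset my password?",
])
suspicious = detector.is_anomalous("}{ !!-- ;) (( ]] ++ **")
ordinary = detector.is_anomalous("What time is it?")
```

The baseline has to be refreshed as legitimate traffic changes; otherwise the detector either misses new attack styles or starts flagging normal inputs.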

Evaluating Adversarial Robustness

Benchmarking Robustness

Evaluating the robustness of LLMs against adversarial attacks involves using standardized benchmarks and datasets. General-purpose benchmarks like GLUE and SuperGLUE measure model performance under normal conditions, while adversarial variants such as AdvGLUE test the same kinds of tasks on perturbed inputs. Standard metrics such as accuracy, precision, recall, and F1 score become robustness measures when they are compared between clean and adversarial inputs: the smaller the drop, the more robust the model. These benchmarks help assess the effectiveness of various defense mechanisms implemented in LLMs.
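One way to turn ordinary metrics into a robustness measurement is to compute them on both the clean inputs and their adversarial counterparts, as in this small sketch. The `robustness_report` function and the sample predictions are hypothetical; only accuracy is shown, but precision, recall, and F1 can be compared in the same way.

```python
from typing import Dict, List

def robustness_report(
    labels: List[int], clean_preds: List[int], adv_preds: List[int]
) -> Dict[str, float]:
    """Compare predictions on clean inputs and their adversarial versions."""
    n = len(labels)
    clean_ok = [p == y for p, y in zip(clean_preds, labels)]
    adv_ok = [p == y for p, y in zip(adv_preds, labels)]
    clean_acc = sum(clean_ok) / n
    adv_acc = sum(adv_ok) / n
    # Attack success rate: of the examples the model originally got right,
    # the fraction that the adversarial version turned into an error.
    flipped = sum(c and not a for c, a in zip(clean_ok, adv_ok))
    asr = flipped / sum(clean_ok) if any(clean_ok) else 0.0
    return {
        "clean_accuracy": clean_acc,
        "adversarial_accuracy": adv_acc,
        "accuracy_drop": clean_acc - adv_acc,
        "attack_success_rate": asr,
    }

report = robustness_report(
    labels=[1, 0, 1, 1, 0],
    clean_preds=[1, 0, 1, 0, 0],
    adv_preds=[1, 1, 0, 0, 0],
)
```

Reporting the clean score alongside the adversarial one matters: a defense that narrows the gap only by lowering clean accuracy has not made the model more useful.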

Robustness Testing Frameworks

Robustness testing frameworks are essential for simulating adversarial environments and assessing the resilience of LLMs. Benchmark suites like AdvBench provide collections of adversarial prompts that let researchers evaluate model behavior in controlled scenarios. Real-world testing scenarios involve deploying LLMs in live environments to observe their responses to naturally occurring adversarial inputs. These frameworks help identify vulnerabilities and areas for improvement, helping ensure that LLMs are robust and reliable in practical applications.

Evaluating adversarial robustness is a continuous process that requires regular updates and testing to keep pace with evolving tactics. By employing comprehensive benchmarking and robust testing frameworks, developers can enhance the resilience of LLMs against malicious inputs.

Advances in Research and Future Directions

Innovative Approaches in Adversarial Defense

Recent advances in adversarial defense have introduced hybrid strategies that combine multiple techniques to enhance robustness. For instance, integrating adversarial training with robust optimization methods provides a more comprehensive defense mechanism. Additionally, the use of generative models for creating adversarial examples helps in preemptively identifying potential vulnerabilities. These generative models simulate a wide range of possible attacks, allowing developers to fortify their models against diverse adversarial tactics.

Ongoing Research and Development

Cutting-edge studies continue to explore new dimensions of adversarial robustness. Research collaborations between academia and industry are pivotal in this regard. These partnerships foster the development of innovative solutions, such as adaptive defense mechanisms that dynamically respond to evolving threats. Furthermore, advancements in explainable AI enhance our understanding of how adversarial attacks exploit model weaknesses, leading to more effective defense strategies.

Future Challenges and Opportunities

As adversarial attacks become increasingly sophisticated, anticipating new threats is crucial. Future research must focus on developing cross-disciplinary approaches that integrate insights from cybersecurity, machine learning, and cognitive science. This interdisciplinary effort can lead to more resilient LLMs capable of withstanding novel adversarial techniques. The evolving regulatory landscape also presents opportunities for establishing standardized guidelines and best practices, ensuring robust defenses across the AI industry.

In summary, continuous innovation and cross-disciplinary collaboration are essential for advancing adversarial robustness in LLMs, safeguarding against future malicious inputs.

Final Thoughts

The Necessity of Continuous Improvement

Adversarial robustness in LLMs is paramount for preserving the integrity and reliability of AI systems. Continuous improvement is necessary as attackers constantly develop new techniques to exploit vulnerabilities. By staying ahead of potential threats, we can keep LLMs resilient against adversarial inputs.

Call to Action for Stakeholders in AI and Cybersecurity

Stakeholders in AI and cybersecurity must collaborate to enhance the robustness of LLMs. This includes researchers, developers, and policymakers working together to develop and implement effective defense mechanisms. Regularly updating security protocols, conducting thorough testing, and sharing knowledge about emerging threats are essential steps in this collaborative effort.

The Utility of Protecto for Organizations

Organizations seeking to protect their AI systems from adversarial attacks can benefit from solutions like Protecto. Protecto helps organizations safeguard their LLMs against malicious inputs by providing advanced threat detection and mitigation tools. Implementing robust security measures ensures that AI-driven applications remain secure and trustworthy, maintaining user confidence and operational efficiency.

Ensuring the adversarial robustness of LLMs is a dynamic and ongoing challenge that requires a concerted effort from all stakeholders. By prioritizing continuous improvement and leveraging advanced solutions, we can defend against malicious inputs and secure the future of AI technology.