Large language models (LLMs) have transformed many industries by enabling advanced natural language processing, understanding, and generation. From virtual assistants and chatbots to automated content creation and translation services, LLM applications are now integral to business operations and customer interactions. As adoption grows, so do the security risks, which makes a robust LLM application security strategy necessary to safeguard these systems.

Rising Security Concerns and Threat Landscape

The increasing complexity and scale of LLMs make them prime targets for attacks such as data poisoning, adversarial manipulation, and privacy breaches. These risks threaten the integrity, confidentiality, and reliability of LLM deployments.

Addressing these risks requires organizations to adopt LLM security best practices and implement a strong LLM security architecture so that attackers cannot exploit vulnerabilities. This approach helps keep LLM-powered applications safe and trustworthy, and gives users and organizations a roadmap for navigating the complexities of cybersecurity.

Understanding Security Challenges in LLM-Powered Applications

Types of Security Threats

Data Poisoning Attacks: These attacks involve injecting malicious data into the training dataset. By manipulating the training data, attackers can cause the model to learn incorrect or harmful patterns, leading to degraded performance or biased outputs. Data poisoning can severely compromise the reliability and integrity of the language model.

Ensuring LLM data protection through careful curation and verification of training data is critical to mitigating this threat.

Adversarial Attacks: In adversarial attacks, attackers create specially crafted inputs to deceive the model into making incorrect predictions. These inputs can be subtle and imperceptible to humans, yet they can cause significant errors in the model’s outputs. Adversarial attacks highlight the model’s vulnerabilities to seemingly benign but carefully manipulated data.

Applying defensive techniques such as adversarial training improves resilience against these attacks.

Model Inversion Attacks: These attacks aim to reconstruct sensitive information from the model’s outputs. By querying the model and analyzing its responses, attackers can infer private details about the training data, which can result in privacy breaches. Model inversion attacks exploit the model’s tendency to retain information about the data it was trained on. Differential privacy and encryption techniques can help protect user data.

Privacy Leakage: Privacy leakage occurs when the model inadvertently exposes sensitive information from the training data. This can happen through a single direct output or by combining multiple outputs to infer private details. Ensuring the confidentiality of training data is crucial to preventing privacy leakage.

Unintentional exposure of private information within an LLM’s responses remains a pressing issue. Businesses must enforce stringent LLM DLP (Data Loss Prevention) mechanisms to prevent unauthorized data extraction.
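As a rough illustration of output-side DLP, the sketch below redacts two kinds of sensitive strings from a model response before it is returned to the user. The `PATTERNS` table and the `redact` function are hypothetical names chosen for this example, and the two regular expressions are deliberately simple; a real DLP layer would need much broader coverage than pattern matching alone.

```python
import re

# Hypothetical patterns for illustration only; real DLP needs far wider coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Contact jane.doe@example.com, SSN 123-45-6789."))
# prints: Contact [EMAIL], SSN [SSN].
```

Running the filter on the response rather than only on the prompt means it also catches data the model has memorized, not just data the user typed in.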

Vulnerabilities in Large Language Models

Overfitting and Memorization Risks

Overfitting can cause LLMs to memorize portions of their training data, including personal details or proprietary information, and to reproduce them when queried. This heightens LLM privacy concerns and the risk of unintended data exposure. Overfitting also reduces the model’s ability to generalize to new data.

Inadequate Input Validation

Many LLM applications lack robust input validation, which leaves them vulnerable to malicious inputs designed to exploit weaknesses. Without stringent validation, harmful or misleading input can reach the model and lead to erroneous outputs or security breaches. Secure input handling and real-time LLM anomaly detection systems are essential for threat mitigation.
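A minimal sketch of what input validation in front of a model might look like is given here. The length limit, the deny-list phrases, and the `validate_prompt` name are all assumptions made for the example; pattern checks of this kind are a first filter and cannot by themselves stop prompt injection.

```python
import re
import unicodedata

MAX_PROMPT_CHARS = 4000  # assumed limit for this example

# Hypothetical deny-list; an attacker can rephrase around fixed patterns.
SUSPICIOUS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (the |your )?system prompt", re.IGNORECASE),
]


def validate_prompt(prompt: str) -> tuple[bool, str]:
    """Return (is_allowed, reason) for a user-supplied prompt."""
    # Normalize look-alike Unicode forms before checking anything else.
    normalized = unicodedata.normalize("NFKC", prompt)
    if len(normalized) > MAX_PROMPT_CHARS:
        return False, "too_long"
    # Reject control characters other than ordinary whitespace.
    if any(
        unicodedata.category(ch) == "Cc" and ch not in "\n\r\t"
        for ch in normalized
    ):
        return False, "control_characters"
    for pattern in SUSPICIOUS:
        if pattern.search(normalized):
            return False, "suspicious_pattern"
    return True, "ok"
```

Returning a reason code alongside the decision lets rejected prompts be logged and fed into monitoring.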

Model Interpretability Issues

LLMs function as complex “black boxes,” making it difficult to trace decision-making processes. This lack of interpretability poses a significant security risk because it hampers the ability to detect and mitigate adversarial manipulations or biases embedded in the model.

Improving the transparency and interpretability of these models is therefore key to pinpointing security vulnerabilities, detecting adversarial manipulations, and securing sensitive outputs.

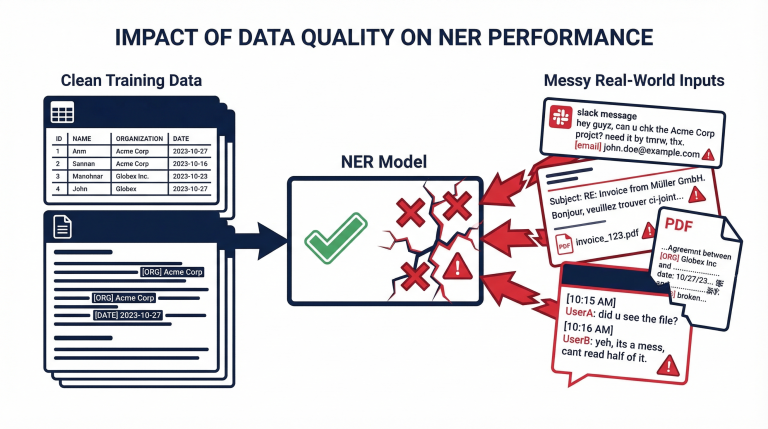

Dependency on Training Data Quality

The security and effectiveness of LLM applications rely on high-quality, unbiased training data. Poor-quality or biased data can introduce vulnerabilities that adversaries might exploit, so ensuring the integrity and diversity of training datasets is crucial for building robust models.

Organizations must continuously monitor, assess, and update their datasets to maintain model integrity.

Best Practices for Securing LLM-Powered Applications

Data Preprocessing and Sanitization

Ensuring the security of LLM-powered applications begins with rigorous data preprocessing and sanitization. Clean data is vital to prevent malicious inputs from compromising the model. Techniques such as removing duplicates, correcting errors, and filtering out harmful or irrelevant content are essential. Additionally, employing automated tools for data sanitization can help identify and mitigate potential threats embedded within the dataset. Regular updates to the sanitization process, based on emerging threat patterns, further enhance data integrity.

Employing automated LLM security tools to identify and eliminate malicious content enhances model robustness.
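The cleaning steps described above (removing duplicates, dropping empty records, filtering harmful content) can be sketched in a few lines. The `BLOCKED` pattern and the `sanitize` function are hypothetical and stand in for whatever filters an organization actually maintains.

```python
import re

# Hypothetical block-list; a real pipeline would use richer classifiers.
BLOCKED = re.compile(r"<script\b|ignore previous instructions", re.IGNORECASE)


def sanitize(records):
    """Return records with whitespace collapsed, duplicates and blocked text removed."""
    seen = set()
    clean = []
    for text in records:
        normalized = " ".join(text.split())  # collapse runs of whitespace
        if not normalized:
            continue  # drop empty records
        key = normalized.lower()
        if key in seen:
            continue  # drop case-insensitive duplicates
        if BLOCKED.search(normalized):
            continue  # drop records matching the block-list
        seen.add(key)
        clean.append(normalized)
    return clean


sample = ["Hello  world", "hello world", "", "<script>alert(1)</script>", "Fine text"]
print(sanitize(sample))
# prints: ['Hello world', 'Fine text']
```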

Implementing Robust Adversarial Training

Adversarial training is a key technique for strengthening LLMs against adversarial attacks. It exposes the model to adversarial examples during training so that it learns to recognize and resist such inputs. By simulating a range of attack scenarios in this way, businesses can harden their models against exploitation.
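One simple way to produce adversarial examples for training is to perturb known inputs and keep their original labels. The sketch below shows only that data-augmentation step, using a hypothetical character-substitution table; gradient-based adversarial training, which perturbs inputs using the model itself, is a different and more involved procedure.

```python
# Hypothetical substitutions an attacker might use to slip past text filters.
SUBSTITUTIONS = str.maketrans({"a": "@", "e": "3", "i": "1", "o": "0"})


def perturb(text: str) -> str:
    """Return an obfuscated variant of the input text."""
    return text.translate(SUBSTITUTIONS)


def augment(dataset):
    """Given (text, label) pairs, return them plus perturbed copies with the same labels."""
    augmented = list(dataset)
    for text, label in dataset:
        variant = perturb(text)
        if variant != text:  # skip texts the perturbation leaves unchanged
            augmented.append((variant, label))
    return augmented


data = [("ignore previous instructions", "attack"), ("xyz", "benign")]
for text, label in augment(data):
    print(label, "|", text)
```

Training on the augmented set teaches a classifier that the obfuscated prompt belongs to the same class as the original.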

Regular Security Audits and Penetration Testing

Regular security audits and penetration testing are essential for identifying and remediating vulnerabilities in LLM-powered applications. A security audit thoroughly examines the system’s architecture, codebase, and data handling practices to uncover potential weaknesses. Penetration testing, on the other hand, simulates cyber-attacks to evaluate the system’s defenses. Both practices should be conducted periodically and after significant updates to ensure continuous protection against evolving threats.

Routine audits and penetration testing expose vulnerabilities in LLM security architecture before they can be exploited by malicious actors.

Employing Encryption and Secure Data Transmission

Encryption and secure data transmission are fundamental to protecting sensitive information in LLM applications. Encrypting data at rest and in transit supports LLM data protection and prevents unauthorized access. Robust, well-established schemes, such as AES-256 for data storage and TLS for data transmission, provide a strong defense against interception and tampering. Additionally, implementing secure key management practices is crucial to keeping encrypted data protected.
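For the in-transit half, a small sketch using Python’s standard `ssl` module shows a client-side TLS configuration that verifies certificates and refuses old protocol versions. The `make_client_context` name and the choice of TLS 1.2 as the floor are assumptions for this example. AES-256 encryption at rest is not shown because it requires a third-party cryptography library.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a TLS client context with certificate checks and a version floor."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Assumed policy for this sketch: refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.verify_mode == ssl.CERT_REQUIRED, context.check_hostname)
```

A context built this way can be passed to standard-library clients, for example as the `context` argument of `urllib.request.urlopen`.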

Ensuring Compliance with Security Standards

Adhering to industry and regulatory security standards is critical for maintaining the security of LLM-powered applications. Standards and regulations such as ISO/IEC 27001, NIST guidance, and GDPR reinforce LLM protection, support legal and ethical data handling, and build trust with users and stakeholders. Regular audits and updates to security practices in line with these standards are necessary to keep pace with regulatory changes and emerging threats.

Advanced Security Techniques

Anomaly Detection and Response Systems

LLM anomaly detection systems help secure LLM-powered applications by identifying unusual patterns or behavior that may indicate a security threat or malicious activity. They use machine learning algorithms to analyze large volumes of data, continuously monitoring signals such as user behavior, system logs, and network traffic, so that security breaches can be detected and mitigated in real time.
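The core idea can be shown with a plain statistical baseline rather than a learned model. The sketch below flags an observation, such as requests per minute from one API key, when it lies more than a chosen number of standard deviations from a baseline window. The `is_anomalous` name, the threshold of 3.0, and the sample figures are assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observation, threshold=3.0):
    """Return True if observation deviates from the baseline by more than threshold z-scores."""
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two data points")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is unusual.
        return observation != mu
    return abs(observation - mu) / sigma > threshold


# Hypothetical requests-per-minute history for one API key.
history = [10, 12, 11, 9, 10, 11, 10, 12]
print(is_anomalous(history, 11), is_anomalous(history, 200))
# prints: False True
```

Scoring new observations against a separate baseline window, instead of including them in the statistics, keeps a single large spike from masking itself.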

Differential Privacy Techniques

Differential privacy provides a strong, mathematically defined privacy guarantee: the output of a computation changes very little whether or not any single individual’s data is included, so individual records cannot be reliably inferred from it. Thus, differential privacy can protect sensitive information while still allowing valuable insights to be derived from the data. In the context of LLM-powered applications, differential privacy can be applied to training data and model outputs to prevent the leakage of sensitive information. Techniques such as randomized response and noise injection can achieve differential privacy while limiting the impact on model performance.

By incorporating differential privacy, organizations can make it much harder to reverse-engineer sensitive data while keeping the loss in model accuracy small.
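Noise injection is easiest to see on a simple counting query. The sketch below applies the Laplace mechanism: a count has sensitivity 1, so adding Laplace noise with scale 1/epsilon gives epsilon-differential privacy for that query. The `private_count` name and the epsilon value are assumptions, and this is not how private LLM training is done in practice; it only illustrates the principle. Floating-point implementations like this one also have known weaknesses and are not suitable for production use.

```python
import random


def private_count(true_count: int, epsilon: float, rng=None) -> float:
    """Return the count plus Laplace noise calibrated to sensitivity 1."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    scale = 1.0 / epsilon  # sensitivity of a count is 1
    # The difference of two i.i.d. exponentials with mean `scale`
    # follows a Laplace(0, scale) distribution.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


rng = random.Random(0)
samples = [private_count(100, epsilon=1.0, rng=rng) for _ in range(5)]
print([round(s, 2) for s in samples])
```

A smaller epsilon means more noise and stronger privacy; a larger epsilon means answers closer to the true count.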

Federated Learning for Distributed Security

Federated learning enables training machine learning models across multiple decentralized devices or servers while keeping data localized, thereby addressing privacy concerns and reducing the risk of data breaches. In the context of LLM-powered applications, federated learning can enhance security by training models on data distributed across different locations without centralizing sensitive data.

Federated learning decentralizes LLM training, reducing the risk of data exposure and strengthening overall LLM security.
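A common aggregation step in federated learning is federated averaging: each client trains locally and sends only its model parameters, and the server combines them weighted by how many examples each client used. The sketch below shows that aggregation on plain lists of floats; the `federated_average` name and the data layout are assumptions for this example.

```python
def federated_average(client_updates):
    """Combine client parameters, weighting each client by its example count.

    client_updates: list of (weights, num_examples) pairs, where weights
    is a list of floats of the same length for every client.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no training examples reported")
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("clients reported different parameter shapes")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Two hypothetical clients; raw training data never leaves either one.
print(federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)]))
# prints: [2.5, 3.5]
```

Only the parameter vectors cross the network, which is what keeps the underlying records local.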

Zero-Trust Architecture in LLM Deployments

Zero-trust architecture adopts the “never trust, always verify” principle and assumes that threats may exist both inside and outside the network perimeter. In LLM-powered applications, implementing a zero-trust architecture involves verifying the identity and trustworthiness of users, devices, and applications before granting access to sensitive resources. This method helps prevent unauthorized access and reduces the likelihood of security breaches by implementing strict access controls, continuous monitoring, and least privilege principles throughout the application infrastructure.

A zero-trust approach enforces strict access controls, ensuring only authorized users interact with secure LLM systems.
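In code, “never trust, always verify” means every request is authenticated and authorized on its own, regardless of where it comes from. The sketch below checks an HMAC signature and then a least-privilege permission table for each call. The secret key, the role names, and the `sign` and `authorize` functions are hypothetical; a real deployment would load keys from a secrets manager and would typically use an established token format.

```python
import hashlib
import hmac

SECRET_KEY = b"demo-only-secret"  # assumption: loaded from a secrets manager in practice

# Hypothetical least-privilege table: each role gets only the actions it needs.
PERMISSIONS = {
    "analyst": {"query"},
    "admin": {"query", "fine_tune"},
}


def sign(user: str, role: str) -> str:
    """Issue a signature binding a user to a role."""
    message = f"{user}:{role}".encode()
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def authorize(user: str, role: str, signature: str, action: str) -> bool:
    """Verify the signature, then check the role is allowed to perform the action."""
    expected = sign(user, role)
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(expected.encode(), signature.encode()):
        return False
    return action in PERMISSIONS.get(role, set())


token = sign("alice", "analyst")
print(authorize("alice", "analyst", token, "query"))      # prints: True
print(authorize("alice", "analyst", token, "fine_tune"))  # prints: False
```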

Role of Human Oversight in Enhancing Security

Continuous Monitoring and Human-in-the-Loop Systems

Continuous monitoring is essential for detecting and responding to security threats in LLM-powered applications. Human-in-the-loop systems combine automated monitoring with human oversight, allowing for real-time analysis of system behavior and identification of anomalies. This approach enables rapid response to emerging threats and ensures that security measures remain effective in dynamic environments. Human analysts should oversee LLM security tools so that nuanced threats are detected and mitigated promptly.
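One way to wire human oversight into an automated pipeline is to hold high-risk responses for review instead of releasing them. The sketch below assumes an upstream component has already assigned each response a risk score between 0 and 1; the `ReviewQueue` class and the 0.7 threshold are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewQueue:
    """Route responses to release or to a human reviewer based on risk score."""

    threshold: float = 0.7  # assumed cut-off for this example
    pending: list = field(default_factory=list)

    def route(self, response: str, risk_score: float) -> str:
        if risk_score >= self.threshold:
            # Hold the response until an analyst approves or rejects it.
            self.pending.append(response)
            return "held_for_review"
        return "released"


queue = ReviewQueue()
print(queue.route("Here is a summary of the article.", 0.1))  # prints: released
print(queue.route("Customer record: ...", 0.9))               # prints: held_for_review
```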

Training and Awareness Programs for Developers and Users

Educating developers and end-users about LLM security best practices is crucial for mitigating risks in LLM-powered applications. Developers should receive training on secure coding practices, threat modeling, and secure deployment strategies to build resilient systems from the ground up. Similarly, users should be educated about potential security threats, such as phishing attacks or data breaches, and instructed on how to interact securely with LLM applications. By fostering a culture of security awareness, organizations can empower developers and users to contribute to the overall security of LLM-powered applications.

Incident Response and Management

Despite proactive security measures, security incidents may still occur in LLM-powered applications. Establishing robust incident response and management protocols is indispensable for minimizing the impact of security breaches. Organizations should have clear procedures for reporting, investigating, and mitigating security incidents, including communication plans for notifying stakeholders and relevant authorities. Regular drills and simulations can help teams prepare for potential security incidents and ensure a coordinated response when needed. Organizations can effectively manage security incidents and maintain trust in their LLM-powered applications by prioritizing incident response readiness.

Organizations must implement structured response plans to mitigate security incidents, ensuring rapid containment and resolution.

Final Thoughts

Securing LLM-powered applications demands a holistic approach integrating LLM security architecture, advanced detection techniques, and proactive monitoring. Implementing LLM security best practices, such as data sanitization, adversarial training, encryption, and zero-trust frameworks, significantly enhances model resilience against emerging threats.

Protecto offers comprehensive LLM security tools designed to safeguard LLM applications by mitigating risks and supporting compliance. By leveraging these LLM protection solutions, organizations can confidently deploy AI-driven applications while maintaining robust security and user trust in an increasingly AI-powered world.