AI data governance is the structured framework that ensures sensitive data remains protected when artificial intelligence systems are used.

An AI data governance framework defines how organizations discover, classify, protect, monitor, and audit data across AI systems. Unlike traditional governance, data governance for AI must work across prompts, responses, APIs, retrieval workflows, AI agents, and model outputs in real time.

Traditional data governance focuses on data at rest. It manages databases, access controls, storage policies, and compliance documentation.

AI fundamentally changes this environment, which is why understanding AI data privacy is crucial.

When organizations use large language models, AI agents, or retrieval-based systems, data flows dynamically. Information moves through prompts, responses, APIs, and multi-step workflows. Sensitive data can appear inside user queries, documents, or contextual inputs in real time.

That is where data governance for AI becomes different.

AI data governance operates at runtime. It monitors, detects, and protects sensitive information before it reaches external models or leaves a jurisdiction. It ensures compliance with regulations such as DPDP, GDPR, HIPAA, and PDPL while enabling AI systems to operate accurately.

For enterprises, AI-enabled data governance is no longer only about documentation. It is about enforcing privacy, security, access control, masking, and auditability inside every AI interaction.

Without proper AI data governance, organizations face three major risks:

- Data leakage across borders.

- Exposure of PII, PHI, or financial records in prompts.

- Loss of audit visibility across AI workflows.

Why Traditional DLP Tools Fail in AI Environments

According to a recent Stanford AI Index report, 78% of companies used AI in at least one business function in 2024, up from 55% the year before. Here are some more AI data privacy statistics and trends.

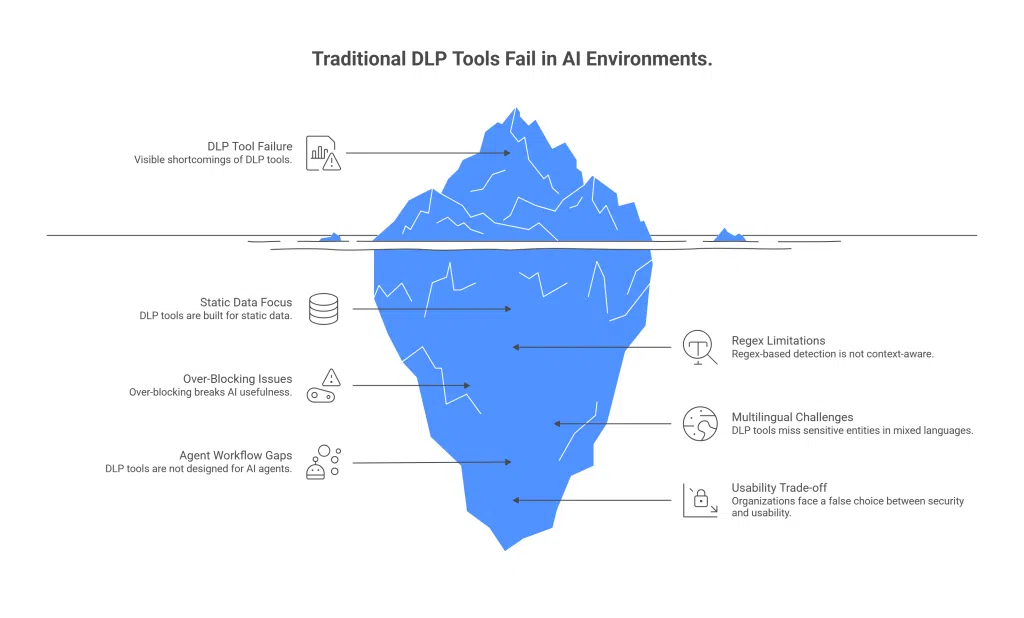

Traditional DLP tools were built for email servers, file transfers, and static databases. AI workflows are very different. Here’s where the gap appears:

Built for static data, not dynamic prompts: DLP systems scan stored files or outgoing emails. AI systems process real-time prompts, responses, agent chains, and RAG pipelines. Static inspection models cannot handle this flow.

Regex-based detection is not context-aware: Most legacy DLP tools rely on patterns and predefined rules. They struggle to detect sensitive data hidden in unstructured, conversational, or ambiguous text.

Over-blocking breaks AI usefulness: When DLP redacts entire sections of prompts, it removes context. LLMs then generate incomplete or inaccurate responses.

Under-protection in multilingual environments: AI inputs often contain mixed languages, typos, abbreviations, or malformed text. Traditional tools miss sensitive entities in such cases.

Not designed for AI agents or RAG workflows: Modern AI systems involve multi-step reasoning and data retrieval. DLP tools are not built to track sensitive data across these chained interactions.

Security vs usability trade-off: Organizations often face a false choice between strict blocking that reduces AI accuracy and relaxed controls that increase compliance risk.

That is why modern enterprises need an AI contextual governance solution that can understand the data, user role, workflow context, jurisdiction, and risk level before deciding what should be allowed, masked, blocked, or logged.
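A contextual decision of this kind can be sketched as a small policy function. This is a minimal illustration, not a reference implementation: the role names, region codes, risk scale, and rule ordering below are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    MASK = "mask"
    BLOCK = "block"


# Hypothetical roles that may see raw sensitive data inside the home region.
PRIVILEGED_ROLES = {"compliance_officer"}


@dataclass(frozen=True)
class RequestContext:
    user_role: str               # hypothetical role name, e.g. "analyst"
    data_categories: frozenset   # categories detected in the prompt, e.g. {"PII"}
    destination_region: str      # where the target model is hosted
    home_region: str             # where the data is required to stay
    risk_level: int              # assumed scale: 0 (low) to 3 (critical)


def decide(ctx: RequestContext) -> tuple[Action, bool]:
    """Return (action, should_log) for one AI interaction."""
    crosses_border = ctx.destination_region != ctx.home_region
    has_sensitive = bool(ctx.data_categories)

    # Critical-risk data never leaves the home region, even masked.
    if ctx.risk_level >= 3 and crosses_border:
        return Action.BLOCK, True
    # Sensitive data is masked when it crosses a border or the user is not privileged.
    if has_sensitive and (crosses_border or ctx.user_role not in PRIVILEGED_ROLES):
        return Action.MASK, True
    # Everything else passes; log only when sensitive data was involved.
    return Action.ALLOW, has_sensitive
```

Under these assumed rules, an analyst sending a PII-bearing prompt to a model hosted in another region gets `MASK` with logging enabled, while the same prompt at critical risk is blocked outright.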

Core Components of an AI Data Governance Framework

India’s AI governance approach is not about restricting AI. It is about enabling innovation while reducing risk. The Ministry of Electronics and Information Technology released the India AI Governance Guidelines in November 2025 to create a balanced, future-ready framework.

Here are the core components that define India’s AI data governance direction.

1. Trust as the Foundation

Trust is not optional. The guidelines clearly state that without trust, AI adoption will stagnate.

In practical terms, this means AI systems must be transparent, accountable, and secure. Organizations must build systems that users, regulators, and institutions can rely on. Trust must exist across the entire AI value chain, from developers to deployers.

2. People-First Governance

AI systems must be human-centric. This includes human oversight, ethical safeguards, and empowerment. AI should support citizens, not replace accountability. In data governance terms, this translates to consent management, explainability, grievance redressal mechanisms, and protection of vulnerable groups.

3. Balanced and Pro-Innovation Regulation

India does not aim to regulate the underlying AI technology itself. Instead, it focuses on governing AI applications through sectoral regulators.

Existing laws, such as the IT Act and the Digital Personal Data Protection Act, can already address many AI-related risks. Targeted amendments may be introduced only where gaps exist. This avoids overregulation while still ensuring accountability.

4. Infrastructure and Data Access

A strong AI data governance framework requires access to quality datasets and compute resources.

The guidelines recommend expanding access to high-quality, representative datasets, enabling data-sharing frameworks, and improving access to compute resources. Digital Public Infrastructure can also be integrated with AI to ensure scalable, inclusive adoption. Without structured data governance, safe AI development is not possible.

5. Risk Assessment and Mitigation

AI systems introduce risks such as malicious use, bias, transparency failures, systemic risks, and national security threats.

India’s framework recommends developing an India-specific risk assessment model. It also supports incident reporting systems and accountability mechanisms to monitor harm.

6. Accountability Across the AI Value Chain

The guidelines emphasize graded liability based on function, risk level, and due diligence. Developers, deployers, and users cannot operate in ambiguity. Clear classification, responsibility allocation, and enforcement mechanisms are essential for effective AI data governance.

For AI agents and RAG workflows, accountability should also include who accessed which data, which model processed it, what was masked, and what was returned in the final response.
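One way to capture those four facts is a per-interaction record. The sketch below is illustrative only: the field names are hypothetical, and storing a hash of the response instead of the response text is an assumption made here so the record itself does not hold sensitive content.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class InteractionRecord:
    user_id: str            # who made the request
    model_id: str           # which model processed it
    sources_accessed: list  # which data sources were retrieved
    masked_counts: dict     # entity type -> number of values masked
    response_sha256: str    # fingerprint of what was returned
    timestamp: str          # UTC, ISO 8601

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


def build_record(user_id, model_id, sources, masked_counts, response_text):
    """Assemble an accountability record for a single AI interaction."""
    return InteractionRecord(
        user_id=user_id,
        model_id=model_id,
        sources_accessed=list(sources),
        masked_counts=dict(masked_counts),
        response_sha256=hashlib.sha256(response_text.encode("utf-8")).hexdigest(),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
```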

7. Institutional Oversight and Whole-of-Government Approach

AI governance requires coordination. The framework proposes institutional mechanisms such as an AI Governance Group and technical expert committees to support policy oversight. This ensures cross-sector collaboration and future readiness.

8. Transparency and Content Authentication

The guidelines also highlight the importance of content authentication, watermarking, and traceability to address risks like deepfakes. Transparency across the AI value chain improves regulatory clarity and public confidence.

9. Capacity Building and Awareness

Governance cannot work without education. The framework calls for training programs for government officials, regulators, and law enforcement, as well as public awareness initiatives. AI literacy strengthens compliance and responsible adoption.

10. Agile and Future-Ready Design

AI evolves rapidly. Governance must evolve with it. The guidelines recommend flexible, principle-based frameworks that allow periodic review, horizon scanning, and recalibration.

Step-by-Step Implementation Guide

Step 1: Map Your AI Data Flows

To implement data privacy in AI, start by identifying where AI is currently used across the organization. This includes chatbots, LLM APIs, copilots, RAG systems, and agent workflows. You cannot implement AI data governance unless you know exactly where sensitive data enters and exits your AI stack.
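A flow map does not need special tooling to start with. As a rough sketch, an inventory can be a list of records that note where data enters and exits each AI system; the system names and fields below are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIDataFlow:
    name: str
    kind: str                  # "chatbot", "llm_api", "copilot", "rag", or "agent"
    entry_points: tuple        # where data enters this AI system
    exit_points: tuple         # where data leaves it
    leaves_org_boundary: bool  # True if any exit is outside your infrastructure


# Hypothetical inventory entries.
INVENTORY = [
    AIDataFlow("support-chatbot", "chatbot",
               ("web chat widget",), ("hosted LLM API",), True),
    AIDataFlow("policy-search", "rag",
               ("employee query", "document index"), ("internal model",), False),
]


def external_exits(flows):
    """Map each flow that leaves the organization to its exit points."""
    return {f.name: f.exit_points for f in flows if f.leaves_org_boundary}
```

Calling `external_exits(INVENTORY)` lists the flows that deserve review first, since they are the ones where sensitive data can leave your environment.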

Step 2: Classify Sensitive Data

Define what qualifies as sensitive under DPDP and any other data privacy regulations that apply to you. This may include PII, financial data, health records, credentials, and proprietary business information. Clear classification ensures that AI data governance is risk-based, not generic.
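A risk-based classification can be expressed as a simple lookup from data category to sensitivity tier. The tiers and assignments below are assumptions chosen for the example; they are not taken from DPDP or any other regulation and should be set by your own legal and compliance teams.

```python
from enum import IntEnum


class Tier(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical mapping of data categories to tiers.
CLASSIFICATION = {
    "pii": Tier.CONFIDENTIAL,
    "financial": Tier.RESTRICTED,
    "health": Tier.RESTRICTED,
    "credentials": Tier.RESTRICTED,
    "proprietary": Tier.CONFIDENTIAL,
}


def highest_tier(categories):
    """Return the strictest tier among the detected categories.

    Unknown categories are treated as RESTRICTED so the policy fails closed.
    """
    return max(
        (CLASSIFICATION.get(c, Tier.RESTRICTED) for c in categories),
        default=Tier.PUBLIC,
    )
```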

Step 3: Define Jurisdiction Boundaries

Determine which data must remain within India and which AI systems operate outside national borders. Map cross-border exposure risks clearly. Sovereignty decisions must be built into your architecture, not handled as afterthoughts.

For organizations using global LLM APIs, data sovereignty for AI should be addressed before deployment, not after sensitive prompts begin moving across regions.
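Building the sovereignty decision into the architecture can be as simple as a check that runs before any request is routed. In this sketch the endpoint names, region codes, and residency rules are placeholders, not real services or legal requirements.

```python
# Hypothetical residency rules: data category -> regions where raw data may be processed.
RESIDENCY_RULES = {
    "pii": {"IN"},
    "financial": {"IN"},
    "marketing_copy": {"IN", "US", "EU"},
}

# Hypothetical endpoints and the regions where they are hosted.
ENDPOINT_REGIONS = {
    "internal-llm": "IN",
    "global-llm-api": "US",
}


def may_send_raw(category: str, endpoint: str) -> bool:
    """Return True only if raw data of this category may reach this endpoint.

    Unknown categories and unknown endpoints are denied by default.
    """
    region = ENDPOINT_REGIONS.get(endpoint)
    allowed_regions = RESIDENCY_RULES.get(category, set())
    return region is not None and region in allowed_regions
```

When the check returns `False`, the request can be masked, rerouted to an in-region model, or blocked, depending on policy.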

Step 4: Deploy AI-Native Detection Controls

Use semantic detection that understands context, not just patterns. AI workflows often contain multilingual text, typos, and unstructured inputs that traditional tools miss. Effective data governance in AI must operate in real time across dynamic prompts and agent chains.
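The gap between pattern matching and context can be shown with a toy example. The second detector below is still rule-based; it only stands in for a semantic model to show why surrounding cues matter. A production system would more likely use a trained entity-recognition model, which is not shown here. The 12-digit account format and the cue words are assumptions.

```python
import re

# Strict pattern: exactly 12 consecutive digits.
STRICT_PATTERN = re.compile(r"\b\d{12}\b")

# Context cue: an account-like word, a short gap, then 9 to 18 digits
# that may be separated by spaces or hyphens.
CUE_PATTERN = re.compile(
    r"\b(?:account|acct|a/c)\b[^0-9]{0,15}((?:\d[\s-]?){9,18})",
    re.IGNORECASE,
)


def pattern_only(text: str) -> list:
    """Find account numbers using the strict pattern alone."""
    return STRICT_PATTERN.findall(text)


def with_context(text: str) -> list:
    """Find account numbers using the cue word, then normalize the digits."""
    return [re.sub(r"\D", "", m.group(1)) for m in CUE_PATTERN.finditer(text)]


messy = "pls check acct no 1234 5678 9012 asap"
print(pattern_only(messy))   # the strict pattern finds nothing
print(with_context(messy))   # the cue-based detector recovers the number
```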

Step 5: Implement Context-Preserving Masking

Replace sensitive entities with structured tokens that preserve format and meaning. The goal is to protect data without breaking AI reasoning. If masking destroys context, model outputs lose accuracy and business value. This is especially important for AI-enabled data governance because the system must protect sensitive values while still allowing the model to understand business context.
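A minimal sketch of this idea replaces each detected value with a typed, numbered token, so the model still knows it is looking at a person or an email address and repeated mentions stay linked. It assumes an upstream detector has already produced `(value, entity_type)` pairs; the token format is invented for the example.

```python
import re


def mask(text: str, entities: list) -> tuple:
    """Replace detected values with typed tokens.

    entities: list of (value, entity_type) pairs from an upstream detector.
    Returns (masked_text, vault) where vault maps each token to its original value.
    """
    token_for = {}  # original value -> token
    vault = {}      # token -> original value
    counters = {}   # entity type -> how many tokens issued so far

    for value, etype in entities:
        if value in token_for:
            continue  # the same value always gets the same token
        counters[etype] = counters.get(etype, 0) + 1
        token = f"<{etype}_{counters[etype]}>"
        token_for[value] = token
        vault[token] = value

    if not token_for:
        return text, vault

    # Single pass, longest values first, so overlapping values are handled safely.
    pattern = re.compile(
        "|".join(re.escape(v) for v in sorted(token_for, key=len, reverse=True))
    )
    return pattern.sub(lambda m: token_for[m.group(0)], text), vault
```

For example, masking "Priya Nair emailed priya@example.com. Call Priya Nair back." with a person and an email entity yields "<PERSON_1> emailed <EMAIL_1>. Call <PERSON_1> back.", which keeps the sentence structure the model needs.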


Step 6: Create a Sovereign AI Processing Layer

Ensure sensitive data is masked before being sent to external LLMs. Only tokenized information should move outside your jurisdiction. Raw customer data must remain inside secure infrastructure at all times.
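The flow through such a layer can be sketched as mask, call, then restore. Here `detect` and `call_external_llm` are hypothetical callables supplied by the caller; no real detection engine or LLM client is assumed.

```python
def governed_call(prompt: str, detect, call_external_llm) -> str:
    """Send only tokenized text to an external model, then restore values locally.

    detect: callable returning (value, entity_type) pairs found in the prompt.
    call_external_llm: callable that takes text and returns the model response.
    """
    vault = {}  # token -> original value; never leaves this function
    masked = prompt
    seen = set()

    for value, etype in detect(prompt):
        if value in seen:
            continue
        seen.add(value)
        token = f"<{etype}_{len(vault) + 1}>"
        vault[token] = value
        masked = masked.replace(value, token)

    # Only the masked prompt crosses the jurisdiction boundary.
    response = call_external_llm(masked)

    # Restore original values inside the secure environment.
    for token, value in vault.items():
        response = response.replace(token, value)
    return response
```

In a real deployment the vault would live in encrypted storage with access controls rather than in a local variable.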

Step 7: Enable Full Auditability

Maintain detailed logs of detection, masking, and AI interactions. Implement role-based access controls and immutable audit trails. Compliance under India’s AI data governance framework requires traceability, not assumptions.
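One common way to make an audit trail tamper-evident is to chain entries by hash, so changing any earlier entry invalidates everything after it. The sketch below shows the idea in memory only; it is an illustration under that assumption, and true immutability would also need write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


class AuditLog:
    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        payload = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        """Add an event, linking it to the hash of the previous entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        entry_hash = self._digest(prev_hash, event)
        self._entries.append(
            {"event": event, "prev_hash": prev_hash, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain and report whether any entry was altered."""
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True
```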

Step 8: Monitor and Refine Continuously

Track detection accuracy, false positives, and workflow changes. AI systems evolve quickly, and governance controls must keep pace. Regular monitoring ensures that your AI data governance remains secure and compliant over time.
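Detection accuracy is usually tracked as precision and recall against a hand-labeled sample. A minimal sketch, assuming each detected or labeled entity is represented by a hashable identifier such as a `(document_id, start, end)` tuple:

```python
def detection_metrics(predicted: set, actual: set) -> dict:
    """Compare detected entities against a labeled ground-truth set."""
    true_positives = len(predicted & actual)
    false_positives = len(predicted - actual)  # flagged but not sensitive
    false_negatives = len(actual - predicted)  # sensitive but missed

    flagged = true_positives + false_positives
    labeled = true_positives + false_negatives
    return {
        "precision": true_positives / flagged if flagged else 0.0,
        "recall": true_positives / labeled if labeled else 0.0,
        "false_positives": false_positives,
        "false_negatives": false_negatives,
    }
```

Low recall means sensitive data is slipping through, while low precision means the system is over-masking and may be degrading AI output quality, so both numbers are worth watching over time.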

Case Study: Enabling Sovereign AI in Financial Services Without Compromising Accuracy

A leading financial institution wanted to adopt large language models across its operations. However, strict data sovereignty laws prevented customer and financial data from leaving the country. Using public LLM APIs directly would violate compliance requirements. Traditional DLP tools were tested but failed. They either over-blocked prompts and broke AI outputs or missed sensitive data in multilingual and malformed inputs.

The bank implemented Protecto inside its private cloud as a sovereign AI governance layer. Its semantic detection engine accurately identified PII and financial data, even in mixed Arabic-English text. Sensitive information was replaced with context-preserving tokens, which kept AI reasoning intact. Global LLMs processed masked prompts while raw data stayed within the jurisdiction.

The results were measurable.

The system maintained 99% recall and 96% precision in detection, along with 85% semantic similarity. It went live in four weeks, enabling full compliance without limiting AI innovation.

Why Protecto Is an AI-Enabled Data Governance Solution for Privacy Protection

Unmatched Detection Accuracy: Protecto’s DeepSight engine delivers over 99% recall in identifying PII, PHI, PCI, and financial data, even in multilingual, malformed, or mixed-language text across 50+ languages.

Context-Preserving Masking: Unlike legacy DLP tools that over-block and break AI reasoning, Protecto uses intelligent tokenization that maintains data structure and semantic intent, retaining over 85% cosine similarity in AI outputs.

AI-Native Architecture: Built specifically for modern AI workflows, including LLM prompts, multi-agent pipelines, and RAG systems, rather than retrofitted from email security tools of a decade ago.

Regulatory Compliance Out of the Box: Protecto supports GDPR, HIPAA, DPDP, PDPL, and SAMA compliance with immutable audit logs, encrypted token storage, and role-based vault access.

Data Sovereignty by Design: Sensitive data never leaves your jurisdiction. Protecto’s local vault deployment keeps raw data on-premises or in your private cloud while still enabling global AI models.

Rapid Deployment: Go from pilot to production in under four weeks, significantly faster than generic tools (2–4 months) or DIY solutions (6–18 months).

Trusted by Enterprise Leaders: Used by Fortune 100 companies and regulated industries, including banking and healthcare, with proven results in high-stakes, real-world deployments.

FAQs on AI Data Governance Framework

What is an AI data governance framework?

An AI data governance framework is a structured approach for managing, protecting, monitoring, and auditing data used by AI systems. It covers prompts, responses, APIs, RAG pipelines, AI agents, sensitive data classification, masking, access control, compliance, and audit trails.

Why is data governance for AI different from traditional data governance?

Data governance for AI is different because AI systems process data dynamically during prompts, retrieval, agent actions, and model responses. Traditional governance focuses mainly on stored data, while AI data governance must protect sensitive information at runtime before it reaches a model or leaves an approved environment.

How is AI data governance different from model governance?

AI data governance focuses on protecting and controlling the data flowing into and out of AI systems. Model governance, on the other hand, deals with model performance, bias monitoring, validation, and lifecycle management.

Does AI data governance apply only to external LLM usage?

No. Governance is required even for internally hosted models. Sensitive data can leak across departments, logs, or agent workflows if proper controls are not implemented.

Can AI data governance impact model performance?

If implemented poorly, yes. Over-blocking or aggressive redaction can reduce output quality. Well-designed frameworks preserve semantic context while protecting sensitive data.

How often should AI governance controls be reviewed?

AI systems evolve rapidly. Governance controls should be reviewed regularly, especially when new models, integrations, or regulatory updates are introduced.