In 2009, the U.S. Congress passed the Health Information Technology for Economic and Clinical Health (HITECH) Act as part of the American Recovery and Reinvestment Act, with the aim of accelerating the adoption of Electronic Health Records (EHRs). The goals were ambitious and well-intentioned:

- Digitize healthcare information

- Improve care coordination

- Reduce medical errors

The federal push worked. EHR adoption skyrocketed over the following decade. As recently as 2017, hospitals were increasing EHR adoption by over 14% annually due to HITECH incentives. By the early 2020s, an estimated 78% of office-based physicians and 96% of hospitals were using certified EHRs.

But wider adoption didn’t bring progress everywhere it mattered.

When Digital Tools Became Digital Burdens

While EHRs made health information more accessible and standardized, they also introduced an unintended consequence: a massive rise in administrative work.

Studies show that physicians often spend 50% or more of their time interacting with EHR systems (documenting care, entering orders, reviewing results, and managing messages) instead of interacting with patients.

In fact:

- For every hour a doctor spends with patients, they may spend two additional hours on clerical work such as documentation, billing, and compliance reporting.

- EHR documentation is cited as a contributor to clinician burnout by more than 77% of surveyed healthcare leaders.

- Physicians frequently report that documentation requirements are moderately to highly excessive, significantly impacting workflow and job satisfaction.

Healthcare’s shift to digital records improved compliance and data sharing, but it also shifted clinicians’ time away from patient care toward screens and paperwork.

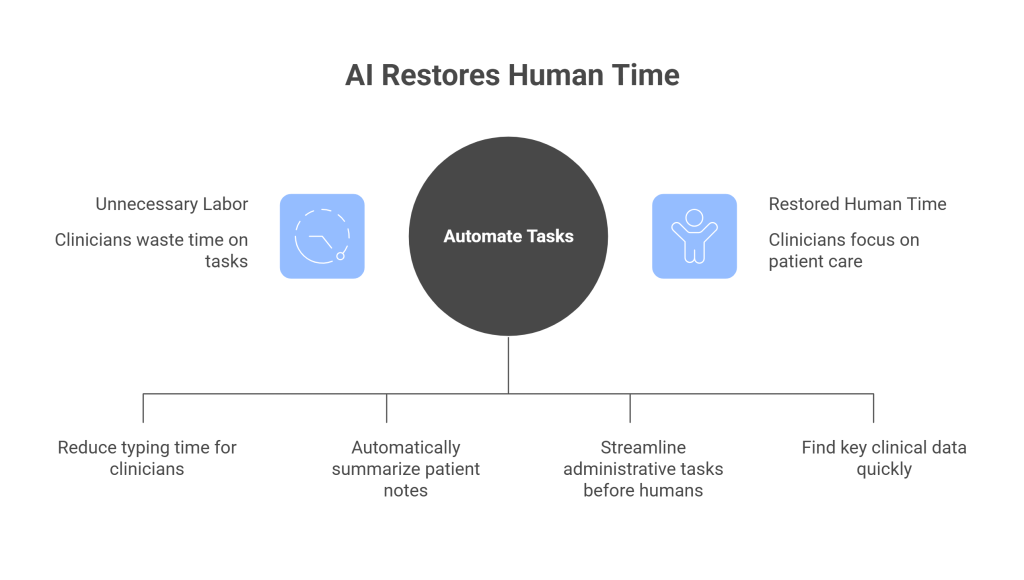

The Real Opportunity for AI: Restoring Human Time

AI isn’t a buzzword. It’s a second chance to fix what broke when technology optimized for compliance rather than care.

The opportunity is not to cut the workforce, but to eliminate unnecessary labor:

- Automate documentation so clinicians aren’t typing notes for hours every day

- Summarize patient records and visit notes automatically

- Pre-review claims and administrative tasks before they reach a human

- Surface key clinical insights from fragmented data without manual searching

And there are early signs, along with projections, that this can work:

- AI and automation technologies are projected to save up to 200,000 clinical hours per day by streamlining record workflows and reducing manual charting.

- In a 2025 survey, 68% of physicians reported increased use of AI to support clinical documentation, with 36% using AI for administrative workflows such as scheduling and claims.

- Ambient AI documentation systems have been linked to reductions in note-taking time and after-hours work, with clinicians reporting regained time and focus.

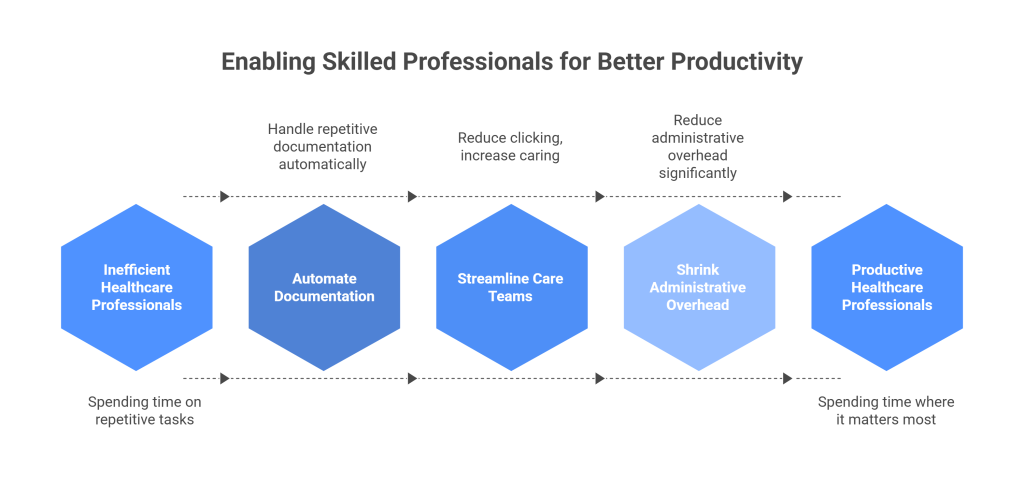

What Better Productivity Looks Like

True productivity isn’t doing more with fewer people. It’s enabling skilled professionals to spend their time where it matters most.

In healthcare, that means:

- Doctors and nurses spend more time with patients

- Automation handles repetitive documentation

- Care teams spend less time clicking and more time caring

- Administrative overhead shrinks instead of growing

Healthcare taught us a valuable lesson: technology must be human-centered to truly be productive. When it isn’t, it creates new work instead of reducing old work.