The NIST AI Risk Management Framework (AI RMF) is a guide that helps organizations identify and reduce risks in AI systems. The U.S. National Institute of Standards and Technology (NIST) released it in January 2023. The framework is built around four core functions, Govern, Map, Measure, and Manage, and is meant to help teams use AI responsibly. It is designed to apply regardless of your industry or the kind of AI system you use. Rather than telling you which tools to use, it shows what to think about and when.

Key Takeaways

- The NIST AI RMF has four functions: Govern, Map, Measure, and Manage.

- Voluntary framework, but regulators are referencing it more each year.

- A Generative AI Profile (NIST-AI-600-1) was added in July 2024, covering risks specific to generative systems such as LLMs.

- 77% of organizations are building or refining AI governance programs as of 2025.

- Only 36% have adopted a formal framework like NIST AI RMF.

- Protecto’s Privacy Vault and CBAC directly support the framework’s core functions.

Why Does This Framework Even Exist?

Most AI teams are moving fast. They’re shipping models, connecting agents to databases, letting outputs feed into real business decisions. But ask who owns the risk when something goes wrong, and the answer gets fuzzy fast.

That’s not a people problem. It’s a structural problem. And that’s exactly what the NIST AI Risk Management Framework is designed to address.

NIST released AI RMF 1.0 on January 26, 2023, after a public development process that included workshops and multiple rounds of input from industry experts. The goal wasn’t to add another layer of compliance. It was to give organizations a common way to understand and manage AI risk, whether you’re a hospital, a bank, or a software company.

It’s not a checklist. There’s no pass or fail. Think of it as a thinking structure that forces the right conversations before something breaks, rather than after.

And while it’s technically voluntary, that word is doing less work every year. Colorado’s AI Act offers organizations a legal affirmative defense, but only if, among other conditions, they can show compliance with the NIST AI Risk Management Framework, ISO/IEC 42001, or another recognized risk management framework. Regulators at the FDA, SEC, and CFPB are referencing it more often. It’s drifting from “best practice” toward “expected baseline” faster than most teams realize.

The Data Makes the Case

According to the EY Responsible AI Pulse Survey of 975 C-suite leaders across 21 countries, 99% of organizations reported financial losses from AI-related risks. Nearly two-thirds lost more than $1 million. The survey was conducted in August and September 2025.

That’s not a projection. It’s already happened, across every major industry.

The IAPP AI Governance Profession Report 2025 found 77% of organizations are building or refining AI governance programs. But only 36% have adopted a formal framework like NIST AI RMF. The intent is there. The execution is lagging.


Breaking Down the Four Core Functions

The NIST AI RMF is organized around four functions. They’re not a linear process you complete once. They’re meant to run together, continuously, throughout the life of any AI system you build or deploy.

GOVERN: Who Actually Owns This?

Governance is the part teams often skip, or handle with a policy document nobody reads. But GOVERN is asking something more specific:

- Who makes the call when a model behaves unexpectedly?

- Who decides if it’s safe to ship?

- Who’s accountable when an AI agent accesses data it shouldn’t have?

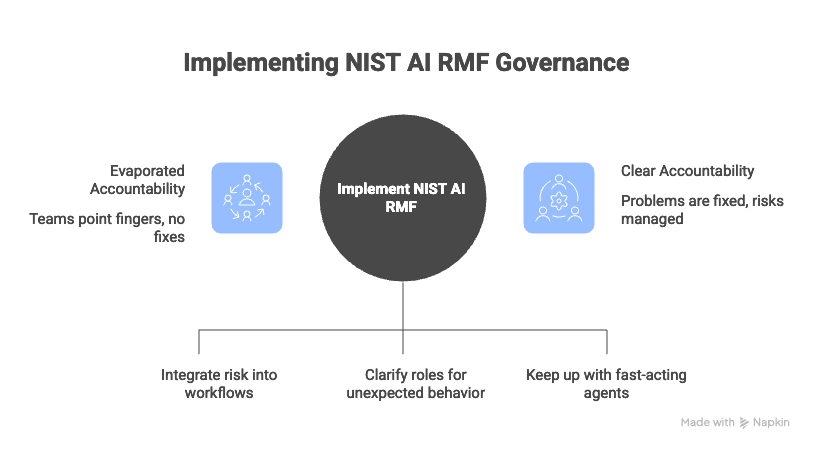

Without real answers, accountability evaporates. Teams point at each other. Nobody fixes the actual problem.

This function pushes organizations to embed risk decisions into actual workflows, not just committees that meet quarterly. For teams running multi-agent AI systems, this matters even more. Agents act fast. Your governance structure needs to keep up.

MAP: Do You Know What You’ve Built?

Before you can assess risk, you need a clear picture of what you’re running. MAP asks organizations to document their AI systems properly: what does each one do, who does it affect, what data flows through it, and where does human judgment come in?

Most regulators now expect a live AI inventory: not a spreadsheet from two years ago, but a system that stays updated. It should clearly capture model purpose, data sources, risk exposure, and how each system is being monitored. Teams often discover things during this step that genuinely surprise them, such as sensitive data showing up in systems that were never designed to handle it. That’s exactly what the MAP function is for: it brings these gaps to the surface before they turn into real problems.
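To make the inventory idea concrete, here is a minimal Python sketch of what one inventory record and a basic freshness check might look like. The `AISystemRecord` class, its field names, the `find_gaps` helper, and the 90-day review window are all hypothetical choices for illustration; NIST does not prescribe an inventory schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI inventory (field names are illustrative)."""
    name: str
    purpose: str
    data_sources: list[str]
    risk_exposure: str            # e.g. "low", "medium", "high"
    monitoring: str               # how the system is monitored, or "none"
    handles_sensitive_data: bool
    last_reviewed: date


def find_gaps(inventory: list[AISystemRecord], today: date,
              max_age_days: int = 90) -> list[str]:
    """Flag entries whose review is stale or that hold sensitive data unmonitored."""
    findings = []
    for rec in inventory:
        if today - rec.last_reviewed > timedelta(days=max_age_days):
            findings.append(f"{rec.name}: review is stale")
        if rec.handles_sensitive_data and rec.monitoring == "none":
            findings.append(f"{rec.name}: sensitive data with no monitoring")
    return findings


inventory = [
    AISystemRecord("support-chatbot", "answer customer tickets", ["ticket_db"],
                   "medium", "weekly eval", True, date(2025, 1, 10)),
    AISystemRecord("sales-forecast", "quarterly revenue forecast", ["crm_export"],
                   "low", "none", True, date(2025, 6, 1)),
]
print(find_gaps(inventory, today=date(2025, 7, 1)))
# ['support-chatbot: review is stale', 'sales-forecast: sensitive data with no monitoring']
```

The point of the check is that "live" is testable: an inventory entry that nobody has reviewed recently, or that touches sensitive data without monitoring, surfaces automatically instead of waiting for an audit.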

MEASURE: Are the Risks You Identified Actually Showing Up?

Knowing a risk exists is one thing. Checking if it’s actually happening is another. That’s where MEASURE comes in. This step is about using real data, not assumptions. Teams test for bias, check performance, watch for model drift, and review third-party models. The March 2025 update puts more focus on where the model comes from and how clean the data is. Why this matters: most teams are using AI they didn’t build themselves. They can’t fully see how it works, so they need stronger checks in place.
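One common way teams quantify drift is the Population Stability Index (PSI), which compares how a score or feature is distributed in a baseline sample versus recent production data. The sketch below is a plain-Python illustration assuming fixed, shared bin edges; the `psi` and `bin_proportions` functions and the sample data are hypothetical, and PSI is an industry convention rather than something the AI RMF mandates.

```python
import math
from bisect import bisect_right


def bin_proportions(values: list[float], edges: list[float],
                    eps: float = 1e-6) -> list[float]:
    """Share of values per bin; out-of-range values go to the nearest end bin."""
    n_bins = len(edges) - 1
    counts = [0] * n_bins
    for v in values:
        idx = min(max(bisect_right(edges, v) - 1, 0), n_bins - 1)
        counts[idx] += 1
    total = max(len(values), 1)
    # Floor empty bins at eps so the log term below stays finite.
    return [max(c / total, eps) for c in counts]


def psi(baseline: list[float], current: list[float], edges: list[float]) -> float:
    """Population Stability Index: sum of (a - e) * ln(a / e) over bins."""
    e = bin_proportions(baseline, edges)
    a = bin_proportions(current, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]   # scores at validation time
current = [0.6, 0.7, 0.8, 0.8, 0.9, 0.9, 0.95, 1.0]  # scores in production
print(round(psi(baseline, baseline, edges), 4))  # 0.0
print(psi(baseline, current, edges) > 0.25)      # True
```

A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating, but thresholds should be tuned per model and use case.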

For teams running Secure RAG pipelines or connecting models to vector databases, this function maps directly to monitoring what enters the retrieval layer, and what the model does with it afterward.
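As a rough illustration of monitoring the retrieval layer, the sketch below screens retrieved chunks for obvious PII before they reach the model and records what it held back. The regex patterns, the `screen_chunks` function, and the sample text are hypothetical; simple patterns like these miss many PII formats and are no substitute for a dedicated detection tool.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def screen_chunks(chunks: list[str]) -> tuple[list[str], list[dict]]:
    """Split retrieved chunks into ones passed to the model and findings to log."""
    allowed, findings = [], []
    for i, chunk in enumerate(chunks):
        hits = [name for name, pat in PII_PATTERNS.items() if pat.search(chunk)]
        if hits:
            findings.append({"chunk_index": i, "pii_types": hits})
        else:
            allowed.append(chunk)
    return allowed, findings


retrieved = [
    "Refund policy: refunds are issued within 14 days.",
    "Customer jane.doe@example.com asked about SSN 123-45-6789.",
]
allowed, findings = screen_chunks(retrieved)
print(allowed)   # ['Refund policy: refunds are issued within 14 days.']
print(findings)  # [{'chunk_index': 1, 'pii_types': ['email', 'us_ssn']}]
```

The findings list is the MEASURE signal: how often sensitive content reaches the retrieval layer is something you can track over time rather than assume.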

MANAGE: What Happens When Things Break?

Every risk program eventually gets tested by something going wrong. MANAGE is about being ready for that before it happens.

This function covers mitigation strategies, incident response, and continuous monitoring. It’s genuinely ongoing work, not a box you check during implementation. As models update, data shifts, and use cases expand, the risk profile of an AI system changes. Your response capability needs to keep up.

The gap here is significant. A 2025 Pacific AI Governance Survey of 351 organizations found that fewer than 20% have dedicated AI incident reporting tools in place, even in heavily regulated industries like healthcare and finance.

That means most companies have identified their risks but haven’t built the infrastructure to respond when those risks materialize.

What the GenAI Profile Added in 2024

The original AI RMF launched before large language models and autonomous agents became mainstream enterprise tools. NIST recognized the gap fairly quickly.

In July 2024, NIST published NIST-AI-600-1, the Generative AI Profile. It extends the core framework with 12 risks that are novel to or heightened by generative systems, including confabulation (often called hallucination), information security threats such as data poisoning and prompt injection, intellectual property concerns, and over-reliance on AI outputs. The profile’s suggested actions map back to the four original functions, so organizations don’t have to build a separate framework. They layer the GenAI Profile on top of what they already have.

For enterprises deploying agents that access sensitive data, whether that’s patient records, financial data, or internal communications, this profile covers the risks that traditional AI data privacy and compliance approaches weren’t designed to handle.

What Alignment Actually Looks Like in Practice

A leading bank in the Middle East needed to deploy Google Gemini internally. Under strict PDPL data sovereignty laws, no customer data could leave the country or reach public cloud infrastructure. There was no workaround.

The bank used Protecto’s Privacy Vault to make it work: real-time tokenization at data ingestion, role-based access enforced at inference, and every interaction logged in a complete audit trail. The result was full PDPL compliance in under three weeks, with 99%+ PII and PHI detection accuracy and zero data leaving their infrastructure.

That deployment covers GOVERN, MEASURE, and MANAGE from the NIST AI risk management framework directly. Not as a side effect. By design.
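The general pattern in that deployment (tokenize at ingestion, restrict detokenization by role, log every access) can be sketched in a few lines of Python. This is a toy, in-memory illustration under stated assumptions, not Protecto’s API or implementation: `ToyVault`, the email-only pattern, and the role names are hypothetical, and a real vault would need persistent encrypted storage and far broader PII detection.

```python
import re
import secrets
from datetime import datetime, timezone

# Illustrative pattern only: this toy detects email addresses and nothing else.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


class ToyVault:
    """Hypothetical in-memory token vault; not Protecto's API or a secure design."""

    def __init__(self, allowed_roles: set[str]):
        self._store: dict[str, str] = {}   # token -> original value
        self.allowed_roles = allowed_roles
        self.audit_log: list[dict] = []

    def tokenize(self, text: str) -> str:
        """At ingestion, swap each email for an opaque random token."""
        def _swap(match: re.Match) -> str:
            token = f"<PII_{secrets.token_hex(4)}>"
            self._store[token] = match.group(0)
            return token
        return EMAIL.sub(_swap, text)

    def detokenize(self, text: str, role: str) -> str:
        """Restore original values only for permitted roles; log every request."""
        granted = role in self.allowed_roles
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": role,
            "granted": granted,
        })
        if not granted:
            return text
        for token, value in self._store.items():
            text = text.replace(token, value)
        return text


vault = ToyVault(allowed_roles={"support_agent"})
safe = vault.tokenize("Contact jane.doe@example.com about the loan.")
# `safe` holds a token such as <PII_...> in place of the email; this is what the model sees.
print(vault.detokenize(safe, role="marketing"))      # still tokenized
print(vault.detokenize(safe, role="support_agent"))  # Contact jane.doe@example.com about the loan.
print([entry["granted"] for entry in vault.audit_log])  # [False, True]
```

Each piece lines up with a function: the role check is a GOVERN decision made executable, and the audit log gives MEASURE and MANAGE something concrete to review after an incident.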

A Fortune 100 enterprise used Protecto’s Context-Based Access Control (CBAC) across multi-agent workflows. The result was enforced, role-specific access for over 50,000 users, with agents reaching only the data their context permitted.

Is “Voluntary” Still a Meaningful Word?

Technically yes. Practically, the answer gets more complicated each year.

The White House AI Action Plan, released in July 2025, named NIST in a significant number of its recommended policy actions. The EU AI Act is in phased enforcement heading into 2026, and many companies use NIST AI RMF as the operational layer for EU AI Act readiness. Principles from the OECD and G7, along with standards from ISO/IEC JTC 1/SC 42, align closely with the NIST AI RMF.

For organizations in healthcare, financial services, or government contracting, demonstrating alignment with the NIST AI risk management framework is showing up as a procurement requirement. It’s not just about regulatory risk. It’s about being taken seriously as a vendor in regulated industries.

Frequently Asked Questions

What is the NIST AI risk management framework in plain terms?

It’s a structured guide published by the U.S. government to help organizations manage risks from building and deploying AI. It’s built around four functions: Govern, Map, Measure, and Manage. It’s voluntary, works across any industry, and doesn’t tell you which tools to use. It gives you a structure for asking the right questions throughout the AI lifecycle.

Is the NIST AI RMF mandatory?

Not legally for most private-sector companies. But Colorado’s AI Act references it directly as a basis for legal defense, and regulators in healthcare, finance, and government increasingly treat it as a compliance benchmark. For regulated industries, ignoring it is becoming a harder position to justify.

How does the NIST AI risk management framework apply to generative AI?

NIST published the GenAI Profile in July 2024 specifically to address LLMs and other generative systems. It covers 12 risks that are unique to or heightened by these systems, including hallucination, prompt injection, and data poisoning, with suggested actions mapped back to the four core functions of the original NIST AI RMF.

What is the difference between NIST AI RMF and ISO 42001?

The NIST AI RMF focuses specifically on identifying and reducing AI-related risks across the AI lifecycle. ISO/IEC 42001 is a broader management system standard covering how an organization establishes, operates, and improves its overall AI governance program, and unlike the NIST AI RMF, organizations can be certified against it. They work well together, and many enterprises pursue alignment with both simultaneously.