Artificial intelligence is no longer limited to research labs. Businesses across industries now use AI to automate decisions, analyze data, and build intelligent products. Before implementing AI systems, however, organizations must decide where their AI models will run.

This raises an important question: should AI systems be deployed on-premises or in the cloud? Deciding between cloud AI inference and on-premises deployment is not just about cost. It is largely about safety.

In this guide, we will look at the differences between cloud and on-premises AI model deployment to help you decide which one is safer for your data.

Understanding AI Deployment Models

When deciding between on-premises AI and cloud AI, it is important to understand how the two deployment models work. Let us look at both.

What Is On-Premise AI?

On-premises AI refers to deploying AI infrastructure within a company’s data center or on private servers. The organization manages the hardware, storage, networking, and security.

This means the AI models, training data, and inference pipelines remain within internal infrastructure. In fact, several sectors, such as finance, healthcare, and government, prefer on-premises AI because they handle sensitive or regulated data.

Common characteristics include:

- Full control over infrastructure and data

- AI models hosted on internal servers

- Custom security configurations

- Limited reliance on third-party providers

What Is Cloud AI?

Cloud AI refers to deploying AI systems on infrastructure provided by cloud platforms such as AWS, Google Cloud, or Microsoft Azure. Instead of owning hardware, companies access computing resources through the internet.

Cloud AI is widely used because it reduces infrastructure costs and simplifies AI development. Key features include:

- AI infrastructure managed by cloud providers

- Flexible computing power

- Fast scaling for training or inference

- Integrated machine learning services

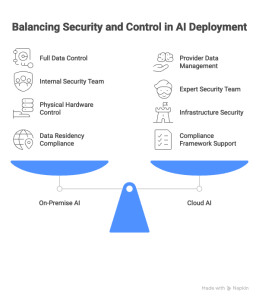

Key Security Differences Between On-Prem AI and Cloud AI

Understanding how cloud and on-premises AI deployments differ on security helps organizations in the United States make informed decisions about risk.

1. Data Control and Ownership

In an on-premise setup, you are the boss. You decide who can enter the server room. You decide which firewall to use. For companies handling highly sensitive information, this feels safer.

Because the data never leaves the building, there is less chance of someone “sniffing” the data while it travels over the internet.

2. Expert Security

Major cloud AI providers invest heavily in security and employ large, dedicated teams that monitor for attacks around the clock. Most small or medium businesses cannot afford that level of expertise.

This is one of the major differences between cloud AI inference and on-premises deployment. In the cloud, you get “bank-level” security, but you have to trust the provider.

3. Infrastructure Protection

AI models now rely heavily on GPUs, storage systems, and large-scale computing infrastructure.

On-premises deployments allow organizations to:

- Physically control hardware

- Monitor internal networks

- Apply custom security policies

Cloud platforms, however, invest heavily in infrastructure security. Gartner predicts that more than 70% of enterprises will use industry cloud platforms by 2027. For organizations without dedicated security staff, cloud environments may actually offer stronger baseline infrastructure protection.

4. Compliance and Regulations

Regulatory compliance is another major factor when choosing between cloud AI inference and on-premises deployment. Industries such as healthcare, finance, and defense must follow strict compliance standards.

On-premise deployments may help organizations meet requirements related to:

- Data residency

- Internal audit controls

- Restricted network environments

However, many cloud providers now support compliance frameworks, including:

- HIPAA

- SOC 2

- ISO 27001

- FedRAMP in the United States

Organizations must evaluate whether regulatory requirements allow cloud deployments.

Cloud AI Inference vs On-Premise Deployment Differences

Inference is the step where a trained AI model makes a prediction or answers a question.

When comparing cloud AI inference with on-premises deployment, speed and safety are the main topics.

- Cloud inference: Cloud platforms offer massive computing power, so the model itself responds quickly. But your data must travel to the cloud and back, which adds network latency, and that time in transit is a window in which attackers might try to intercept it.

- On-premises inference: It happens locally. It might be slower if your hardware is old, but the data stays behind your own walls.

Many companies now use data tokenization to reduce this risk. Tokenization replaces highly sensitive data with a “token”, or placeholder, and keeps the mapping back to the real value in a separate, protected store. Even if a hacker steals the token during cloud inference, they cannot read the real information without access to that store. This makes the cloud much safer for AI.
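To make the idea concrete, here is a minimal sketch of tokenization before a cloud inference call. The `TokenVault` class, the `build_cloud_payload` helper, and the field names are all hypothetical and exist only for illustration. A production system would use a hardened, access-controlled vault service rather than an in-memory dictionary.

```python
import secrets


class TokenVault:
    """Toy in-memory token vault (hypothetical, for illustration only)."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to the same placeholder.
        if value in self._value_to_token:
            return self._value_to_token[value]
        # The token is random, so it reveals nothing about the original value.
        token = "tok_" + secrets.token_hex(8)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Only code with access to the vault can recover the original value.
        return self._token_to_value[token]


def build_cloud_payload(record: dict, sensitive_fields: set, vault: TokenVault) -> dict:
    """Return a copy of the record with sensitive fields replaced by tokens."""
    return {
        key: vault.tokenize(value) if key in sensitive_fields else value
        for key, value in record.items()
    }


vault = TokenVault()
record = {"name": "Jane Doe", "ssn": "123-45-6789", "question": "What is my balance?"}

# Only this tokenized copy would leave the building; the vault stays on-premises.
payload = build_cloud_payload(record, {"name", "ssn"}, vault)
print(payload)
```

In this sketch the vault never leaves your own infrastructure, so anyone who intercepts the payload sees only placeholders such as `tok_...` in the sensitive fields.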

Is One Model Truly “Safer”?

There is no simple answer to whether cloud or on-premises AI model deployment is safer.

If you are a giant bank with a huge budget for security guards and IT experts, the choice usually tips toward on-premises. You have total physical and digital control.

If you are a growing company that wants the best tools without building a data center, cloud AI is likely safer because the providers (like AWS or Azure) have better defenses than you could build yourself.

How to Choose for Your Business?

To decide between cloud AI inference and on-premises deployment, ask yourself these three questions:

- How sensitive is my data? If you have government secrets, go on-premises. If you have customer emails, the cloud is fine as long as you use data tokenization.

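- Do I have an IT team? If you don’t have experts to patch and update servers regularly, on-premises is actually less safe. In the cloud, the provider patches the underlying infrastructure, although you remain responsible for configuring your own accounts and access controls.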

- What are the laws? Check the rules that apply to your specific industry in the United States. Some regulations restrict where data may be stored and may effectively require on-premises storage.
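The three questions above can be sketched as a small decision helper. This is only an illustration: the `Workload` fields, the `recommend_deployment` function, and the order in which the questions are applied are assumptions, not a formal methodology. The "needs review" result for sensitive data without an IT team is also an assumption, since the questions pull in opposite directions in that case.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    sensitivity: str            # "high" (e.g. government secrets) or "low" (e.g. customer emails)
    has_it_team: bool           # can someone patch and update servers regularly?
    law_requires_on_prem: bool  # does a regulation mandate on-premises storage?


def recommend_deployment(w: Workload) -> str:
    # A legal requirement overrides every other consideration.
    if w.law_requires_on_prem:
        return "on-premises"
    if w.sensitivity == "high":
        # Highly sensitive data favors on-premises, but only if the servers will be maintained.
        return "on-premises" if w.has_it_team else "needs review"
    # Lower-sensitivity data can go to the cloud with tokenization applied.
    return "cloud with tokenization"


print(recommend_deployment(Workload("low", has_it_team=False, law_requires_on_prem=False)))
```

A real evaluation would weigh more factors, such as cost and scalability, but the sketch shows how a legal requirement can short-circuit the other two questions.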

The Hybrid Approach: The Best of Both Worlds?

Many smart companies do not choose just one. They use a “Hybrid” model. They keep the most sensitive data on-premises and use the cloud for large-scale tasks that don’t require private information.

The hybrid model relies on an AI data governance framework to decide which data may move to the cloud and to move it safely back and forth. This way, you get the power of the cloud and the locked-door safety of on-premises.
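As a rough sketch of how a hybrid setup might route work, the example below labels each record and sends it to one side or the other. The `SENSITIVE_FIELDS` policy and the `classify` and `route` functions are hypothetical. A real governance framework would use a much richer classification policy than a list of field names.

```python
# Hypothetical policy: any record containing one of these fields is treated as restricted.
SENSITIVE_FIELDS = {"ssn", "diagnosis", "account_number"}


def classify(record: dict) -> str:
    """Label a record 'restricted' if it contains any sensitive field, else 'general'."""
    if any(field in record for field in SENSITIVE_FIELDS):
        return "restricted"
    return "general"


def route(record: dict) -> str:
    """Pick the deployment target for a record based on its classification."""
    return "on_prem" if classify(record) == "restricted" else "cloud"


requests = [
    {"question": "Summarize this public press release"},
    {"question": "Explain this lab result", "diagnosis": "example"},
]

for request in requests:
    # Restricted records stay on internal servers; everything else may use cloud capacity.
    print(route(request), "<-", request["question"])
```

The design choice here is to classify first and route second, so the policy can change without touching the routing logic.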

Conclusion

The debate over on-premises AI versus cloud AI will continue as technology improves. Currently, the “safest” model is the one that you can manage best.

If you use the cloud, make sure to use tools such as data tokenization and agentic data classification. These tools act like a shield for your information. If you go on-premises, make sure you have a physical security plan and a way to keep your software patched against new threats.

Understanding the differences between cloud AI inference and on-premises deployment is the first step toward a smart AI strategy. In the United States, where data privacy is a growing concern, taking the time to pick the right model is worth the effort.

Safety is not just about where the server sits. It is about the rules you set, the tools you use to protect data, and the people you trust to manage it. Whichever deployment model you choose, your goal should always be to keep your customers’ trust while using the power of AI.

Frequently Asked Questions

What are the differences between cloud AI inference and on-premises deployment?

The major differences between cloud AI inference and on-premises deployment are scalability, data privacy, infrastructure control, and where AI models process predictions in production environments.

How does infrastructure control differ between on-premises AI and cloud AI?

On-premises deployments allow organizations to fully control servers, hardware, and networks, while cloud deployments rely on infrastructure managed by cloud providers.

What scalability benefits does cloud AI inference offer over on-premises deployment?

A major advantage of cloud AI inference over on-premises deployment is that cloud platforms can scale computing power on demand to support large AI workloads.

How should organizations choose between on-premises AI and cloud deployment?

Organizations should consider the sensitivity of their data, their compliance requirements, infrastructure costs, and scalability needs when deciding between on-premises and cloud AI deployment models.