Protecto, a leader in data security and privacy solutions, is excited to announce new capabilities that protect sensitive enterprise data, such as personally identifiable information (PII) and protected health information (PHI), and block toxic content, such as insults and threats, within Databricks environments. This enhancement is pivotal for organizations relying on Databricks to develop the next generation of Generative AI (Gen AI) applications.

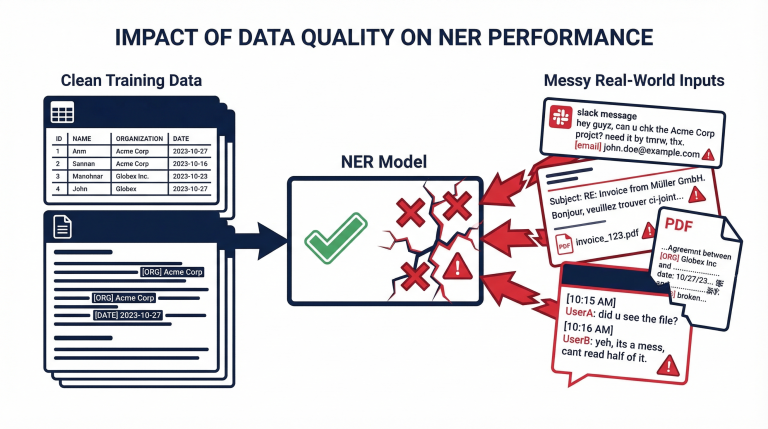

Data security, privacy, and safety concerns have been significant roadblocks for Gen AI initiatives. Protecto addresses these challenges head-on with APIs that handle both structured and unstructured data, mitigating security and privacy risks across context data, LLM (large language model) responses, and user prompts. This comprehensive approach lets enterprises innovate confidently, knowing their sensitive information is safeguarded.

A key differentiator of Protecto’s solution is its technology for keeping data understandable to LLMs even after sensitive data such as PHI has been masked. As a result, Gen AI applications remain secure and compliant without compromising accuracy. Protecto also integrates with Databricks through native connectors that read and securely use data within the platform.

For companies operating in regulated industries, the security and privacy controls in Gen AI applications are often not mature enough to meet stringent compliance requirements. Protecto’s robust security and privacy controls provide the safeguards needed to protect sensitive data, so organizations can keep innovating without taking on compliance risk.

“At Protecto, we understand the paramount importance of data security and privacy in the development of Gen AI applications,” emphasized Baskaran Alagarsamy, CTO of Protecto. “Our latest offerings protect sensitive data and ensure that applications remain accurate and compliant, which is crucial for regulated industries such as banking and healthcare. We are proud to support our customers as they innovate securely and efficiently.”

For more information about Protecto and its new data security and safety guardrails for Gen AI apps in Databricks, please visit www.protecto.ai.

About Protecto.ai

Protecto (www.protecto.ai) is a leading provider of data security and privacy solutions, specializing in safeguarding sensitive information across Gen AI applications. Its AI-driven solutions help organizations secure their data, ensure compliance, and innovate safely with Generative AI while preserving the accuracy of large language models (LLMs).