Ally Financial Partners with LangChain to Launch Revolutionary PII Masking Module on Ally.ai Platform

Ally Financial, the largest digital-only bank in the US and a prominent auto lender, has joined forces with LangChain to release a PII (Personal Identifying Information) Masking module that addresses a critical challenge for AI developers who work with sensitive customer data. The collaboration is a significant step toward compliant and secure handling of personal information in highly regulated fields, including finance, healthcare, and retail.

The Genesis of the PII Masking Module

The PII Masking module, accessible [here](#), is a product of Ally Financial’s cloud-based AI platform, Ally.ai. Launched in September 2023, Ally.ai is a secure interface connecting Ally’s data with commercially available LLMs (Large Language Models). The platform already helps more than 700 customer care associates summarize their conversations with customers.

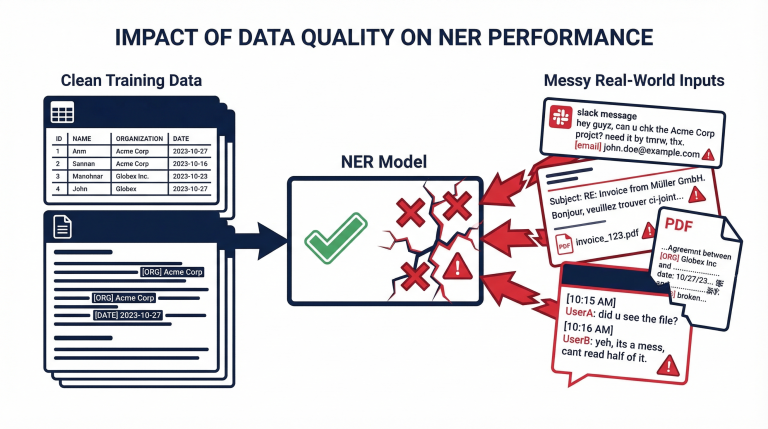

Ally’s developers, supported by LangChain, introduced the PII Masking module to safeguard PII when LLMs summarize customer conversations. The module masks various forms of PII, including names, email addresses, and account numbers, and gives organizations that handle customer PII a starting point for building generative AI applications that prioritize data protection.

Impact on Efficiency and Business Processes

The implementation of Ally.ai, fortified with the PII Masking module, has yielded impressive results for Ally Financial. The platform has saved between 30 seconds and two minutes per customer call, enabling associates to expedite customer call summaries. Remarkably, over 85% of call summaries generated by Ally.ai require no additional edits from associates, underscoring the platform’s strength and efficiency.

Associates have reported that Ally.ai’s summarizations have allowed them to concentrate on meaningful customer interactions, reducing the time needed to document conversations and switch between screens. The success of Ally.ai has prompted the Ally team to explore nearly 200 AI use cases throughout the enterprise.

Contributions to LangChain and Open Source Initiative

In navigating the highly regulated banking sector, Ally prioritizes security and compliance. To meet these stringent requirements, Ally’s developers integrated PII filtering into their LangChain code so that no sensitive data is transmitted to LLMs. The filtering applies in both directions of the exchange with the LLM, before data is sent and after the response comes back, and this two-way handling is the basis of the PII Masking module.
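The general idea of masking before the LLM call and restoring afterwards can be sketched with the Python standard library alone. This is an illustration, not the code Ally contributed to langchain-community: the function names (`mask_pii`, `unmask_pii`, `summarize_safely`), the placeholder format, and the two regular expressions are all assumptions made for this example, and restoring the original values in the response is only one plausible reading of the "after" step. Names, which the real module also masks, cannot be detected reliably with patterns like these and are left out.

```python
import re
from typing import Callable, Dict, Tuple

# Illustrative patterns only (assumptions for this sketch).
# Real PII detection needs much broader coverage, including names.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "ACCOUNT": re.compile(r"\b\d{8,12}\b"),
}


def mask_pii(text: str) -> Tuple[str, Dict[str, str]]:
    """Replace each PII match with a placeholder; return masked text and mapping."""
    mapping: Dict[str, str] = {}

    for label, pattern in PII_PATTERNS.items():
        def _substitute(match: "re.Match[str]", label: str = label) -> str:
            value = match.group(0)
            # Reuse the same placeholder when a value appears more than once.
            for token, original in mapping.items():
                if original == value:
                    return token
            token = f"<{label}_{len(mapping) + 1}>"
            mapping[token] = value
            return token

        text = pattern.sub(_substitute, text)

    return text, mapping


def unmask_pii(text: str, mapping: Dict[str, str]) -> str:
    """Put the original values back in place of their placeholders."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


def summarize_safely(text: str, llm: Callable[[str], str]) -> str:
    """Mask PII, call the LLM on the masked text only, then restore the values."""
    masked, mapping = mask_pii(text)
    response = llm(masked)  # the LLM never sees the raw PII
    return unmask_pii(response, mapping)
```

Here `llm` is any callable that takes a prompt and returns text, so the same wrapper works with a stub in tests or with a real model client. Keeping the mapping local to a single request means the original values never leave the caller's process.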

The success of Ally’s approach to PII filtering has led them to contribute their code back to the LangChain community. The open-sourced code, available in the langchain-community package, aims to benefit other financial institutions and companies operating in regulated industries. This collaborative spirit emphasizes Ally’s commitment to responsible AI practices.

The Road Ahead for Ally Financial and LangChain

Encouraged by Ally.ai’s initial success, the Ally team is pursuing almost 200 AI use cases, exploring opportunities to optimize employee productivity and enhance business processes. While not all use cases may reach production, Ally’s commitment to leveraging AI capabilities responsibly remains steadfast.

Ally Financial’s collaboration with LangChain is a testament to the transformative potential of responsible AI practices. As the developer community continues to evolve, Ally’s contribution and collaboration with LangChain set a positive example for organizations aiming to harness AI’s power to benefit consumers and the community.

C3 Voice Assistant: Revolutionizing Accessibility in AI with LlamaIndex and GPT-3.5

Accessibility has taken center stage in artificial intelligence with the launch of the C3 Voice Assistant, a project spearheaded by its creator. This voice-activated assistant aims to improve accessibility for a diverse audience, particularly people who face typing challenges or other accessibility issues. The C3 Voice Assistant combines Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) to deliver a seamless and user-friendly experience.

Key Features of the C3 Voice Assistant

- Voice Activation: The C3 Voice Assistant introduces an innovative voice activation feature initiated by the wake word “C3.” Alternatively, users can click a blue ring to activate the listening mode of the app. The wake word is configurable, allowing users to choose a preferred activation term.

- Universal Accessibility: Tailored for users who prefer voice commands or encounter typing challenges, the C3 Voice Assistant aims to provide a universally accessible interface.

- LLM Integration: The assistant handles both general queries and document-specific questions, for example about Nvidia’s FY 2023 10-K report, showcasing the integration of powerful Large Language Models.

- User-Friendly Interface: The interface prioritizes simplicity and ease of use, focusing on voice chat interactions. Developed using React.js, the layout is minimalistic and features a convenient sidebar displaying the entire chat history in text format.
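The configurable wake word described in the feature list can be illustrated with a small, framework-independent sketch. It assumes speech has already been transcribed to text; the function name `extract_command` and its behavior are hypothetical and are not taken from the project's React code.

```python
from typing import Optional


def extract_command(transcript: str, wake_word: str = "C3") -> Optional[str]:
    """Return the text spoken after the wake word, or None if it was not said."""
    words = transcript.strip().split()
    # Compare case-insensitively and ignore punctuation attached to a word.
    normalized = [word.strip(".,!?").lower() for word in words]
    target = wake_word.lower()

    if target not in normalized:
        return None  # stay idle: the wake word was not detected

    index = normalized.index(target)
    # Everything after the wake word is treated as the user's request.
    return " ".join(words[index + 1:])
```

For example, `extract_command("Hey C3, summarize the report")` returns `"summarize the report"`, while a transcript without the wake word returns `None`. Passing a different `wake_word` mirrors the configurable activation term.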

Tech Stack Overview

The C3 Voice Assistant is built on a versatile tech stack to ensure a smooth and efficient user experience.

- Frontend: The user interface is a custom application developed using React.js, emphasizing a minimalistic and highly functional design for accessibility.

- Backend: A Python FastAPI server powers the server-side operations, scaffolded with the ‘create-llama’ tool from LlamaIndex to streamline development.

- Hosting: The frontend is hosted on Vercel, while the backend is deployed on Render, ensuring optimal performance and efficient server-side task management.

The frontend, developed with React.js, focuses on user interaction and accessibility, incorporating several vital components.

Setting up the backend involves initializing create-llama, selecting its Python FastAPI backend, and configuring the environment. The server allows CORS requests from all origins, making it adaptable to different environments.

Integration

Integrating the backend with the frontend is straightforward. To point the frontend at your backend server, update the server URL in the fetchResponseFromLLM function in App.js.

Final Thoughts

The C3 Voice Assistant transcends being a mere tech showcase; it represents a significant stride toward democratizing AI. By making powerful AI tools accessible and user-friendly, the project breaks down tech barriers, empowering a wider audience. Beyond lines of code, the C3 Voice Assistant is a glowing testament to the transformative potential of AI in fostering inclusivity and accessibility.