Artificial intelligence (AI) is now part of routine business processes in many organizations. As these systems take on more critical work, securing them has become correspondingly more important.

Here, we explore the main aspects of AI security: why it matters and the particular challenges organizations face in safeguarding their AI systems. Understanding how AI and security interact is essential, because the potential benefits of AI come hand-in-hand with the responsibility to mitigate security risks.

Understanding AI Security

AI security is the practice of safeguarding artificial intelligence systems against a spectrum of threats and risks.

At its core, AI security involves protective measures to ensure the availability, integrity, and confidentiality of AI systems. It spans the entirety of AI, from machine learning algorithms to complex neural networks, aiming to fortify these technologies against malicious exploits.

The landscape of AI security is fraught with diverse threats and risks. These can range from adversarial attacks seeking to manipulate AI models to vulnerabilities in the algorithms themselves, posing challenges in maintaining the reliability and trustworthiness of AI systems.

Unlike traditional software systems, AI introduces unique challenges due to its self-learning capabilities and complexity. The iterative nature of machine learning and its dependence on vast datasets demand specialized security measures to mitigate risks effectively.

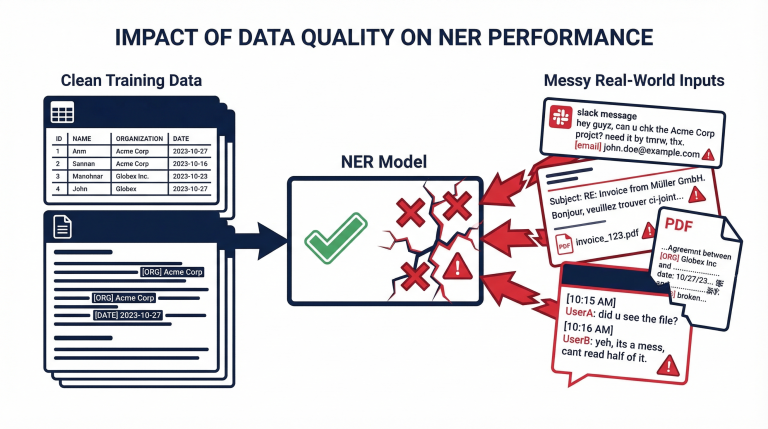

Data Security in AI Systems

Ensuring robust data security within AI systems is paramount to safeguarding sensitive information and maintaining the integrity of AI processes.

Ensuring Confidentiality of Training Data

The cornerstone of data security in AI lies in preserving the confidentiality of training data. Encryption emerges as a foundational practice, providing a shield against unauthorized access. Secure storage protocols further fortify data repositories, minimizing the risk of data breaches.

- Encryption and Secure Storage: Implementing robust encryption mechanisms is a formidable barrier, rendering data indecipherable to unauthorized entities. Concurrently, secure storage solutions bolster data protection, preventing unauthorized extraction or tampering.

- Access Control Mechanisms: Encryption alone is not enough; stringent access control mechanisms are also crucial. By restricting data access according to predefined roles and permissions, organizations can block unauthorized attempts and protect the confidentiality of their AI training data.
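One part of the secure-storage point above, detecting tampering with stored training data, can be sketched with Python's standard library. This is a minimal illustration rather than a complete storage design: it covers integrity only (not encryption), and the key, the sample data, and the function names `sign_dataset` and `verify_dataset` are assumptions made for the example.

```python
import hashlib
import hmac


def sign_dataset(data: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag computed over the dataset bytes."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_dataset(data: bytes, key: bytes, expected_tag: str) -> bool:
    """Recompute the tag and compare it to the stored one in constant time."""
    return hmac.compare_digest(sign_dataset(data, key), expected_tag)


# Hypothetical key and data, for illustration only.
# In practice the key would be held in a secrets manager, not in source code.
key = b"example-key-not-for-production"
training_data = b"label,feature\n1,0.42\n0,0.17\n"

# Store the tag alongside the dataset when it is written.
tag = sign_dataset(training_data, key)

# Before training, check that the data still matches the stored tag.
print(verify_dataset(training_data, key, tag))               # unchanged data
print(verify_dataset(training_data + b"0,0.99\n", key, tag))  # modified data
```

Because the tag depends on a secret key, someone who can modify the stored file but does not hold the key cannot produce a matching tag for the altered data.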

Addressing Privacy Concerns in AI Data Usage

Privacy concerns loom large in the realm of AI data usage. Anonymization and pseudonymization emerge as pivotal techniques, offering a delicate balance between data utility and individual privacy.

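- Anonymization and Pseudonymization: Both techniques strip or alter personally identifiable information (PII) so that records cannot be directly associated with individuals. Anonymization removes identifying details irreversibly, while pseudonymization replaces them with substitute values that can be linked back to a person only with separately held information. Applied carefully, these practices support compliance with privacy regulations, allowing organizations to use data for AI training while reducing the risk of privacy infringements.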

- Compliance with Data Protection Regulations: As custodians of sensitive information, organizations must navigate a complex web of data protection regulations. Aligning AI data practices with these regulations ensures legal compliance and fosters a culture of responsible and ethical AI usage.
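As a rough sketch of pseudonymization, the example below drops direct identifiers from a record and replaces a user ID with a keyed hash, so the same person maps to the same token without the raw ID appearing in the training set. The field names, the key, and the `pseudonymize` helper are hypothetical, and a keyed hash is only one of several possible approaches.

```python
import hashlib
import hmac

# Hypothetical field names treated as direct identifiers in this example.
DIRECT_IDENTIFIERS = {"name", "email"}


def pseudonymize(record: dict, key: bytes, id_field: str = "user_id") -> dict:
    """Drop direct identifiers and replace the ID with a keyed-hash token."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    raw_id = str(record[id_field]).encode("utf-8")
    cleaned[id_field] = hmac.new(key, raw_id, hashlib.sha256).hexdigest()
    return cleaned


# Illustrative key and record; the key must be stored separately from the data.
key = b"example-pseudonymization-key"
record = {"user_id": "u-1001", "name": "Ada Example",
          "email": "ada@example.com", "age_band": "30-39"}

safe_record = pseudonymize(record, key)
print(sorted(safe_record))  # remaining field names: age_band and user_id
```

Whoever holds the key can regenerate the token for a known ID, which is why the output counts as pseudonymized rather than anonymized data.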

Model Security and Robustness

Securing the models themselves is a critical dimension of comprehensive AI security, requiring a proactive approach to development practices and resilience against adversarial threats.

Secure Model Development Practices

The journey to model security begins in the development phase, necessitating a meticulous adherence to secure coding standards and robust architecture.

- Code Review and Secure Coding Standards: Rigorous code reviews and adherence to secure coding standards form the bedrock of secure model development. Regular scrutiny ensures the identification and elimination of vulnerabilities, minimizing the risk of exploitation.

- Implementing Secure Model Architecture: The architecture of an AI model plays a pivotal role in its security. Implementing secure design principles involves minimizing attack surfaces, employing proper authentication mechanisms, and incorporating fail-safe measures to enhance resilience against potential threats.

Adversarial Attacks and Defenses

Acknowledging the susceptibility of AI models to adversarial attacks, organizations must implement defenses beyond conventional security measures.

- Understanding Adversarial Threats: Adversarial threats capitalize on vulnerabilities in AI models, manipulating inputs to induce misclassification or erroneous outputs. Understanding the nuances of these threats is fundamental to developing effective defense strategies.

- Implementing Robustness Measures: Building model robustness involves deploying techniques that help a model withstand adversarial manipulation. This may include adversarial training, in which models are exposed to deliberately crafted adversarial examples during training to enhance their resilience.
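To make the idea of an adversarial input concrete, here is a small sketch using the fast gradient sign method (FGSM) against a toy logistic-regression classifier. FGSM is one well-known way to craft such inputs and is chosen here only for illustration; the weights, the input, and the step size `eps` are made-up values.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w: list, b: float, x: list) -> float:
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def fgsm(w: list, b: float, x: list, y: int, eps: float) -> list:
    """Move each feature by eps in the direction that increases the loss."""
    p = predict_proba(w, b, x)
    # Gradient of the cross-entropy loss with respect to the input x.
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


# Toy model and input (illustrative values only).
w, b = [2.0, -1.0], 0.0
x, y = [0.5, 0.2], 1

x_adv = fgsm(w, b, x, y, eps=0.5)
print(predict_proba(w, b, x) > 0.5)      # original input is classified as class 1
print(predict_proba(w, b, x_adv) > 0.5)  # perturbed input is no longer class 1
```

In adversarial training, inputs such as `x_adv` would be added to the training set with their correct labels so the model learns to classify them properly.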

By emphasizing secure development practices and robustness against adversarial threats, organizations can fortify their AI models against potential exploits, thereby enhancing the overall security posture of AI systems.

Secure Deployment of AI Models

The secure deployment of AI models is a pivotal phase, ensuring that the resilience cultivated during development translates seamlessly into real-world applications.

The environment in which an AI model operates significantly influences its security. Organizations must adopt measures to guarantee the security of deployment environments.

- Containerization and Virtualization Best Practices: Containerization and virtualization provide isolated environments, reducing the impact of potential security breaches. By encapsulating the AI model and its dependencies, organizations create a portable and more secure deployment ecosystem.

- Securing Cloud-based AI Services: As cloud services become integral to AI deployment, ensuring the security of cloud environments is imperative. Employing encryption, strict access controls, and continuous monitoring mitigates potential risks associated with cloud-based AI services.

In navigating the deployment landscape, organizations must consider the internal security of AI models and the broader context in which these models operate.

Access Controls and Authentication

Effectively managing access to AI systems is critical to overall security, demanding robust controls and authentication measures to safeguard against unauthorized usage.

Instituting role-based access controls (RBAC) is fundamental in orchestrating a secure AI environment. RBAC ensures that individuals within an organization are granted access based on their roles, limiting permissions to the minimum necessary for their responsibilities.
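A role-to-permission mapping of this kind might look like the sketch below. The role names, the permissions, and the `is_allowed` helper are invented for illustration; a production system would normally rely on its identity provider or platform policy engine rather than an in-code table.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_DATASET = auto()
    TRAIN_MODEL = auto()
    DEPLOY_MODEL = auto()


# Hypothetical roles, each granted only the permissions it needs.
ROLE_PERMISSIONS = {
    "data_scientist": {Permission.READ_DATASET, Permission.TRAIN_MODEL},
    "ml_engineer": {Permission.DEPLOY_MODEL},
    "auditor": {Permission.READ_DATASET},
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles receive no permissions."""
    return permission in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("data_scientist", Permission.TRAIN_MODEL))  # True
print(is_allowed("auditor", Permission.DEPLOY_MODEL))        # False
print(is_allowed("intern", Permission.READ_DATASET))         # False
```

The deny-by-default lookup is the key design choice: a role that is missing from the table gets nothing, so a configuration gap fails closed rather than open.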

- Multi-Factor Authentication for AI Systems: Multi-factor authentication (MFA) strengthens security wherever users interact with AI systems. By requiring users to present more than one form of identification, such as a password and a biometric, organizations add a layer of defense against unauthorized access.

- Securing APIs and Communication Channels: AI systems often rely on APIs for interaction, so securing these communication channels is paramount. Encrypting traffic with TLS (for example, by serving APIs over HTTPS) protects data in transit against eavesdropping and interception.
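As one illustration of a second authentication factor, the sketch below implements time-based one-time passwords (TOTP, RFC 6238, built on HOTP from RFC 4226), the scheme used by common authenticator apps. The text above mentions passwords and biometrics; TOTP is used here only because it is easy to show in a few lines. The shared secret is the RFC test value, not something to reuse.

```python
import hashlib
import hmac
import struct
import time


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: float = None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify_totp(secret: bytes, submitted: str, at: float = None) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    return hmac.compare_digest(totp(secret, at), submitted)


# Shared secret from the RFC test vectors (illustrative only).
secret = b"12345678901234567890"
print(totp(secret, at=59))  # code for the 30-second window containing t=59
```

A real deployment would also rate-limit attempts and usually accept codes from an adjacent time window to tolerate clock drift; both are left out of this sketch.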

In an ever-evolving cybersecurity landscape, sound access controls and authentication mechanisms are the first line of defense against potential breaches.

Vendor and Supply Chain Security

Ensuring the security of AI systems extends beyond organizational boundaries, requiring a diligent approach to evaluating and fortifying relationships with vendors and managing the complexities of the AI supply chain.

Collaborations with external vendors introduce additional considerations in AI security. Organizations must meticulously assess the security postures of their vendors, ensuring alignment with stringent security standards.

- Securing the AI Supply Chain: Understanding the intricacies of the AI supply chain is pivotal. From data acquisition to model development tools, every component introduces potential vulnerabilities. A comprehensive approach involves vetting and validating each link in the supply chain to minimize risks.

- Best Practices for Third-Party Risk Management: Implementing robust third-party risk management practices is imperative. This includes conducting thorough security assessments of vendors, establishing clear security expectations through contracts, and maintaining ongoing oversight to address emerging risks.
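One concrete way to validate a link in the supply chain is to check a third-party artifact, such as a downloaded model file, against a digest recorded from a trusted source before using it. The sketch below assumes the pinned digest was obtained through a separate trusted channel; the artifact bytes and helper names are placeholders.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the artifact bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Accept the artifact only if its digest matches the pinned value."""
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())


# Placeholder bytes standing in for a vendor-supplied model file.
trusted_copy = b"model-weights-v1"
pinned = sha256_hex(trusted_copy)  # recorded once, from a trusted source

downloaded = b"model-weights-v1"
tampered = b"model-weights-v1-with-backdoor"

print(verify_artifact(downloaded, pinned))  # True
print(verify_artifact(tampered, pinned))    # False
```

A digest check only proves that the file matches what was pinned. It says nothing about whether the pinned artifact itself is safe, which is why vendor assessment is still needed.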

As organizations increasingly rely on external entities for various components of their AI ecosystem, adopting proactive measures in evaluating, securing, and managing vendor relationships and the broader AI supply chain is crucial.

Employee Training and Awareness

Recognizing employees’ pivotal role in maintaining AI security, organizations must prioritize comprehensive training programs and foster a culture of heightened awareness to mitigate human-related risks.

Employees are both contributors to and potential vulnerabilities in AI security. Acknowledging the human element is critical, as inadvertent actions or oversights can impact security posture.

- Training Employees on AI Security Best Practices: Providing targeted training programs equips employees with the knowledge and skills necessary to navigate AI security challenges. This includes understanding the importance of secure coding practices, recognizing social engineering attempts, and adhering to established security protocols.

- Creating a Culture of Security Awareness: Establishing a culture of security awareness is fundamental. Organizations should foster an environment where employees prioritize security, report potential threats promptly, and actively participate in maintaining the security integrity of AI systems.

By investing in employee training and encouraging a culture of security awareness, organizations can strengthen their defenses against internal threats and improve the overall resilience of their AI security framework.

Final Thoughts

In navigating the intricate landscape of AI security, organizations find themselves at the forefront of a continuous and adaptive journey. Leadership is pivotal in shaping this journey and instilling a proactive approach to AI security.

Leaders must champion the cause of AI security, advocating for integrating security considerations at every phase of AI development, deployment, and maintenance.

AI security is not a static endeavor but a dynamic, ever-evolving process. Leaders must emphasize the need for continuous adaptation to emerging threats, ensuring that security measures remain robust and effective.

Proactivity is vital to AI security. Leaders should foster a proactive mindset among teams, encouraging the identification and mitigation of potential risks before they materialize into security incidents.

As organizations move into an AI-driven future, leadership plays a critical role in steering the course of AI security, embracing its dynamic nature, and championing a proactive stance to safeguard the integrity of AI systems.