Protecto.ai Ensures Compliance with India Data Sovereignty

India’s data protection laws are evolving to safeguard the privacy of its citizens. One crucial aspect is the requirement that Personally Identifiable Information (PII) remain within India’s borders for processing. This data residency requirement poses a challenge for businesses that want to use powerful large language models (LLMs), such as those offered by OpenAI, which often process data in data centers outside India.

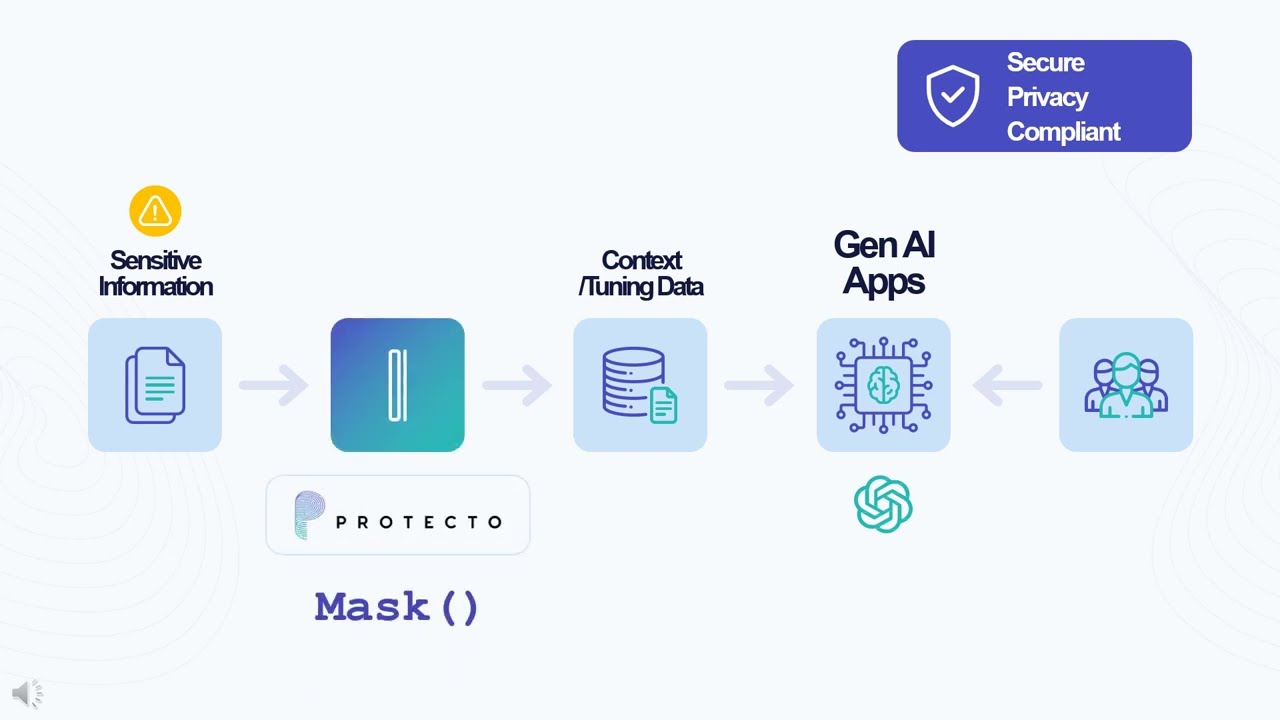

The Protecto.ai Solution: Pseudonymization for Data Sovereignty

Protecto.ai offers an elegant solution to this problem through pseudonymization.

Watch: Protecto – Data Protection for Gen AI Applications. Embrace AI confidently! (youtube.com)

Here’s how it works:

- Intelligent Tokenization: Before sending your data to OpenAI’s LLM, Protecto.ai replaces sensitive PII with secure tokens. These tokens aren’t random; they’re designed to preserve your data’s format, consistency, and context. Preserving these properties is crucial for maintaining LLM accuracy.

- Process Freely: Your pseudonymized data can now be safely processed by OpenAI’s models without violating India’s data residency requirements, because the original PII values stay within India.

- Reversing the Process: When the LLM generates responses, Protecto.ai seamlessly replaces the tokens with the original PII values, restoring the insights to their full context.
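
The three steps above can be sketched in a few lines of Python. This is a minimal illustration only, not Protecto.ai’s actual API or implementation: the `Pseudonymizer` class, the two regular expressions, the token formats, and the in-memory mapping (standing in for a vault hosted in India) are all assumptions made for the example. Real PII detection covers far more than emails and phone numbers.

```python
import re
import secrets

# Hypothetical patterns for illustration; real PII detection is much broader.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+91[- ]?\d{10}")


class Pseudonymizer:
    """Illustrative tokenizer; the mapping stays local (assumed in-country)."""

    def __init__(self):
        self._token_for = {}  # original value -> token
        self._value_for = {}  # token -> original value

    def _new_token(self, kind):
        # Tokens keep the shape of the data they replace (format preservation).
        if kind == "email":
            return f"user_{secrets.token_hex(4)}@example.invalid"
        return f"+91-{secrets.randbelow(10**10):010d}"

    def _token(self, value, kind):
        # Reuse the token for a repeated value so references stay consistent.
        if value not in self._token_for:
            token = self._new_token(kind)
            while token == value or token in self._value_for:
                token = self._new_token(kind)
            self._token_for[value] = token
            self._value_for[token] = value
        return self._token_for[value]

    def tokenize(self, text):
        """Step 1: replace PII with tokens before the text leaves the country."""
        text = EMAIL_RE.sub(lambda m: self._token(m.group(0), "email"), text)
        return PHONE_RE.sub(lambda m: self._token(m.group(0), "phone"), text)

    def detokenize(self, text):
        """Step 3: restore the original values in the LLM's response."""
        for token, value in self._value_for.items():
            text = text.replace(token, value)
        return text


pseudonymizer = Pseudonymizer()
prompt = "Email priya@example.in or call +91-9876543210. Repeat: priya@example.in"
safe_prompt = pseudonymizer.tokenize(prompt)

# Step 2 would send safe_prompt to the LLM; here the response simply echoes it.
llm_response = f"Summary of request: {safe_prompt}"
restored = pseudonymizer.detokenize(llm_response)
print(safe_prompt)
print(restored)
```

Because the same email address always maps to the same token, the model can still tell that both mentions refer to one person, which is the consistency property described above.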

Why Protecto.ai Is the Superior Choice

- Data Value Preservation: Protecto.ai’s tokenization approach goes beyond simple masking techniques. Generic masking strips out the format and relationships an LLM relies on, whereas Protecto.ai’s tokens maintain data integrity so that LLM accuracy is preserved. See also: “Why You Can’t Use Generic Data Masking with AI?”

- Deployment Flexibility: Whether your priority is to keep PII on-premise or within a cloud environment hosted in India, Protecto.ai provides options to fit your needs.

The Protecto.ai Advantage

By using Protecto.ai, you can:

- Comply with India’s data regulations: Keep your PII safe and secure within the country’s borders.

- Harness top-tier AI: Access the power of leading LLMs without sacrificing data sovereignty.

- Maximize insights: Maintain the value of your data, ensuring your AI models deliver optimal results.

If you’re working with customer data from India and want to unleash the potential of generative AI while adhering to data privacy laws, we encourage you to try Protecto.ai.

Let us know what you think! Have you faced these challenges with data residency? We’d love to hear your experiences and insights in the comments below.