Last week, OpenAI released Privacy Filter, an open-weight model for detecting and redacting PII in text. It is a thoughtful release: Apache 2.0 licensed, able to run locally, designed for high-throughput workflows, and built to go beyond regex-based detection.

This is good news for everyone building enterprise AI. Privacy at the model layer is getting real attention.

What we liked most was how clearly OpenAI described the role of the model. Privacy Filter is a redaction and data minimization aid, not a complete anonymization, compliance, or safety guarantee. It is best used as one layer in a broader privacy-by-design approach.

That is exactly right.

A strong PII detection model is one important layer. Enterprise AI needs the rest of the stack. It also needs to handle sensitive data that does not look like PII at all.

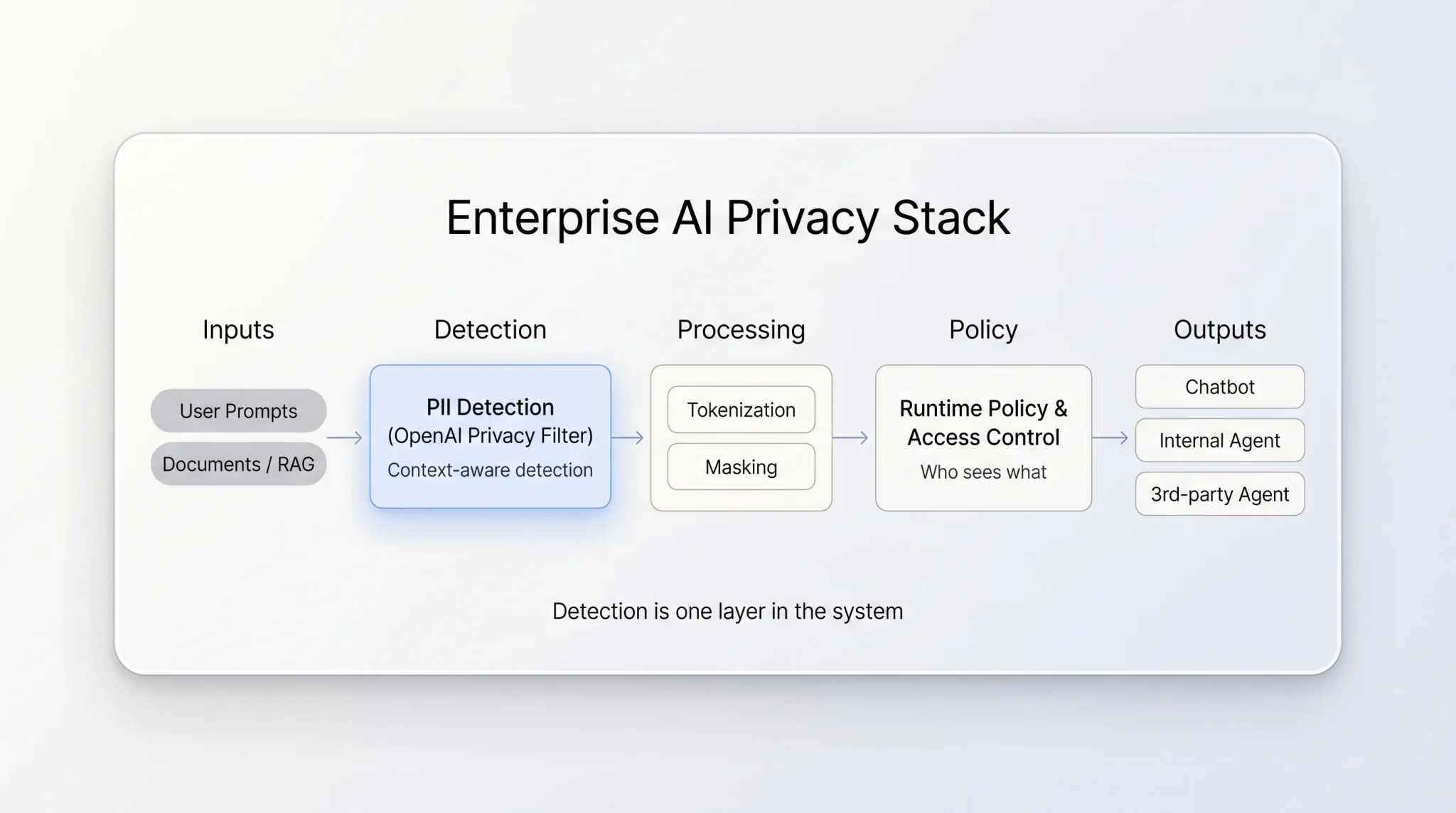

In this post, we will cover three points: PII detection is only one slice of enterprise data protection; replacing sensitive values requires context preservation so LLMs and agents can still reason; and production AI needs a full privacy system, not just a model.

We have been building that stack at Protecto for the last few years. Here is how we think about it.

Two data protection problems, not one

When companies put AI into production, two privacy problems show up. They get treated as one. They are not.

| Problem | What it looks like | What solves it |

|---|---|---|

| Handling PII safely inside AI workflows | Names, emails, account numbers in tickets, transcripts | ✓ Detection + tokenization + policy + audit |

| Protecting sensitive data that is not PII | Pricing notes, deal risks, internal instructions | ✓ Understanding the data and its context based on who is asking and why |

Privacy Filter makes meaningful progress on the first problem. There is still more to the first problem. By design, Privacy Filter is not intended to solve the second.

Let’s take them one at a time.

Problem 1: Even for PII, the model is just one piece

Detection itself has changed. The shift Privacy Filter represents, away from regex toward context-aware language models, is the right shift. Traditional NER and rule-based PII detection don’t hold up when meaning is contextual and data flows across multi-step agentic workflows. The same sentence can be sensitive in one workflow and harmless in another. A static classifier cannot tell the difference.

We saw this early and built Deepsight, our agentic detection system. Instead of running a fixed taxonomy over each input, Deepsight reasons about what a piece of text means inside the workflow it is part of. It understands the surrounding context, the role the data is playing, and the downstream use, and classifies accordingly.

Detection is the start. The hard work begins after detection.

How do you replace the value without breaking the AI?

Redaction works for humans. It breaks AI.

Take a clinical note:

"Patient John Mathew returned for follow-up with Dr. Lee. Dr. Lee recommended adjusting metformin after the prior visit."

Redact it the old way and the AI loses the thread:

"Patient [REDACTED] returned for follow-up with [REDACTED]. [REDACTED] recommended adjusting [REDACTED] after the prior visit."

The agent can no longer tell that the same doctor saw the patient across visits, or what was adjusted. The reasoning falls apart.

Tokenize it and the workflow keeps working:

"Patient <person>abcde</person> returned for follow-up with Dr.<person>ghijk</person>. Dr.<person>ghijk</person> recommended adjusting <medication>fgh45</medication> after the prior visit."

The values are protected. The structure is intact. The model can still reason. And on the way back, the right tokens are unmasked for the right user under the right policy.

Who is allowed to see the original value?

A doctor needs full patient details. A billing analyst needs only insurance fields. An external partner agent should see neither. The answer is not fixed, it depends on the user, the task, and the policy in effect right now.

OpenAI was upfront that Privacy Filter does not handle policy based masking or unmasking: “Privacy Filter does not support configuring label policies dynamically at runtime; instead changing policies requires further finetuning of the model.”

That is the right call for a model. It is not workable for an enterprise where policies change by user, by team, by region, by task.

The agent-to-agent problem

This is where most teams get caught off guard.

In a real AI workflow, you don’t have one agent. You have many. They talk to each other. They hand off work.

Picture a customer support flow with three agents:

- A frontline agent chats with the customer. It needs to see the customer’s name and account number.

- A summarizer agent takes the chat and writes a ticket for the engineering team. Engineering does not need PII to fix the bug, so this agent should strip it out.

- A vendor agent runs on a third-party platform that helps with translation. It should never see PII at all.

Same conversation. Three agents. Three different views of the data.

If your privacy layer applies a policy once at the front door, you have already lost. The frontline agent’s clean view will flow straight into the other two. You need a system that decides what each agent can see, every time data is passed, based on who that agent is and what it is doing.

This is what dynamic, runtime policy looks like. Different agents, different views, decided in real time. A detection model can flag the PII. It cannot make this decision.

The same logic applies one level up. A bank running in the US, the EU, and Singapore has three different sets of rules for the same kind of data. A healthcare network with research and clinical units has policies that conflict by design. A platform that runs all of this on shared infrastructure still has to keep each tenant’s data and policies cleanly separated. One leak across that boundary is one too many.

Can you prove what happened?

What was detected. What was masked. Who saw the original. When. Under which policy. Without a clean audit record, “we protected the data” is just a claim. Auditors and regulators do not accept claims.

Problem 2: Sensitive data goes far beyond PII

Here is the part that does not get enough airtime. Even if your PII detection is perfect, you have handled only a small slice of what enterprises actually need to protect.

Privacy Filter detects eight categories: names, addresses, emails, phone numbers, URLs, dates, account numbers, secrets. That is a clean list for PII. It is not what most enterprise leaks look like.

Read these two lines. Neither contains PII. Both are damaging if they reach the wrong person or the wrong index.

"Align the offer with the 50% max promo we discussed for Tier 1 partners."

No regex catches this. No detector flags it. And it tells anyone who reads it exactly how much room you have on price. Drop this into a RAG index that procurement or a partner agent can query, and you have handed over your discount strategy.

"This account is on a 90-day churn watch, do not raise the renewal."

No PII. Pure internal risk signal. If an agent puts this into a meeting prep document that gets shared with the customer, the renewal is dead.

There is a pattern. Sensitive data is not a list you can train a model on. It depends on three things: who is reading, what they are doing, and whether your policy lets them.

You cannot detect your way to that answer. No category list is wide enough. The control has to live one layer up, where you can look at the user, the task, and the policy together, in real time. This is what we mean by context-based access control. For most enterprises, this is where the bigger risk actually lives.

The full stack

Here is how we think about the layers around a detection model in enterprise AI. On top of all of this, the system has to run at enterprise scale: large data volumes, many concurrent users, predictable performance, and no single point of failure.

| Layer | What it does | Example |

|---|---|---|

| Detection | Finds sensitive PII entities, in context. Finds context-based sensitive data | OpenAI Privacy Filter for PII. Protecto Deepsight for both PII and context-based sensitive data |

| Tokenization | Replaces values while keeping structure | Context-preserving, reversible under policy |

| Policy at runtime | Decides what gets masked or excluded | Different rules for different users, agents |

| Access Control | Decides who or which agent can unmask, in real time | Mask for one agent, unmask for another |

| Audit | Records every detection, mask, and access | Clear trail tied to policy |

| Multi-tenancy | Isolates policies, masked values, and data across projects or regions | Departments, projects, and regions inside the same company often require different sensitivity rules and compliance controls |

| Scale and reliability | Handles enterprise data volume and traffic at scale | Sync APIs for low-latency calls, queues for bulk and async, autoscaling, high availability and disaster recovery |

| Deployment | Runs inside the customer’s environment | VPC, region-locked, on-premises |

A detection model is one row. A platform is the whole table. And for sensitive data that is not PII, the detection row does not apply at all, the rows that matter are policy and access control.

Where this leaves us

OpenAI shipping Privacy Filter is good for everyone in this space. It validates the move toward context-aware detection, which we have been investing in with Deepsight, and gives teams a strong open-weight option to start from. We expect to see it inside a lot of pipelines, including ones that work with Protecto.

What it does not do, and what OpenAI was careful to say it does not do, is solve the rest. Replacing values without breaking the AI. Deciding in real time who can see what. Carrying that decision across multiple agents, tenants, and regions. Keeping a clean audit trail. Running at enterprise scale and staying available when something fails. And the bigger question of sensitive data that no PII detector will ever catch.

That is the layer we work on. It is what turns a strong detection model into an AI workflow you can actually run in production.

Privacy is not a feature. It is the foundation that makes enterprise AI possible.