Over two-thirds of enterprises are already running agentic AI in production, according to a 2025 industry survey on the state of agentic AI security. Fewer than one in four have the visibility to know what those agents are actually doing. That gap is live right now, in systems handling customer data, financial records, and protected health information.

Most enterprises rely on security tools that never accounted for this. Firewalls, DLP policies, and role-based access controls all assume a predictable flow: a request arrives, a defined system processes it, and a response leaves. Agentic AI doesn’t work that way. An agent receives one instruction and may execute a dozen actions across multiple systems before a human sees anything. The data it touches doesn’t stay within your control perimeter.

AI agent security is the practice of controlling what agents can see and do while they are operating.

Agentic AI Security vs. Traditional Security: Key Differences

| Feature | Traditional Security | Agentic AI Security |

| Threat model | Static inputs and outputs | Autonomous multi-step actions |

| Access control point | Application boundary (RBAC) | Data layer, inside the pipeline |

| Data flow | Predictable, linear | Dynamic, across agents and tools |

| Human oversight | Present at each step | Minimal, often none |

| Primary failure mode | Unauthorized access | Uncontrolled data inside the pipeline |

| Compliance scope | Who accessed what system | What data did the agent actually see |

Figures are indicative based on published research. Confirm controls with your security team.

Why Your Existing Security Controls Break Inside an AI Pipeline

Most enterprise security is designed around one question: who is trying to access this system, and are they allowed in? That question gets answered at the authentication layer. Once an identity is verified, the controls largely step back.

AI agents expose what that architecture cannot handle. The issue isn’t who got in. It’s what the agent can see once it’s inside.

Meera works as a security engineer at a financial services firm. Her team had carefully configured RBAC across their CRM, document repository, and internal knowledge base. When they deployed an AI agent for customer support queries, every integration authenticated correctly. The agent had the access its role required.

Six weeks later, she pulled the logs. The agent had been surfacing account numbers in its summary outputs, not because anyone had misconfigured a permission, but because the data was available inside the context it was pulling from. Source-level controls had done their job. Once the data left the source and entered the agent’s reasoning loop, those controls no longer existed.

Traditional RBAC hits this wall in every agentic workflow. Access controls sit at the application boundary. Sensitive data doesn’t stay at the boundary. It moves into prompts, summaries, agent memory, and downstream agent inputs, and none of your existing policies travel with it.

The Four Threat Categories That Matter Most

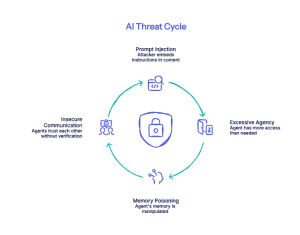

Prompt Injection

An AI agent reads content from external sources as part of its task: documents, emails, retrieved web pages, and database entries. An attacker embeds instructions inside that content. The agent treats them as legitimate task instructions and acts accordingly.

Single-session prompt injection is one problem. Multi-turn attacks are another category entirely. Research from early 2026 found success rates above ninety percent when attacks unfolded across extended conversations against commonly deployed open-weight models. Memory and tool access between turns was what made the difference.

OWASP’s Agentic AI Top 10 names goal hijacking through untrusted context as a primary failure mode in production deployments. Protecto’s mapping to those risks starts at the data layer, before the agent even reasons.

Excessive Agency and Supply Chain Compromise

You give an agent the access it needs for its task. You probably give it a little more because restricting it precisely takes time, and nobody’s testing the edge cases yet. That gap is what attackers use.

In October 2025, a widely used npm package that integrated with email systems had a single line of code quietly inserted on a version update. Every outbound email from every deployment using that version was being BCC’d to an external address. The package reached around 300 organizations before anyone pulled it. The package had looked legitimate through fifteen previous versions. Protecto’s excessive agency breakdown documents the full account of that incident.

OWASP elevated excessive agency to a top-tier vulnerability because the blast radius depends on which tools and data the agent could reach, not just how the attack got in.

Memory Poisoning

Agentic AI systems retain context across sessions. Embeddings, summaries, RAG stores. One manipulated document gets indexed into that memory. The attacker doesn’t come back.

The alert doesn’t fire. The agent keeps operating, pulling from the same poisoned context, session after session. Most queries return normal outputs. On specific inputs that match the manipulated pattern, they don’t. By the time someone pulls the logs, the drift has been running for weeks.

Insecure Agent-to-Agent Communication

Multi-agent systems pass context between agents without the scrutiny applied to human-initiated actions. One agent delegates to another. The second agent receives the request and trusts the first agent’s stated authority.

A documented healthcare scenario involved a compromised scheduling agent passing a forged request to a clinical data agent, claiming elevated permissions from a physician. The clinical agent released patient records. No alert fired. Nobody had built a verification layer for agent-to-agent requests, and nothing in the architecture required one.

What Effective AI Agent Security Actually Requires

The identity question matters. It’s not the whole problem.

Most enterprises built their agentic AI security around access controls. Who reaches which system, under which role? That work was real, and most of it holds.

The data moves anyway. Prompts, summaries, inter-agent messages, and RAG stores that a dozen downstream agents pull from. Your CRM permissions stayed at the CRM.

Context-based access control for AI sits at the inference layer. A billing support agent and a general inquiry agent can run the same prompt against the same system. One of them returns a subset. Which subset depends on who triggered it, what task they’re running, and what their role covers in that specific context.

Gartner predicts that over a third of enterprise applications will contain agentic AI by 2028, up from under one percent in 2024. The WEF’s breakdown of non-human identities tracks how fast API keys, service accounts, and agent tokens are accumulating across enterprise environments. Most of them don’t show up in any inventory.

Field-level audit trails are the gap most deployments haven’t closed. Not summary logs. Records of what specific data each agent accessed, in which workflow, and at what point in the session. Some healthcare and financial services teams won’t sign off on internal AI deployment without them.

Protecto’s Privacy Vault tokenizes sensitive data before it enters agent pipelines. It doesn’t reach prompts or inter-agent messages in raw form.

Conclusion

AI agent security isn’t a future problem. Agents are now running across healthcare systems, banking platforms, and enterprise SaaS stacks. The pipelines they run inside don’t respond to your DLP rules or your RBAC configuration.

Named organizations with mature security teams have already encountered these failure modes. The incidents are documented.

The enterprises that manage this well have built a data control layer inside the pipeline before something goes wrong. Identity governance tells you who accessed a system. Data governance tells you what the agent actually saw. Most organizations have only built the first one.

Start with your data control layer before your agent fleet grows past what you can audit manually.

Frequently Asked Questions

What is AI agent security?

Autonomous AI agents pull data from systems you secured, pass it between pipelines, write to memory, and act on instructions without a human reviewing each step. The practice of controlling what they can see and do during all of that is AI agent security. It isn’t the same problem as securing a traditional application. The data doesn’t stay where your controls are.

What is the difference between agentic AI security and regular AI security?

Regular AI security has a boundary. Block what goes in, filter what comes out. Agentic AI security doesn’t work that way. One instruction to an agent branches. API calls, memory writes, tasks handed to other agents. Something goes wrong in one of them. Three workflows later, someone is still trying to trace where it started.

Why doesn’t traditional RBAC work for AI agents?

RBAC stays at the application. An AI agent pulls data into its context, and those permissions don’t travel with prompts, summaries, or downstream agent inputs. The source-level controls stayed at the source. Context-based access control sits at the inference layer instead. What the agent sees depends on who triggered it, not just whether they authenticated.

What is prompt injection in the context of AI agents?

Someone embeds instructions inside a document, an email, or a retrieved web page. The AI agent reads it. It doesn’t separate the instructions from the content. In a multi-agent system, the instruction moves. It’s three agents deep before anyone reviews an output.

What should enterprises do first to secure AI agents?

Start with inventory. Every deployed agent, every tool it reaches, every data source it has touched. A customer support agent at one enterprise had clean access controls across every integration, documented and reviewed. Six weeks after go-live, a support query returned a field it had no business returning. The system-level controls were fine. The pipeline wasn’t.