Zero Trust AI Access (ZTAI) is a security framework that applies “never trust, always verify” principles to every interaction involving AI systems, including LLMs and AI agents, as well as the sensitive data they process.

Traditional zero trust was built to protect people accessing applications. ZTAI extends those same principles to a new category of actor: AI itself. Every prompt sent to a model, every document an agent reads, and every API call an AI pipeline makes must be authenticated, authorized, and continuously monitored. No interaction gets a free pass based on origin or location.

The urgency is not theoretical. According to the IBM Cost of a Data Breach Report 2025, based on research by Ponemon Institute across 600 organizations globally, 97% of organizations that reported an AI-related security incident lacked proper AI access controls. Enterprises are deploying AI faster than they are securing it, and the gap is widening.

For teams building AI pipelines that handle customer data, PHI, or financial records, understanding AI data privacy and compliance frameworks is the essential first step toward a ZTAI posture.

Why Does Traditional Zero Trust Break Down in AI Environments?

Traditional zero trust secures access based on identity and location. AI environments introduce a third dimension: context. An AI agent does not log in once and stop. It continuously queries data, chains tool calls, and generates outputs that may contain sensitive information.

Standard Role-Based Access Control (RBAC) assigns permissions to a user’s role. That works well for structured databases and file shares. AI pipelines are different: they combine unstructured documents, live API calls, multi-agent workflows, and dynamically assembled context windows. An RBAC policy that grants a support agent access to “support tickets” has no visibility into what happens when the LLM combines that ticket with a contract clause, a salary figure, and a product roadmap in a single context window.

Three specific failure modes define where legacy access control breaks:

| Failure Mode | How It Happens | How ZTAI Addresses It |
| --- | --- | --- |
| Over-privileged AI agents | Agents inherit the permissions of the user who triggered them, not the minimum needed | Least-privilege enforcement per agent action |
| Context window data leakage | Sensitive data from multiple sources merges invisibly in the LLM context | Real-time content inspection and masking before inference |
| Prompt injection | Attacker-controlled input manipulates the model into bypassing access policies | Input validation and intent verification at every step |

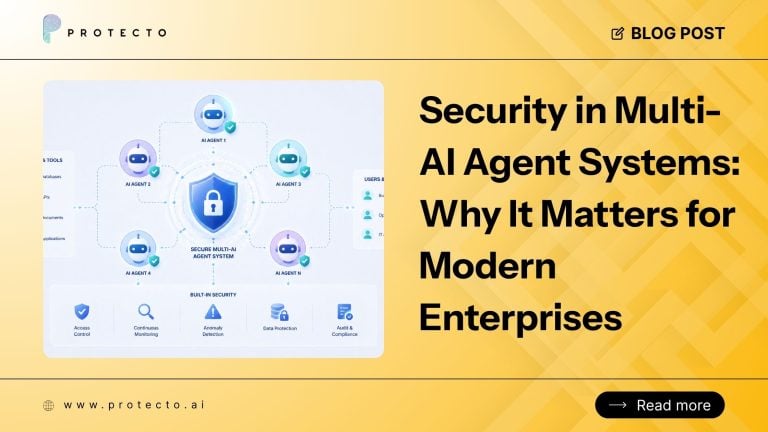

The problem compounds with multi-agent systems. When one AI agent delegates a task to another, the second agent carries no verified trust of its own. Without ZTAI security controls, a single compromised agent can move laterally across your entire data layer. Effective AI agent access control must treat every agent-to-agent communication as a new, unverified access request.
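One way to picture agent-to-agent verification is scope attenuation: a delegated agent receives its own grant, and that grant can never exceed what the delegating agent holds. The sketch below is illustrative only. The `AgentGrant` type, the `delegate` and `authorize` helpers, and the scope names are all hypothetical, not part of any specific product or standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical grant: the scopes one agent is allowed to use."""
    agent_id: str
    scopes: frozenset


def delegate(parent: AgentGrant, child_id: str, requested: set) -> AgentGrant:
    """Issue a child grant limited to scopes the parent already holds."""
    granted = frozenset(requested) & parent.scopes
    return AgentGrant(agent_id=child_id, scopes=granted)


def authorize(grant: AgentGrant, scope: str) -> bool:
    """Check every action against the acting agent's own grant."""
    return scope in grant.scopes


planner = AgentGrant("planner", frozenset({"tickets:read", "kb:read"}))
# The child asks for more than the parent holds; the extra scope is dropped.
worker = delegate(planner, "summarizer", {"tickets:read", "hr:read"})

print(authorize(worker, "tickets:read"))  # True
print(authorize(worker, "hr:read"))       # False
```

The design choice here is that the second agent is evaluated on its own grant for every action, so a compromised child cannot reach data its parent was never allowed to touch.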

What Are the Core Principles of Zero Trust AI Access?

ZTAI applies five principles that together prevent any AI actor, whether a human-facing chatbot or an autonomous agent, from accessing more data than it needs, for longer than necessary, without continuous re-verification.

Continuous Identity Verification

Every entity interacting with an AI system, whether a user, agent, or service, must authenticate per request. A session token from 10 minutes ago is not sufficient proof of current legitimacy. Trust expires with every interaction.
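As a rough illustration of per-request verification, the sketch below signs each request with an HMAC and rejects anything older than a short window. It assumes a shared secret and a 60-second freshness limit, both chosen only for the example. The function names are hypothetical, and a production system would more likely rely on its IAM platform's short-lived credentials.

```python
import hashlib
import hmac
import time

SECRET = b"demo-only-secret"   # assumption: stand-in for a managed key
MAX_AGE_SECONDS = 60           # assumption: freshness window for one request


def sign_request(entity_id: str, action: str, issued_at: int) -> str:
    """Bind the signature to who is acting, what they want, and when."""
    message = f"{entity_id}|{action}|{issued_at}".encode()
    return hmac.new(SECRET, message, hashlib.sha256).hexdigest()


def verify_request(entity_id, action, issued_at, signature, now=None) -> bool:
    """Accept a request only if it is fresh and the signature matches."""
    now = int(time.time()) if now is None else now
    if issued_at > now or now - issued_at > MAX_AGE_SECONDS:
        return False  # stale or future-dated proof is not trusted
    expected = sign_request(entity_id, action, issued_at)
    return hmac.compare_digest(expected, signature)


issued = 1_000
sig = sign_request("agent-7", "read:tickets", issued)
print(verify_request("agent-7", "read:tickets", issued, sig, now=issued + 30))   # True
print(verify_request("agent-7", "read:tickets", issued, sig, now=issued + 600))  # False
```

Because the action is part of the signed message, a signature issued for one action cannot be replayed to authorize a different one.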

Least-Privilege Access for AI Actors

An AI agent summarizing sales reports has no business accessing HR records. ZTAI policies restrict access to the minimum data required for a specific task, enforced at the data layer, not just the application layer.

Assume Breach

AI systems must be designed under the assumption that any model, agent, or pipeline can be compromised. Prompt injection, model inversion, and training data poisoning are real attack vectors. Controls must contain the blast radius of any single failure.

Real-Time Data Inspection

AI data flows through unstructured prompts and responses, not network packets that a perimeter tool can inspect. Zero trust for AI requires content-level detection and masking of PII and PHI before sensitive data reaches the model. This is where AI data leak prevention controls operate directly.
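A minimal sketch of content-level inspection, assuming two simple regular expressions for email addresses and US Social Security numbers. Real detectors cover far more entity types and usually combine patterns with ML-based recognition; the `PATTERNS` table and `mask_prompt` function are hypothetical names used only for illustration.

```python
import re

# Assumption: two illustrative patterns, not a complete PII taxonomy.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_prompt(text: str):
    """Replace detected values with labels and count what was found."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, found = pattern.subn(f"[{label}]", text)
        counts[label] = found
    return text, counts


masked, counts = mask_prompt("Contact jane.doe@example.com, SSN 123-45-6789.")
print(masked)  # Contact [EMAIL], SSN [SSN].
print(counts)  # {'EMAIL': 1, 'SSN': 1}
```

The counts can feed monitoring, so a sudden spike in detected identifiers in one pipeline becomes visible before it becomes an incident.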

Immutable Audit Trails

Every data access, agent action, and model output must be logged with enough granularity to satisfy a compliance audit. Under India’s DPDP Act, the EU’s GDPR, and HIPAA, auditability is not optional. It is the difference between passing a regulatory review and failing one.
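One common way to make a log tamper-evident is to chain entries by hash, so that editing any past record breaks every hash after it. The sketch below is a simplified, in-memory illustration under that assumption. The `AuditLog` class is hypothetical, and true immutability would also need write-once storage or an external anchor for the latest hash.

```python
import hashlib
import json


class AuditLog:
    """Hypothetical append-only log where each entry commits to the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(record: dict) -> str:
        payload = json.dumps(record, sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()

    def append(self, actor: str, action: str, resource: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"actor": actor, "action": action,
                  "resource": resource, "prev": prev_hash}
        record["hash"] = self._digest(record)  # hash computed before the key is added
        self.entries.append(record)

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry fails the check."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("agent-7", "read", "tickets/1042")
log.append("agent-7", "summarize", "tickets/1042")
print(log.verify())  # True

log.entries[0]["resource"] = "hr/salaries"  # simulate tampering
print(log.verify())  # False
```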

The NIST Special Publication 800-207, which defines Zero Trust Architecture, establishes that no trust is implicit based on network location. ZTAI applies that same logic across the full AI stack.

How Does ZTAI Differ from a Standard Zero-Trust Architecture?

Standard Zero Trust Architecture secures who accesses what. ZTAI secures who accesses what, how data is used during AI inference, and what the model produces on the other side.

The distinction matters operationally. A user accessing a database via a zero-trust proxy passes identity verification at the entry point. That is the end of the security interaction for standard ZTA. In an AI pipeline, the access event is ongoing. The model processes data, generates intermediate outputs, calls external tools, and passes results to other agents. Each of those steps represents a new trust decision that standard zero trust has no mechanism to evaluate.

ZTAI also introduces non-human identities as first-class security principals. Microsoft’s Zero Trust for AI reference architecture, published in March 2026, specifically identifies overprivileged agents as a primary risk vector in agentic systems. Agents that operate without scoped permissions can function as internal threats without any external attacker involved.

Standard zero trust cannot inspect a prompt for PII before it leaves your network boundary. That requires a separate layer: context-aware masking and access control at the AI interface, which is what Context-Based Access Control (CBAC) delivers within a ZTAI architecture.

What Does ZTAI Implementation Look Like in Practice?

Agentic AI security implementation requires controls at four distinct layers. Securing only one leaves the other three exposed.

Identity Layer

Define every entity that interacts with your AI system, including users, agents, tools, and data connectors, and assign each a verifiable identity. Integrate with your existing IAM platform and enforce per-request authorization rather than per-session. An agent that authenticated an hour ago must re-verify before its next action.

Data Layer

Scan all data before it enters an AI context window. Detect and mask PII, PHI, financial identifiers, and confidential business data. Use format-preserving tokenization so the model receives semantically intact input without seeing raw sensitive values. This approach maintains AI reasoning quality while preventing exposure before inference.
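The sketch below illustrates the mask-then-restore idea with a simple lookup vault that swaps each digit for a random digit and each letter for a random letter, keeping separators in place. This is a simplified stand-in, not cryptographic format-preserving encryption, and the `TokenVault` class is hypothetical.

```python
import secrets
import string


class TokenVault:
    """Hypothetical vault mapping sensitive values to shape-preserving tokens."""

    def __init__(self):
        self._to_token = {}
        self._to_value = {}

    @staticmethod
    def _random_like(value: str) -> str:
        out = []
        for ch in value:
            if ch.isdigit():
                out.append(secrets.choice(string.digits))
            elif ch.isalpha():
                out.append(secrets.choice(string.ascii_lowercase))
            else:
                out.append(ch)  # keep separators so the format survives
        return "".join(out)

    def tokenize(self, value: str) -> str:
        if value in self._to_token:
            return self._to_token[value]  # same value always gets the same token
        if not any(ch.isdigit() or ch.isalpha() for ch in value):
            raise ValueError("value has no characters to tokenize")
        token = self._random_like(value)
        while token == value or token in self._to_value:
            token = self._random_like(value)
        self._to_token[value] = token
        self._to_value[token] = value
        return token

    def restore(self, text: str) -> str:
        """Swap tokens back to original values in a model response."""
        for token, value in self._to_value.items():
            text = text.replace(token, value)
        return text


vault = TokenVault()
card = "4111-1111-1111-1111"
token = vault.tokenize(card)
prompt = f"Confirm the refund for card {token}."      # what the model sees
response = f"Refund confirmed for card {token}."      # stand-in for a model reply
print(vault.restore(response))  # Refund confirmed for card 4111-1111-1111-1111.
```

Keeping the vault inside your own boundary is what makes the approach useful: the model only ever sees the token, and restoration happens after the response returns.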

Model Access Layer

Apply least-privilege controls to model access itself. A junior analyst’s AI assistant should not query the same data sources as a senior compliance officer’s agent. Policies must be dynamic, adapting to the user, the task, and the sensitivity of the data being requested, not fixed to a static role assignment made months ago.
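A dynamic policy of this kind can be sketched as a function that evaluates the requester, the task, and the data sensitivity together on every request. The sensitivity levels, task names, and data source names below are assumptions made up for the example, as are the `AccessRequest` type and `decide` function.

```python
from dataclasses import dataclass

# Assumption: a four-level sensitivity scale chosen for illustration.
SENSITIVITY = {"public": 0, "internal": 1, "confidential": 2, "restricted": 3}

# Assumption: which data sources each task legitimately needs.
TASK_SOURCES = {
    "summarize_sales": {"sales_reports"},
    "compliance_review": {"sales_reports", "audit_findings"},
}


@dataclass(frozen=True)
class AccessRequest:
    clearance: str            # highest sensitivity the requester may see
    task: str                 # what the AI assistant is trying to do
    source: str               # data source being queried
    source_sensitivity: str   # classification of that source


def decide(request: AccessRequest) -> str:
    """Deny unless the task needs the source and the clearance covers it."""
    if request.source not in TASK_SOURCES.get(request.task, set()):
        return "deny"
    if SENSITIVITY[request.source_sensitivity] > SENSITIVITY[request.clearance]:
        return "deny"
    return "allow"


analyst = AccessRequest("internal", "summarize_sales", "sales_reports", "internal")
overreach = AccessRequest("internal", "summarize_sales", "audit_findings", "restricted")
officer = AccessRequest("restricted", "compliance_review", "audit_findings", "restricted")

print(decide(analyst))    # allow
print(decide(overreach))  # deny
print(decide(officer))    # allow
```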

Output Layer

Inspect and filter model outputs before they reach the end user or a downstream agent. An LLM can inadvertently reconstruct sensitive data from masked inputs through inference. Output filtering catches this before information exits your control boundary entirely.
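As a hedged sketch of output filtering, the function below checks a model response against a set of values known to be protected, then against generic patterns, and redacts both. The `filter_output` name, the protected-value set, and the phone pattern are assumptions for illustration; they do not describe any particular product's behavior.

```python
import re


def filter_output(output: str, protected_values: set, patterns: dict):
    """Redact known protected values and pattern matches; report what was found."""
    findings = []
    for value in protected_values:
        if value in output:
            output = output.replace(value, "[REDACTED]")
            findings.append("protected_value")
    for label, pattern in patterns.items():
        output, found = pattern.subn("[REDACTED]", output)
        findings.extend([label] * found)
    return output, findings


protected = {"Priya Raman"}  # e.g. a value that was masked on the way in
patterns = {"PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b")}

safe, findings = filter_output(
    "Priya Raman can be reached at 555-010-1234.", protected, patterns
)
print(safe)      # [REDACTED] can be reached at [REDACTED].
print(findings)  # ['protected_value', 'PHONE']
```

Checking against known protected values, not just patterns, is what catches the case where a model reconstructs something that was masked in the input.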

For organizations operating under India’s DPDP Act or similar data sovereignty regulations, a complete ZTAI posture also requires that raw sensitive data never leave the jurisdiction. GPTGuard addresses this directly by masking PII before prompts reach external LLMs, then restoring original values when the response returns, so no raw personal data crosses a regulatory border.

Conclusion

ZTAI is not a future-state aspiration. It is the security architecture that enterprises need right now to deploy AI without creating unchecked liability. The IBM 2025 breach data makes the cost of inaction concrete: organizations with high levels of shadow AI paid an average of $670,000 more per breach than those with low or no shadow AI, and 97% of organizations that reported an AI-related security incident lacked proper AI access controls.

The teams building agentic pipelines, deploying LLMs for customer-facing workflows, or integrating AI into regulated environments cannot rely on access models designed for static systems and human users. ZTAI, enforced at the identity, data, model, and output layers, is what makes AI deployable at scale without compromising the data it touches. See how organizations in banking, healthcare, and enterprise SaaS have already built this posture in Protecto’s customer case studies.

Frequently Asked Questions

Who actually needs ZTAI?

Any organization running AI on sensitive data needs ZTAI: healthcare teams processing PHI, financial services firms running LLM analytics on customer records, and SaaS platforms with multi-tenant AI pipelines. Organizations whose AI systems only process fully synthetic or publicly available data face a lower risk threshold. The practical trigger is this: if your AI touches data you would not want exposed in a breach, ZTAI controls are not optional.

Does ZTAI replace standard zero trust, or extend it?

It extends it. A standard zero-trust model handles identity and network access at the point of entry. ZTAI adds the AI-specific enforcement layer on top: prompt inspection, context window monitoring, agent-to-agent trust boundaries, and output filtering. Organizations that have already invested in zero trust architecture do not start over. They add ZTAI controls at the data and inference layers where standard ZTA has no visibility.

What is the real cost of skipping AI access controls?

According to the IBM 2025 Cost of a Data Breach Report, organizations with high levels of shadow AI paid $670,000 more per breach than those with low or no shadow AI. Beyond direct costs, 60% of AI-related security incidents resulted in compromised data, and 31% caused operational disruption. For enterprises in healthcare or financial services, compliance penalties compound the financial exposure further.

Which regulations make ZTAI non-negotiable for enterprises?

India’s DPDP Act, the EU’s GDPR, and HIPAA all impose data minimization, access control, and audit requirements that uncontrolled AI pipelines routinely violate. Enforcement is already costing organizations money: according to the IBM 2025 Cost of a Data Breach Report, 32% of breached organizations paid regulatory fines, and 48% of those fines exceeded $100,000. The practical consequence is not just a fine. Organizations face operational restrictions, reputational damage, and in some jurisdictions, personal liability for executives overseeing non-compliant AI deployments.

How is CBAC different from ZTAI?

ZTAI is the security philosophy. Context-Based Access Control (CBAC) is one mechanism for enforcing it. RBAC asks, “What role does this user hold?” CBAC asks, “What is this specific data, who is requesting it, and in what context?” A ZTAI architecture without CBAC can enforce identity controls, but cannot govern what an AI agent does with data once access is granted. CBAC fills that gap at the content and inference layer.