Today, more and more enterprises are adopting multi-AI agent systems. These systems can automate tasks, connect tools, and enable faster decision-making across teams. However, as adoption grows, so do the risks. Security in multi-AI agent systems is no longer a technical afterthought. It is a core business requirement.

According to an IBM report, 13% of companies have experienced breaches involving AI models or applications. The challenge is clear: as AI adoption grows, so does the need for strong security.

This is why security in multi-AI agent systems must be built in from day one. In this guide, we break down the risks, explain what matters most, and show how to implement strong security features for multi-LLM agent systems in regulated industries.

What Are Multi-AI Agent Systems?

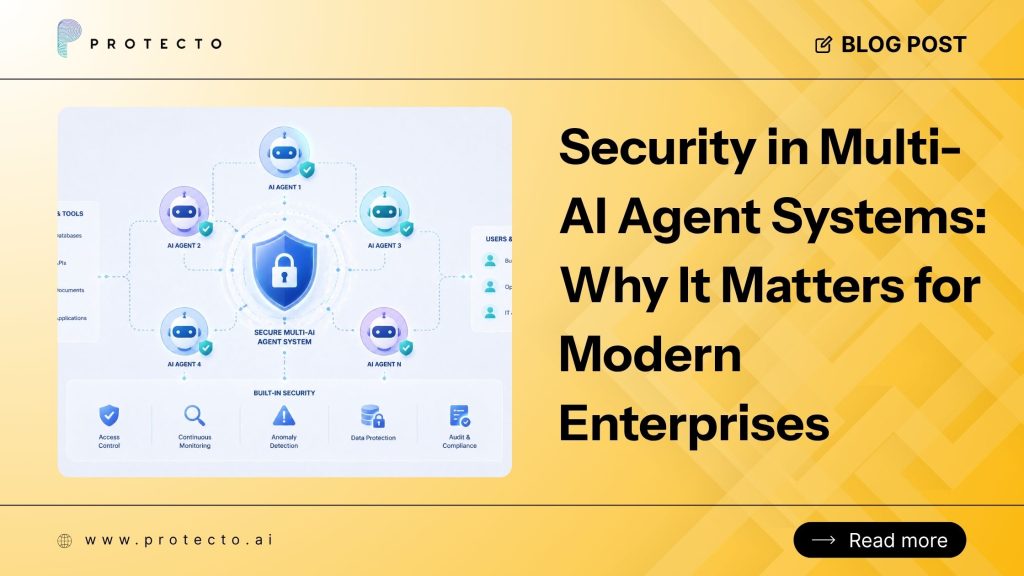

Multi-AI agent systems are environments in which multiple AI agents collaborate to complete tasks. Each agent has a specific role and communicates with others to achieve a shared goal.

Instead of one model doing everything, tasks are distributed across agents. This improves efficiency but also adds complexity. These systems typically include:

- Agents that retrieve data

- Agents that process or analyze information

- Agents that take action or generate outputs

- Integrations with APIs, tools, and enterprise systems

Because these agents interact constantly, even a small vulnerability can affect the entire system. This is where security in multi-AI agent systems becomes critical.

Why Security in Multi-AI Agent Systems Matters

Risk grows as systems become more connected. Security is no longer only about protecting data; it also covers how agents communicate, what they are permitted to do, and how their actions are governed.

Here are the main reasons why security in multi-AI agent systems is necessary:

1. Expanded Attack Surface

Every new agent adds another entry point into your system. These entry points can be exploited if not secured properly.

- More APIs and endpoints

- More internal communications

- More permission layers

Notably, 70% of breaches involve lateral movement within the system itself. Once attackers gain access, they can move from one weak point to another with ease. Strong security in multi-agent AI systems is therefore a must-have, as a single compromised agent can expose sensitive data.

2. Sensitive Data Exposure

Multi-agent systems often handle critical enterprise data, including customer records, financial details, and proprietary information. If not properly protected, this data can leak through:

- Prompts and responses

- Logs and stored outputs

- API integrations

In regulated sectors, even a small leak can lead to compliance violations. This is why security features for multi-LLM agent systems in regulated industries must focus heavily on data protection.
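
One way to reduce leakage through logs is to redact sensitive values before a record is written. The sketch below uses Python's standard `logging.Filter`; the `RedactingFilter` name and the two patterns are illustrative assumptions, and a real deployment would need patterns matched to its own data.

```python
import logging
import re

# Illustrative patterns only; real systems need patterns tuned to their data.
PATTERNS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    (re.compile(r"\b\d(?:[ -]?\d){12,15}\b"), "[CARD]"),
]

def redact(text: str) -> str:
    """Replace anything matching a sensitive pattern with a placeholder."""
    for pattern, placeholder in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text

class RedactingFilter(logging.Filter):
    """Hypothetical filter that scrubs a log record before it is emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = redact(record.getMessage())
        record.args = ()  # the message is already fully formatted
        return True

logger = logging.getLogger("agent")
logger.addFilter(RedactingFilter())
```

The same `redact` function could also be applied to prompts and stored outputs before they are persisted.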

3. Autonomous Decision Risks

AI agents can act without constant human input. This is a powerful benefit, but it can also be risky. For example, if an agent is manipulated, it can trigger incorrect workflows, send the wrong communication, or make harmful updates.

This makes governance essential. Strong security in multi-AI agent systems ensures that automation does not turn into uncontrolled risk.
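
One common governance pattern is an approval gate that holds high-risk actions until a human signs off. The following is a minimal sketch of that idea; the action names, the `ProposedAction` type, and the `route_action` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of actions considered too risky to run unattended.
HIGH_RISK_ACTIONS = {"send_external_email", "update_customer_record", "issue_refund"}

@dataclass
class ProposedAction:
    agent: str
    name: str
    payload: dict

def route_action(action: ProposedAction, approved_by: Optional[str] = None) -> str:
    """Return 'execute' or 'hold' for an agent's proposed action."""
    if action.name not in HIGH_RISK_ACTIONS:
        return "execute"
    # High-risk actions run only after a named human has approved them.
    return "execute" if approved_by else "hold"
```

Low-risk actions stay fully automated, so the gate adds friction only where a mistake would be costly.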

4. Compliance and Regulatory Pressure

Industries such as finance, healthcare, and insurance operate under strict regulatory oversight, so their AI systems must comply with the applicable rules. Common regulatory frameworks include:

- GDPR for data privacy

- HIPAA for healthcare data

- SOC 2 for security controls

About 60% of organizations see compliance as a major AI risk. Without proper security features for multi-LLM agent systems in regulated industries, businesses risk fines and reputational damage.

Key Security Features for Regulated Industries

Regulated industries such as healthcare and finance have to comply with laws such as the GDPR, HIPAA, and India’s DPDP Act. These laws restrict how personal data is shared and processed, so you cannot simply send customer names or credit card numbers to a public AI model.

To stay safe, you need specific security features for multi-LLM agent systems in regulated industries.

1. Context-Aware Masking

One of the best ways to protect data is data masking. This means replacing a sensitive value, such as a real name, with a substitute before the AI sees it. However, if you mask too much, the AI loses the context it needs to reason correctly. Modern security tools use “context-aware” masking.

This preserves the meaning of the sentence, so the AI can still reason accurately while the private data stays hidden.
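
To illustrate the idea, the sketch below replaces each sensitive value with a typed, numbered placeholder, so the model can still tell that two mentions refer to the same person. It assumes the sensitive values have already been detected (real tools typically use entity recognition for that step); the `mask` function and the placeholder format are hypothetical.

```python
from collections import defaultdict

def mask(text: str, entities: dict[str, str]) -> tuple[str, dict[str, str]]:
    """Replace each known entity with a typed, numbered placeholder.

    `entities` maps a sensitive string to its type, e.g. {"Alice Rao": "PERSON"}.
    The same value always maps to the same placeholder, so references stay linked.
    """
    counters: dict[str, int] = defaultdict(int)
    mapping: dict[str, str] = {}
    for value, kind in entities.items():
        counters[kind] += 1
        placeholder = f"<{kind}_{counters[kind]}>"
        mapping[placeholder] = value  # kept privately, to restore values later
        text = text.replace(value, placeholder)
    return text, mapping

masked, mapping = mask(
    "Alice Rao paid Bob Lee. Alice Rao asked for a receipt.",
    {"Alice Rao": "PERSON", "Bob Lee": "PERSON"},
)
# masked == "<PERSON_1> paid <PERSON_2>. <PERSON_1> asked for a receipt."
```

Because the placeholders keep the entity type and identity, the sentence structure survives even though the names do not.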

2. Context-Based Access Control (CBAC)

In a multi-agent system, not every agent needs to know everything. For example, an agent that writes emails should not have access to a customer’s full medical history. Context-Based Access Control (CBAC) ensures that each agent gets only the data it needs for its specific task.
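
A minimal sketch of this principle follows: a policy table maps each agent role and task to the fields it may see, and everything else is stripped before the record is handed over. The roles, tasks, field names, and the `scoped_view` function are assumptions made for illustration.

```python
# Hypothetical policy: which record fields each (agent role, task) pair may see.
POLICY = {
    ("email_writer", "appointment_reminder"): {"name", "email", "appointment_time"},
    ("clinical_summarizer", "visit_summary"): {"name", "diagnosis", "medications"},
}

def scoped_view(record: dict, role: str, task: str) -> dict:
    """Return only the fields the policy allows for this role and task.

    Unknown role/task pairs get an empty view (deny by default).
    """
    allowed = POLICY.get((role, task), set())
    return {key: value for key, value in record.items() if key in allowed}

patient = {
    "name": "A. Rao",
    "email": "a.rao@example.com",
    "appointment_time": "2025-03-01 10:00",
    "diagnosis": "hypertension",
    "medications": ["lisinopril"],
}
view = scoped_view(patient, "email_writer", "appointment_reminder")
```

Here the email-writing agent receives the name, email, and appointment time, but never the diagnosis or medications.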

3. Real-Time Tokenization

Tokenization replaces sensitive data with a “token”, a code that has no meaning on its own. A stolen token is useless to an attacker because the actual data is stored separately in a secure privacy vault. Only authorized systems can turn the token back into real data.
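
The mechanism can be sketched with an in-memory stand-in for the vault. The `TokenVault` class, the `tok_` prefix, and the caller names are hypothetical; a production vault would be a separate, hardened service rather than a Python dictionary.

```python
import secrets

class TokenVault:
    """In-memory stand-in for a privacy vault (illustrative only)."""

    def __init__(self, authorized: set[str]):
        self._store: dict[str, str] = {}
        self._authorized = authorized

    def tokenize(self, value: str) -> str:
        # The token is random, so it reveals nothing about the original value.
        token = "tok_" + secrets.token_hex(8)
        self._store[token] = value
        return token

    def detokenize(self, token: str, caller: str) -> str:
        if caller not in self._authorized:
            raise PermissionError(f"{caller} may not detokenize")
        return self._store[token]

vault = TokenVault(authorized={"billing_service"})
token = vault.tokenize("4111 1111 1111 1111")
```

Agents pass `token` around freely, while only `billing_service` can call `detokenize` to recover the original value.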

4. Semantic Filtering

Sometimes agents can be “tricked” into giving away secrets through a “prompt injection” attack. Semantic filtering looks at what the agent is about to say. If the response contains toxic content or sensitive data, the filter stops it from reaching the user.
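
The control flow of such an output filter can be sketched as follows. Note the substitution: a real semantic filter would typically rely on a trained classifier, whereas this example uses regular expressions as stand-in detectors purely to stay runnable. The detector names, the `sk-` key format, and the `filter_response` function are assumptions.

```python
import re

# Stand-in detectors. A real semantic filter would typically use a trained
# classifier; regular expressions are used here only to keep the sketch runnable.
BLOCK_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),  # hypothetical key format
}

def filter_response(response: str) -> tuple[bool, list[str]]:
    """Return (allowed, reasons). The response is blocked if any detector fires."""
    reasons = [name for name, pattern in BLOCK_PATTERNS.items() if pattern.search(response)]
    return (not reasons, reasons)
```

The filter sits between the agent and the user, so a successful injection upstream still has to get past this final check.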

How to Build a Secure Multi-Agent Framework

A structured approach makes security easier to implement and manage. Follow these steps to strengthen security in multi-AI agent systems.

Step 1: Map Your System

Start by understanding your system fully. First, list all agents and their roles. Next, map how data moves between them. Finally, identify every external connection and integration.
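
A system map does not need special tooling to begin with. The sketch below records agents, data flows, and external services in plain data structures and derives two useful views from them; all agent names, services, and helper functions are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Agent:
    name: str
    role: str
    external_services: list[str] = field(default_factory=list)

@dataclass
class DataFlow:
    source: str
    target: str
    data: str

agents = [
    Agent("retriever", "retrieve data", external_services=["crm_api"]),
    Agent("analyst", "analyze information"),
    Agent("actor", "take action", external_services=["email_gateway"]),
]
flows = [
    DataFlow("retriever", "analyst", "customer records"),
    DataFlow("analyst", "actor", "recommendation"),
]

def external_connections(agents: list[Agent]) -> dict[str, list[str]]:
    """List each agent that reaches outside the system and what it talks to."""
    return {a.name: a.external_services for a in agents if a.external_services}

def unmapped_endpoints(agents: list[Agent], flows: list[DataFlow]) -> set[str]:
    """Flow endpoints that do not correspond to any inventoried agent."""
    known = {a.name for a in agents}
    return {end for f in flows for end in (f.source, f.target)} - known
```

A non-empty result from `unmapped_endpoints` indicates that data is flowing to or from something the inventory does not yet cover.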

Step 2: Classify Data

Not all data needs the same level of protection. Classify it by sensitivity: public, internal, or highly sensitive. Then apply controls that match each classification.
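
As a rough illustration, classification can be expressed as a lookup from field to sensitivity level, and from level to required controls. The field names and control names below are assumptions; the three levels come from the text above.

```python
from enum import IntEnum

class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    HIGHLY_SENSITIVE = 2

# Hypothetical field classifications and per-level controls.
FIELD_LEVELS = {
    "product_name": Sensitivity.PUBLIC,
    "ticket_notes": Sensitivity.INTERNAL,
    "card_number": Sensitivity.HIGHLY_SENSITIVE,
}
CONTROLS = {
    Sensitivity.PUBLIC: [],
    Sensitivity.INTERNAL: ["access_control"],
    Sensitivity.HIGHLY_SENSITIVE: ["access_control", "tokenize", "audit_log"],
}

def controls_for(field_name: str) -> list[str]:
    """Look up controls for a field; unclassified fields get the strictest level."""
    level = FIELD_LEVELS.get(field_name, Sensitivity.HIGHLY_SENSITIVE)
    return CONTROLS[level]
```

Defaulting unknown fields to the strictest level means a forgotten classification fails safe rather than open.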

Step 3: Implement Access Controls

Define who and what can access each part of the system. Here is what needs to be done:

- Limit agent permissions

- Avoid over-access

- Regularly review roles

This reduces unnecessary exposure.
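
The three points above can be sketched as an allow-list of tools per agent plus a periodic review that compares grants with actual usage. The agent names, tool names, and both functions are hypothetical.

```python
# Hypothetical grants: tools each agent is permitted to call.
GRANTS = {
    "retriever": {"search_crm", "read_tickets"},
    "actor": {"send_email", "update_ticket", "delete_ticket"},
}

def authorize(agent: str, tool: str) -> None:
    """Raise unless the agent has been explicitly granted the tool."""
    if tool not in GRANTS.get(agent, set()):
        raise PermissionError(f"{agent} is not allowed to call {tool}")

def unused_grants(usage: dict[str, set[str]]) -> dict[str, set[str]]:
    """Compare grants with tools actually used to find candidates for removal."""
    report = {}
    for agent, granted in GRANTS.items():
        unused = granted - usage.get(agent, set())
        if unused:
            report[agent] = unused
    return report
```

Running `unused_grants` against recent usage data is one simple way to make the role review a routine task instead of an occasional audit.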

Step 4: Secure Communication Channels

All communication between agents, tools, and external systems must be protected. Organizations must:

- Use encrypted connections

- Authenticate APIs

- Validate integrations

Secure communication is a prerequisite for safe operations.
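
Encryption in transit is usually handled by TLS. Authentication between agents can additionally be sketched with message signing, as below, using Python's standard `hmac` module. The message fields, the shared key, and the `sign` and `verify` helpers are assumptions; in practice the key would come from a secret manager.

```python
import hashlib
import hmac
import json

def sign(message: dict, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over a canonical JSON encoding of the message."""
    body = json.dumps(message, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()

def verify(message: dict, tag: str, key: bytes) -> bool:
    """Check the tag in constant time to avoid timing side channels."""
    return hmac.compare_digest(sign(message, key), tag)

shared_key = b"demo-key-load-from-a-secret-manager"  # placeholder; never hard-code real keys
msg = {"from": "retriever", "to": "analyst", "task": "summarize"}
tag = sign(msg, shared_key)
```

A receiving agent that calls `verify` before acting will reject messages that were altered in transit or sent by a party without the key.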

Step 5: Monitor and Detect Threats

Continuous monitoring is one of the most underrated yet most important steps for identifying threats before they cause damage. Use real-time alerts, detect anomalies, and respond to threats as quickly as possible. This keeps systems resilient.
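
As a simple example of anomaly detection, the sketch below flags an agent whose activity is far above its recent baseline. The metric (calls per minute), the threshold of three standard deviations, and the `is_anomalous` function are assumptions; production systems usually combine several signals.

```python
from statistics import mean, stdev

def is_anomalous(history: list[int], current: int, threshold: float = 3.0) -> bool:
    """Flag `current` if it is more than `threshold` standard deviations above the mean."""
    if len(history) < 2:
        return False  # not enough data to judge
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current > mu  # flat baseline: any increase stands out
    return (current - mu) / sigma > threshold

calls_per_minute = [12, 15, 11, 14, 13, 12, 16]
```

With this baseline, a reading of 14 calls per minute passes, while a sudden jump to 90 would be flagged for review.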

Step 6: Test and Improve

Security is an ongoing process, and regular testing helps identify gaps in time. Organizations benefit from conducting audits, performing penetration testing, and updating policies at regular intervals.

Strong security in multi-agent AI systems requires constant improvement.

Common Mistakes Organizations Must Avoid

Many organizations underestimate AI-related risks, especially in complex environments. Ignoring these gaps can lead to serious security failures, and even a tiny oversight can quickly scale into a major incident.

- Ignoring prompt injection attacks, which can manipulate agent behavior and outputs

- Skipping monitoring and logging, which makes threats harder to detect

- Granting excessive permissions, which increases the risk of unauthorized access

- Overlooking internal threats from misconfigured systems or users

- Treating security as an afterthought instead of a priority

Addressing these issues early helps reduce vulnerabilities, improve system control, and ensure stronger security features for multi-LLM agent systems in regulated industries over time.

Conclusion

Multi-AI agent systems offer speed, scale, and efficiency, but they also pose risks if not properly secured. Strong security features for multi-LLM agent systems in regulated industries are therefore necessary so that businesses can protect their data, ensure compliance, and build trust.

The solution is simple. Security must be built into the system from the outset, not added later as an afterthought. If you get this right, your AI systems will be not only powerful but also ethical, safe, reliable, and ready for the future.

Frequently Asked Questions

What does security in multi-agent AI systems mean?

Security in multi-AI agent systems means protecting interconnected AI agents, data flows, and integrations from threats such as unauthorized access, prompt injection, and data leakage. It ensures safe, reliable, and compliant operations across enterprise environments.

What are the common risks in multi-agent AI systems?

Common risks in multi-AI agent systems include prompt injection attacks, data leakage, unauthorized access, insecure APIs, and agent manipulation. Without proper security, these risks can disrupt entire enterprise workflows, expose sensitive data, and compromise decision-making processes.

How can organizations improve security in Multi-AI agent systems?

Organizations can improve security by implementing access controls, encrypting data, monitoring agent activity, validating inputs, and using secure APIs. Together, these measures provide strong protection across all layers of multi-agent AI systems and reduce potential vulnerabilities.

Does adding security slow down the AI agents?

Only if you use legacy tools. Modern security solutions are built to be AI-native. They use real-time tokenization and context-preserving masking, which are designed to let agents work at full speed without losing accuracy or reasoning power.