Synthetic data for AI development has become the default shortcut for most engineering teams. It’s fast, sidesteps privacy headaches, and lets you move without touching production. I get why teams default to it.

But there’s a problem: synthetic data for AI routinely breaks down the moment your system hits real-world enterprise data. The system demos great. It passes every internal test. Then it lands in production and falls apart in ways you didn’t see coming.

This post breaks down exactly why that happens — and what to do instead.

Traditional software follows rules. You check inputs, check outputs, file a bug when something breaks. Synthetic data has been fine for testing that kind of system for decades — because the system itself is rule-bound.

AI is different. AI gets deployed into situations where the rules aren’t clear and context is everything. The edge cases aren’t exceptions — they’re the whole point.

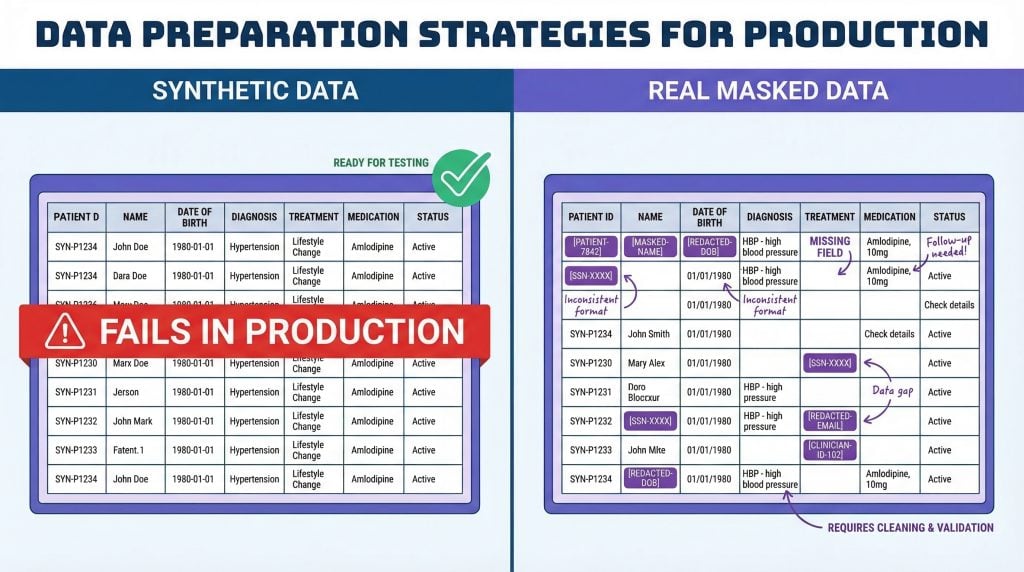

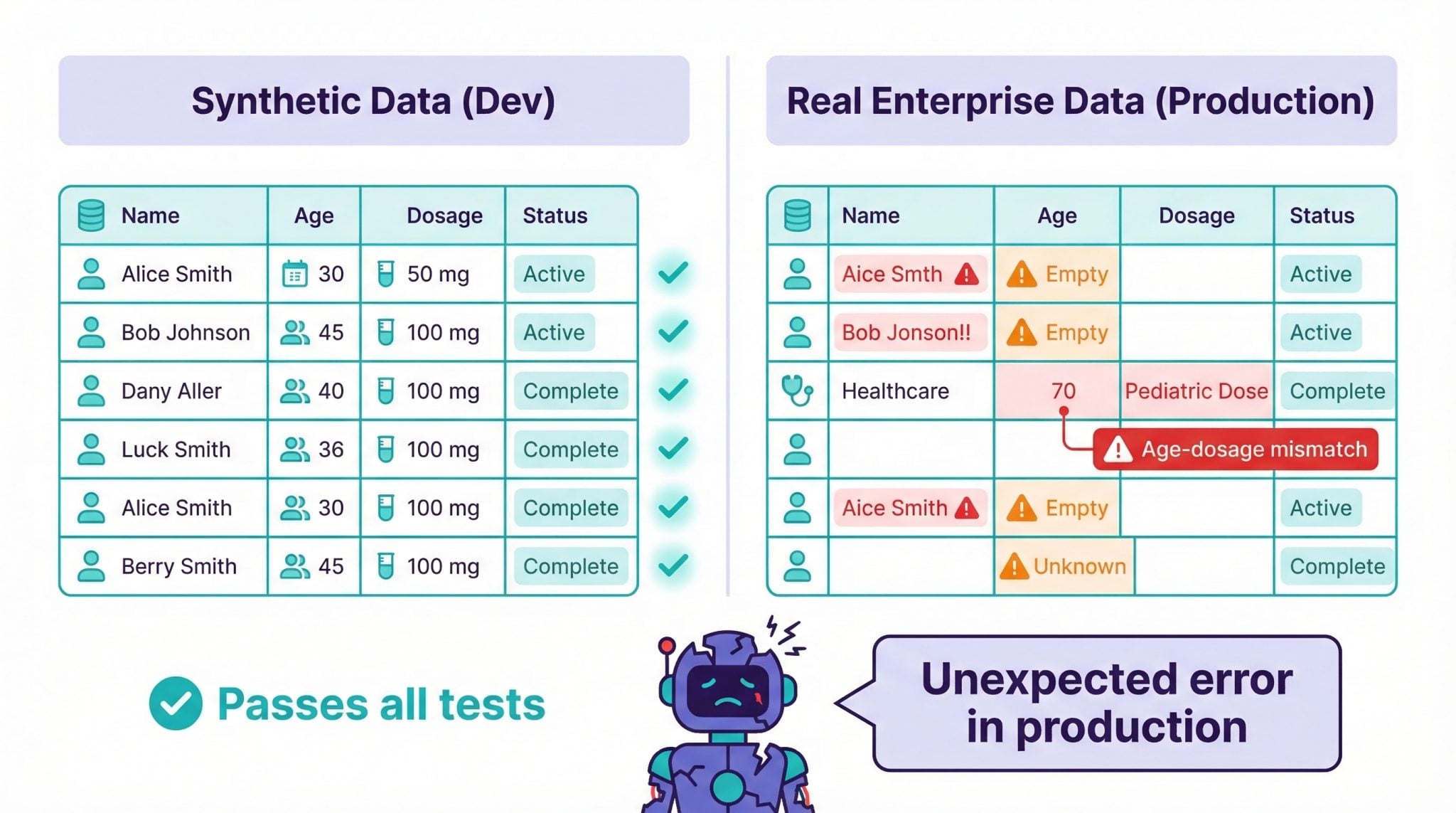

Most teams building AI right now face a quiet choice: use synthetic data for AI testing, or use real enterprise data that’s been properly masked. Synthetic wins on convenience. But I keep seeing the same thing play out at enterprises shipping AI into real workflows. The dev environment is clean. The demos are smooth. Then production hits and the model fails on the exact cases it was supposed to handle.

That’s not a fluke. It’s structural. Here’s why.

Why Synthetic Data for AI Misses Real-World Messiness

Enterprise data is full of garbage. Documents have typos. Fields are half-filled. Formats shift between departments, between years, between whoever was entering records on a random Tuesday afternoon.

Synthetic data generators smooth all of that away. They produce statistically plausible records that look fine row by row — but completely miss the texture that makes real data hard to work with.

Healthcare is the clearest example. A synthetic patient dataset gives you clean records with reasonable values in every field. Real patient data has age-dosage mismatches, incomplete diagnostic codes, and free-text notes that directly contradict the structured fields. Those aren’t bugs. That’s what your AI will actually face when it goes live. If your AI has never seen any of that messiness, you haven’t tested it — you’ve tested a simulation of it.

The same is true in financial services. Loan records with partial histories. Contracts where the clause in section 4 quietly overrides section 7. Invoices where the line items don’t add up to the total. Synthetic AI test data doesn’t surface any of this, because it was generated to be internally consistent. Real data wasn’t.
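One way to make this concrete is to measure how often a basic consistency rule fails in each dataset. The sketch below is illustrative only: the `Invoice` shape, the one-cent tolerance, and the sample records are assumptions, not a real schema. A generated dataset will typically score zero on a check like this, while real invoices usually won’t.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class Invoice:
    # Hypothetical invoice shape, used only for this illustration.
    invoice_id: str
    line_items: list[Decimal]
    stated_total: Decimal


def total_mismatch(invoice: Invoice, tolerance: Decimal = Decimal("0.01")) -> bool:
    """Return True when the line items do not add up to the stated total."""
    computed = sum(invoice.line_items, Decimal("0"))
    return abs(computed - invoice.stated_total) > tolerance


def mismatch_rate(invoices: list[Invoice]) -> float:
    """Fraction of invoices whose line items disagree with the total."""
    if not invoices:
        return 0.0
    return sum(total_mismatch(inv) for inv in invoices) / len(invoices)


# Made-up sample records: the "synthetic" set is internally consistent,
# the "real-like" set contains one invoice that does not add up.
synthetic = [
    Invoice("S-1", [Decimal("40.00"), Decimal("60.00")], Decimal("100.00")),
    Invoice("S-2", [Decimal("19.99")], Decimal("19.99")),
]
real_like = [
    Invoice("R-1", [Decimal("40.00"), Decimal("60.00")], Decimal("100.00")),
    Invoice("R-2", [Decimal("19.99"), Decimal("5.00")], Decimal("19.99")),
]

print(mismatch_rate(synthetic))  # 0.0
print(mismatch_rate(real_like))  # 0.5
```

If the rate on your test set is zero and the rate in production is not, the test set is not exercising the cases your system will see.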

The Broken Relationship Problem in AI Test Data

This is the one that burns engineering teams the hardest, and it’s also the least obvious.

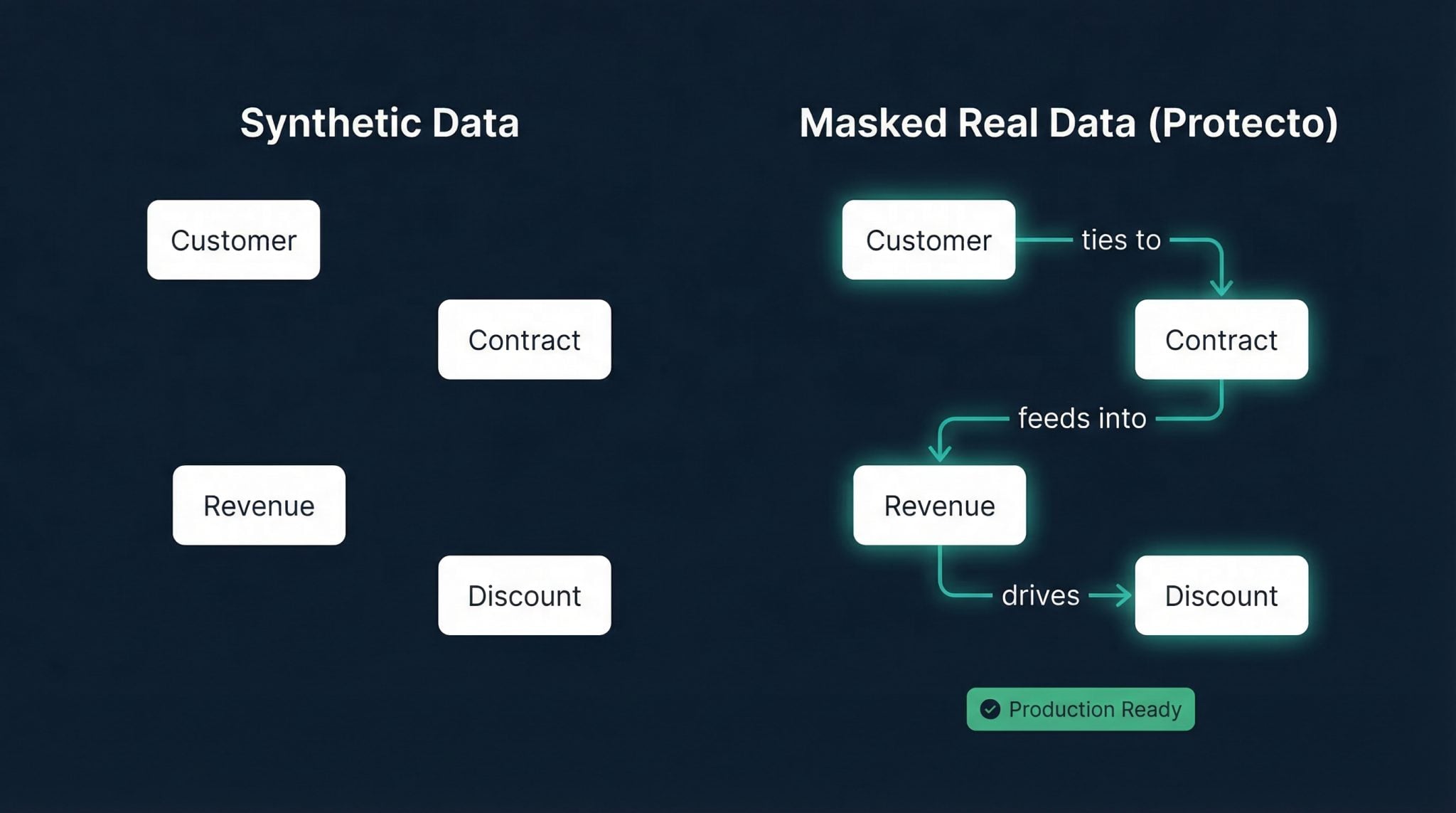

Enterprise data is deeply connected. Customer revenue ties to contract size. Contract size ties to discount rules. Discount rules feed into lifetime value calculations. Pull on one thread and everything shifts.

Synthetic generators typically produce values independently, or with simplified statistical models at best. Check one record at a time and it looks fine. But the relationships between fields quietly fall apart. You end up with discount percentages that violate pricing policies. Revenue figures that can’t coexist with the contract terms. Lifetime values that exist nowhere in reality.

When an AI agent reasons over this kind of AI test data, it inherits those broken relationships. The decisions it produces are wrong in ways you can’t easily trace back — because every individual record looked fine. The bug isn’t in any one row. It’s in the space between rows, and synthetic data generators have no reliable way to preserve that.

For RAG systems this is especially damaging. When a retrieved document has internally inconsistent figures, your model doesn’t know that. It reasons over the inconsistency as if it were ground truth.
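A cheap way to catch this before an agent reasons over the data is to validate the relationships between fields, not the fields themselves. The following is a minimal sketch under stated assumptions: the discount tiers, the `Account` fields, and the 1% tolerance are all hypothetical stand-ins for whatever your actual pricing policy says.

```python
from dataclasses import dataclass

# Hypothetical pricing policy: (upper bound on contract value, max discount).
MAX_DISCOUNT_BY_TIER = [
    (100_000, 0.05),
    (1_000_000, 0.15),
    (float("inf"), 0.25),
]


@dataclass
class Account:
    # Hypothetical record shape for illustration.
    account_id: str
    contract_value: float
    discount_pct: float  # 0.10 means 10%
    booked_revenue: float


def max_discount(contract_value: float) -> float:
    """Look up the policy cap for a given contract size."""
    for upper_bound, cap in MAX_DISCOUNT_BY_TIER:
        if contract_value < upper_bound:
            return cap
    return MAX_DISCOUNT_BY_TIER[-1][1]


def relationship_violations(account: Account, rel_tol: float = 0.01) -> list[str]:
    """Check cross-field rules; each field can look valid on its own and still fail here."""
    problems = []
    if account.discount_pct > max_discount(account.contract_value):
        problems.append("discount exceeds policy cap")
    expected_revenue = account.contract_value * (1 - account.discount_pct)
    if abs(account.booked_revenue - expected_revenue) > rel_tol * max(expected_revenue, 1.0):
        problems.append("revenue inconsistent with contract terms")
    return problems


consistent = Account("A-1", 50_000, 0.04, 48_000)
broken = Account("A-2", 50_000, 0.20, 61_000)  # every field is plausible in isolation

print(relationship_violations(consistent))  # []
print(relationship_violations(broken))
```

Running the same checks over a synthetic set and a masked real set gives you a rough count of how many records each one gets wrong at the relationship level.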

Edge Cases Are Where AI Has to Perform

In traditional software, a missed edge case gets you a bug ticket. In AI, a missed edge case means the system fails in the exact situation where it was supposed to help.

A contract written under unusual legal terms. A financial transaction sitting right at a compliance threshold. A healthcare case with overlapping conditions that don’t fit any standard category.

These situations are rare in any dataset. They’re also exactly where your AI has to perform, because those are the hard cases people hand to an automated system expecting real help.

Synthetic data optimizes for the average. Rare scenarios get dropped or simplified because they’re statistically uncommon. You end up with a system that passes every test you throw at it, then fails on the cases that actually matter.

The deeper problem: you won’t always know what edge cases you’re missing. Synthetic AI test data doesn’t show you the gaps — it hides them behind a clean pass/fail on generated records.
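You can at least surface some of the gaps by comparing which scenario types appear in each dataset. This is a rough sketch, assuming you already have a scenario label per record; the label names and the 5% rarity threshold are made up for the example.

```python
from collections import Counter


def missing_rare_cases(real_labels, synthetic_labels, rare_threshold=0.05):
    """List scenario labels that are rare in the real data and absent from the synthetic data."""
    total = len(real_labels)
    if total == 0:
        return []
    real_counts = Counter(real_labels)
    synthetic_seen = set(synthetic_labels)
    return sorted(
        label
        for label, count in real_counts.items()
        if count / total <= rare_threshold and label not in synthetic_seen
    )


# Hypothetical scenario labels for 100 records in each dataset.
real = (
    ["standard"] * 95
    + ["threshold_transaction"] * 3
    + ["overlapping_conditions"] * 2
)
synthetic = ["standard"] * 98 + ["threshold_transaction"] * 2

print(missing_rare_cases(real, synthetic))  # ['overlapping_conditions']
```

This only finds gaps in categories you have already labeled. It won’t reveal edge cases nobody has named yet, which is the harder half of the problem.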

RAG and Agents Make Synthetic Data Worse

When AI systems use enterprise data for live reasoning, the stakes go up fast.

In RAG pipelines, retrieved documents become the context for model answers. With agent architectures, enterprise data drives planning and automated actions — scheduling, drafting, executing workflows without a human in the loop.

If the underlying data is synthetic, your reasoning environment is artificially clean. Your agent looks reliable in dev because it’s never dealt with a messy contract, an ambiguous invoice, or a compliance document with conflicting clauses. Put it in front of real enterprise data and it stumbles in ways you didn’t predict. Debugging that is expensive. Explaining it to stakeholders is worse.

There’s also a compounding effect. Agents that make decisions based on bad synthetic AI test data can take those errors further downstream. An automated compliance check that passes on synthetic data may flag (or miss) something entirely different when real PII-laden records enter the pipeline.

The Compliance and PII Masking Liability You’re Ignoring

Here’s something that doesn’t get discussed enough in engineering circles.

In regulated industries — healthcare, financial services, insurance — the quality of your test data isn’t just an engineering concern. It’s a legal one.

Say you trained and validated your AI on synthetic data that didn’t capture real-world complexity. That system then makes a decision affecting a patient or a customer. You now have a problem that goes well beyond a production incident. Regulators are starting to ask how AI systems were validated before deployment. “We tested on synthetic data” is a weak answer when the system missed something that real data would have caught — and when there’s documented harm.

PII masking is where this becomes concrete. If your test environment uses real data and that data isn’t properly masked, you’re already in violation territory in most regulated environments. But if you use synthetic data to avoid that problem, you’re trading a compliance risk for a quality risk that shows up in production.

Neither outcome is good. The answer isn’t to pick the lesser evil — it’s to handle PII masking correctly so you can use real data safely.

Synthetic Data vs Real Data for AI: A Side-by-Side Breakdown

Here’s how synthetic data vs real data actually compares across the dimensions that matter for AI development:

| Dimension | Synthetic Data | Masked Real Data |
|---|---|---|
| Edge case coverage | Poor: rare scenarios get dropped or smoothed | Strong: real data includes the outliers that break AI |
| Data relationship integrity | Weak: fields generated independently | Preserved: relationships between fields remain intact |
| Privacy risk | None | Low: PII replaced with consistent tokens |
| Regulatory acceptance | Unclear: often insufficient for validation in regulated industries | Strong: real data provenance is documented and auditable |
| Messiness / noise | Absent: synthetic data is artificially clean | Present: typos, gaps, format inconsistencies included |
| AI agent reliability in production | Low: agent behavior doesn’t transfer | High: model trained on actual enterprise data patterns |
| Time to generate | Fast | Requires masking pipeline setup, then fast |
| Suitable for early experiments | Yes | Overkill for quick prototypes |
| Suitable for production validation | No | Yes |

The honest summary: synthetic data vs real data isn’t a fair fight once you’re past the prototype stage. Synthetic is fine for early exploration. For anything going into production — especially in agentic or RAG workflows — you need data that started in the real world.

Data Masking for AI: Use Real Data Without the Privacy Risk

The obvious objection is that real production data carries privacy and security risk. You can’t hand it to a dev team or pipe it into a model. That’s fair, and it’s a real constraint, not an excuse.

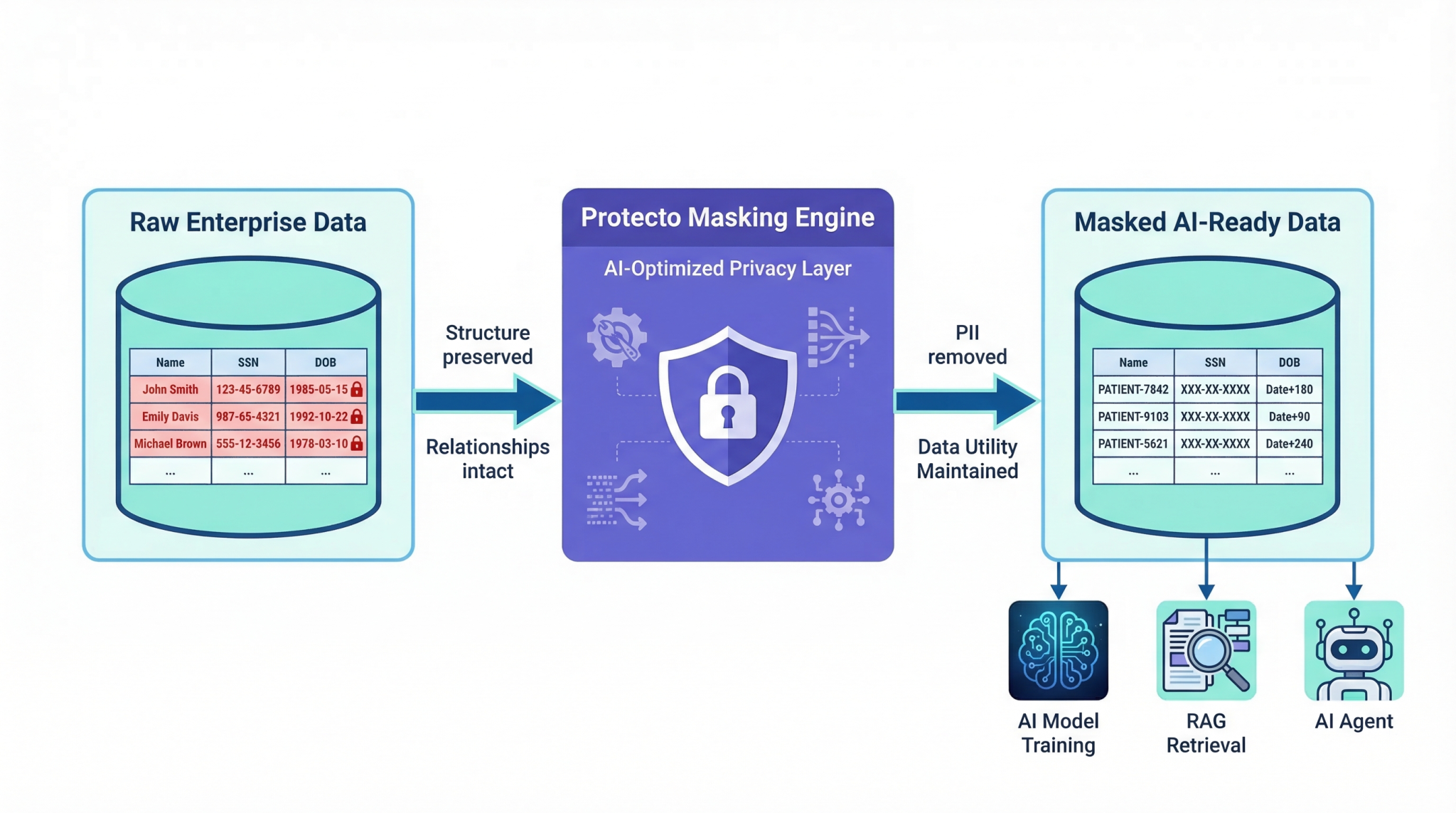

But data masking for AI solves exactly this problem. The idea is straightforward: replace sensitive fields with consistent tokens that preserve the structure and relationships the AI depends on, without exposing the underlying PII.

Here’s what that looks like in practice. A patient record might have a name, a date of birth, an SSN, and a diagnostic history. After masking, the name becomes a consistent token such as “Patient-7842”, and that same token appears wherever that patient’s name would appear across every related document. The SSN is replaced. The date of birth is shifted by a fixed offset. The diagnostic history is left intact: it is the clinical content the AI needs, and with the direct identifiers tokenized it is no longer tied to a named person.

What the AI gets is a record that behaves exactly like the original — the same relationships, the same field distributions, the same edge cases — without any real personal data in the system. The model trains on something that represents the real world accurately.
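To show the mechanics, here is a minimal sketch of consistent tokenization. It is not Protecto’s implementation: the keyed-hash approach, the `SECRET_KEY`, the 30-day shift, and the record field names are all assumptions chosen for illustration. The tokens are hex strings rather than the four-digit style of “Patient-7842”, which keeps accidental collisions unlikely.

```python
import hashlib
import hmac
from datetime import date, timedelta

# Assumptions for this sketch: in practice the key would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"
DATE_SHIFT = timedelta(days=-30)  # one fixed offset, so intervals between dates survive


def token_for(value: str, prefix: str) -> str:
    """Map a sensitive value to a stable token; the same input always yields the same token."""
    normalized = value.strip().lower().encode("utf-8")
    digest = hmac.new(SECRET_KEY, normalized, hashlib.sha256).hexdigest()
    return f"{prefix}-{digest[:10]}"


def mask_record(record: dict) -> dict:
    """Tokenize direct identifiers, shift the birth date, and leave other fields alone."""
    masked = dict(record)
    masked["name"] = token_for(record["name"], "Patient")
    masked["ssn"] = token_for(record["ssn"], "SSN")
    shifted = date.fromisoformat(record["date_of_birth"]) + DATE_SHIFT
    masked["date_of_birth"] = shifted.isoformat()
    return masked


# Two hypothetical documents that mention the same patient with different casing.
intake = {"name": "Jane Doe", "ssn": "123-45-6789",
          "date_of_birth": "1985-06-20", "diagnoses": ["E11.9"]}
followup = {"name": "jane doe ", "ssn": "123-45-6789",
            "date_of_birth": "1985-06-20", "diagnoses": ["E11.9", "I10"]}

masked_intake = mask_record(intake)
masked_followup = mask_record(followup)

print(masked_intake["name"] == masked_followup["name"])  # True
print(masked_intake["date_of_birth"])  # 1985-05-21
```

Because the token is derived from the value with a secret key, two documents that mention the same person end up with the same token without sharing a lookup table. A production system would also need key management and a policy for who, if anyone, can reverse the mapping.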

This is what Protecto handles. We mask and tokenize sensitive information while keeping the structural integrity that AI depends on. Names, identifiers, financial values get replaced with consistent tokens that behave the same way across documents, databases, and workflows — so your AI test data is safe to use in any environment, without sacrificing the messiness and complexity that makes it useful.

Effective data masking for AI should preserve:

- Field relationships — Revenue tied to contract size, diagnostic codes tied to patient history

- Statistical distributions — Outliers and edge cases present in the original data

- Cross-document consistency — The same entity is the same token everywhere

- Format fidelity — Masked dates still look like dates; masked account numbers still look like account numbers

Without all four, you end up with masked data that AI models treat differently from real data — which mostly defeats the point.
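Two of these properties, format fidelity and cross-document consistency, are easy to test automatically. The sketch below assumes a deliberately simple digit-substitution masker for identifiers like account numbers. It is a stand-in for illustration, not a standards-based format-preserving encryption scheme, and it is not suitable for dates, where swapping digits could produce an invalid calendar date. The key and function names are hypothetical.

```python
import hashlib
import hmac

KEY = b"replace-with-a-managed-secret"  # assumption: a real key would be managed elsewhere
DIGITS = "0123456789"


def mask_digits(value: str) -> str:
    """Replace each digit with a keyed pseudo-random digit, keeping length and punctuation."""
    stream = hmac.new(KEY, value.encode("utf-8"), hashlib.sha256).digest()
    out = []
    position = 0
    for ch in value:
        if ch in DIGITS:
            out.append(str(stream[position % len(stream)] % 10))
            position += 1
        else:
            out.append(ch)
    return "".join(out)


def shape(value: str) -> str:
    """Reduce a string to its format: every digit becomes 9, everything else is kept."""
    return "".join("9" if ch in DIGITS else ch for ch in value)


def consistent_mapping(pairs) -> bool:
    """True when each original value maps to exactly one masked value across all documents."""
    seen = {}
    for original, masked in pairs:
        if seen.setdefault(original, masked) != masked:
            return False
    return True


account = "ACCT-4111-2222"
masked_a = mask_digits(account)  # as it appears in one document
masked_b = mask_digits(account)  # as it appears in another

print(shape(masked_a) == shape(account))  # True: still looks like an account number
print(consistent_mapping([(account, masked_a), (account, masked_b)]))  # True
```

Checks like `shape` and `consistent_mapping` fit naturally into a test suite that runs every time the masking pipeline changes.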

AI Test Data Best Practices for MLOps Pipelines

If you’re building out an AI testing strategy, here’s what actually works:

- Use synthetic data for early development only. When you’re exploring model architecture, testing pipeline plumbing, or building internal tooling, synthetic data is fine. The risk is low and the speed is high.

- Switch to masked real data before any production validation. Once you’re evaluating a model for deployment, your AI test data needs to come from real enterprise sources. That’s the only way to surface the edge cases, broken relationships, and noise that synthetic data can’t replicate.

- Build a data masking pipeline early. Don’t treat masking as a one-off task. Build it into your MLOps workflow so masked data is always available on demand. This prevents teams from reverting to synthetic data under time pressure.

- Track data provenance. Document where your training and test data came from, what masking was applied, and when. Regulators are starting to ask for this. Having it ready is table stakes in healthcare and financial services.

- Test on PII masking edge cases specifically. Names that appear in unusual contexts (email signatures, embedded in contracts, referenced in notes) should be masked consistently. Test for this explicitly — it’s where most masking implementations break down.

- Audit model behavior on real vs. synthetic data. If you’ve been using synthetic AI test data and you’re introducing masked real data, run parallel evaluations. The performance difference will tell you how much your synthetic baseline was misleading you.
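The parallel evaluation in the last item can be as simple as scoring the same model on both datasets and reporting the difference. This is a toy sketch: the threshold “model”, the example records, and the plain accuracy metric are assumptions for illustration, and you would substitute your own model and metric.

```python
def accuracy(predict, examples):
    """Share of (features, label) examples the model gets right."""
    if not examples:
        raise ValueError("examples must not be empty")
    correct = sum(1 for features, label in examples if predict(features) == label)
    return correct / len(examples)


def evaluation_gap(predict, synthetic_examples, masked_real_examples):
    """Score one model on both datasets; a large positive gap means the synthetic score was flattering."""
    synthetic_score = accuracy(predict, synthetic_examples)
    real_score = accuracy(predict, masked_real_examples)
    return {
        "synthetic": synthetic_score,
        "masked_real": real_score,
        "gap": synthetic_score - real_score,
    }


def toy_predict(features):
    """Hypothetical model: flag a transaction for review when the amount exceeds 10,000."""
    return (features.get("amount") or 0) > 10_000


# Hypothetical labeled examples: (features, should_be_flagged).
synthetic_examples = [
    ({"amount": 500}, False),
    ({"amount": 20_000}, True),
]
masked_real_examples = [
    ({"amount": 500}, False),
    ({"amount": 20_000}, True),
    ({"amount": None, "note": "approx 25k, see attachment"}, True),  # missing field
    ({"amount": 10_000}, True),  # sits exactly at the threshold
]

print(evaluation_gap(toy_predict, synthetic_examples, masked_real_examples))
# {'synthetic': 1.0, 'masked_real': 0.5, 'gap': 0.5}
```

The two failures in the masked real set are exactly the kinds of record a generator tends to leave out: a half-filled field and a value sitting on a boundary.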

The Bottom Line

Synthetic data for AI is a reasonable starting point. It’s not a finishing point.

The gap between synthetic AI test data and masked real data isn’t theoretical — it shows up in production, in the cases that actually matter, in the environments where AI decisions have real consequences. Engineering teams that ship AI into enterprise workflows without making that transition are shipping something that hasn’t been tested against the world it’s going to live in.

You don’t have to choose between synthetic data that lacks depth and raw data that carries risk. Data masking for AI lets you work with real-world data safely — messiness, edge cases, PII, and all — so your AI is tested against the environment it’ll actually operate in.

There’s no shortcut around that. The question is just when you make the switch.