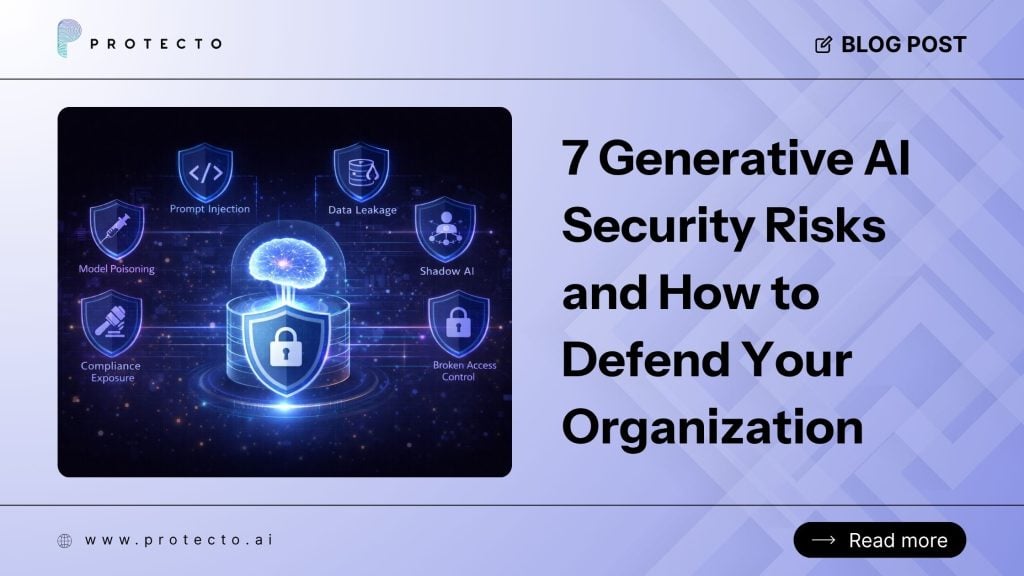

Generative AI creates new attack surfaces that traditional security tools were not designed to address. The biggest generative AI security risks include prompt injection, data leakage, shadow AI, compliance exposure, model poisoning, insecure RAG pipelines, and broken access control. Each one requires a specific defense, not a generic firewall or DLP rule.

Key Takeaways

- 97% of companies are already reporting GenAI security issues and breaches; this is not a future problem.

- Traditional DLP, RBAC, and firewalls were not designed for how AI moves and transforms data.

- Prompt injection and data leakage are the two most immediate risks for most security teams.

- Access control must happen at inference time, not just at the data source.

- Identifying and mitigating the security risks of generative AI starts with understanding where your data actually goes inside an AI pipeline.

- Purpose-built AI security controls are faster and more accurate than building in-house.

Why Generative AI Security Is a Different Problem

Most enterprise security stacks were built around a simple idea: draw a perimeter, control who crosses it.

Generative AI breaks that model. It does not sit neatly inside your perimeter. It reads data from multiple sources, rewrites it into prompts, passes it through third-party APIs, and generates outputs that none of your existing rules can inspect properly.

Traditional DLP fails at inference. It may scan two separate lists, one of names and one of medical conditions, and find them harmless in isolation. But an AI can rephrase the document to explicitly link the two, creating a HIPAA violation that a pattern-based filter cannot detect.

That is the core problem. The data changes form. The sensitivity does not.

Here are the seven generative AI security risks your team needs to understand right now.

-

Prompt Injection

Prompt injection is one of the most well-documented security risks of generative AI, and one of the least understood by traditional security teams.

It occurs when a malicious actor embeds instructions within content that an AI model processes. The model follows those instructions as if they came from a trusted source. The result can range from data exfiltration to complete loss of agent control.

This is not theoretical. In mid-2025, the EchoLeak vulnerability demonstrated a zero-click prompt injection against Microsoft 365 Copilot, enabling enterprise data exfiltration without any user interaction.

How to defend against it: Inspect every prompt before it reaches the model. Use an intermediary layer that detects and strips injected instructions. For agentic workflows, limit what actions an agent can take, even if its instructions are compromised. Protecto’s GPTGuard for generative AI pipelines does exactly this, sitting between your data and the model to filter harmful or manipulated inputs before they cause damage.

-

Sensitive Data Leakage into AI Pipelines

This is the most common generative AI security risk in today’s production environments.

When employees or systems feed AI models with real customer data, medical records, financial information, or internal documents, that data can end up in model outputs, logs, third-party API calls, or fine-tuning datasets.

39% of organizations have already experienced an AI-related near-miss involving unintended data exposure. Most teams still lack visibility into what data is actually flowing through their AI pipelines.

How to defend against it: Mask or tokenize sensitive data before it enters the AI layer. The key is doing this without sacrificing model accuracy, which requires context-preserving tokenization rather than simple redaction. Protecto’s AI data privacy vault detects and masks PII and PHI in real time while preserving semantic meaning, so AI outputs remain useful.

A leading SaaS company used this approach to process 13 million texts daily for AI training, achieving a 90% reduction in data masking costs with no loss in model performance. Read the full case study here.

-

Shadow AI

Shadow AI refers to AI tools deployed by employees or business units without IT or security involvement.

44% of organizations that use AI struggle with business units deploying AI solutions without involving security teams. An equal share report unauthorized use of generative AI by employees.

The security risk here is straightforward. When someone pastes a confidential contract or a customer record into an unauthorized AI tool, you have no visibility, no audit trail, and no control over where that data goes.

How to defend against it: Build a sensitive data discovery process for your data lakes and pipelines so you know what data exists and where it is moving. Pair that with policy enforcement that works at the prompt level, not just at the network edge.

-

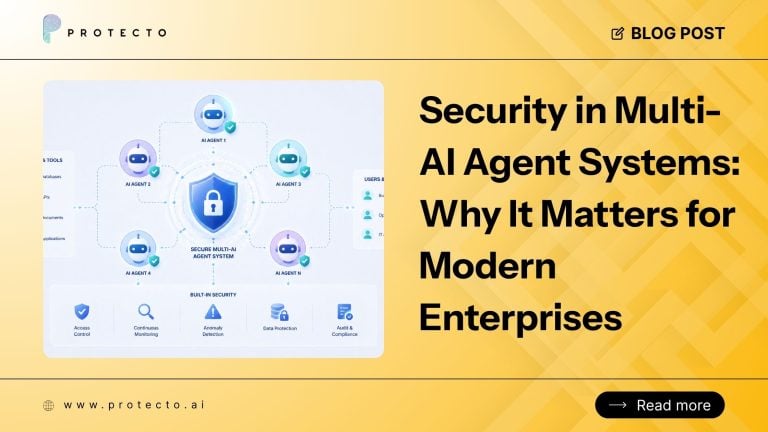

Broken Access Control for AI Agents

Traditional role-based access control was built for humans using apps. It was not built for AI agents that chain actions, call APIs, and access data dynamically across systems.

Only 7% of organizations have reached advanced AI governance maturity with real-time policy enforcement. That means 93% are running AI with access controls that were not designed for it.

The specific risk: an AI agent may be granted broad access to complete a task, but nothing stops it from reading, summarizing, or exfiltrating data it was never intended to touch.

How to defend against it: Move from static RBAC to context-aware access control that makes decisions at inference time, based on who is asking, what they are doing, and why. Protecto’s context-based access control for AI agents enforces these decisions dynamically, so a sales agent cannot access support data, and an analyst only sees anonymized aggregates.

A Fortune 100 enterprise used this approach to enforce zero-trust data policies across multi-agent AI workflows for over 50,000 users, without adding latency or breaking AI reasoning. Read the full case study here.

-

Compliance Exposure Across Borders

By 2027, more than 40% of AI-related data breaches will be caused by improper cross-border use of generative AI, according to Gartner. The reason is simple. Most AI tools are hosted in centralized cloud environments that may sit outside the data jurisdiction your customers or regulators require.

GDPR, HIPAA, PDPL, and DPDP all assume you know where data lives and how it is used. Generative AI reads, transforms, and moves data across systems in seconds. Your audit trail goes dark.

This is one of the most underestimated security risks posed by generative AI in regulated industries such as banking, healthcare, and government.

How to defend against it: Require data sovereignty controls for every AI deployment. That means knowing exactly where data is processed, proving it, and having the option to run models on-premises or within a private VPC. A leading bank in the Middle East used Protecto to deploy Gemini in compliance with strict PDPL laws, achieving 100% compliance with zero data egress to the public cloud in just three weeks. Read the full AI security case study for financial services.

-

Insecure RAG Pipelines

Retrieval-Augmented Generation (RAG) is now the standard way enterprises connect AI models to internal knowledge bases. But most RAG implementations have a serious blind spot: they do not enforce access control at the retrieval layer.

If a low-privilege user queries an AI assistant powered by a RAG pipeline, there is often nothing stopping that pipeline from surfacing documents the user was never supposed to see. The model retrieves, summarizes, and presents the information without checking permissions.

This is a growing and specific security risk of generative AI that many security teams are not yet focused on.

How to defend against it: Apply masking and access policies at the point of data retrieval, not just at the model output. Protecto’s secure RAG pipeline solution ensures that what is retrieved matches what the requesting user is actually permitted to see, and that this is enforced in real time.

-

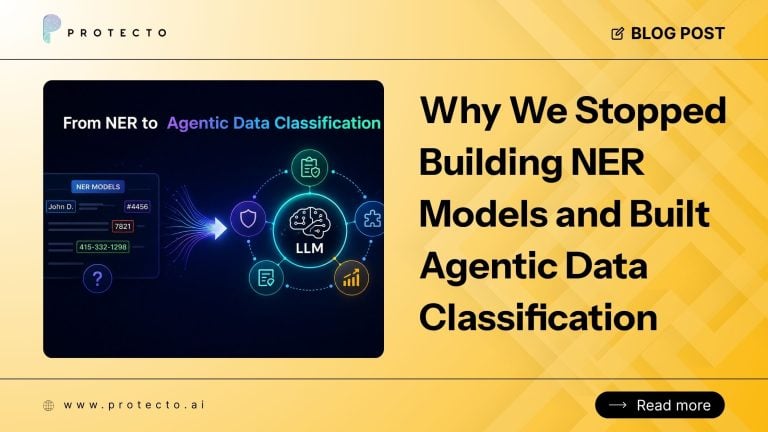

Model Poisoning and Training Data Risks

If your organization is fine-tuning models on internal data or using AI outputs to feed back into training pipelines, you have a model poisoning exposure.

Poisoning occurs when malicious or sensitive data is introduced into a training dataset, causing the model to behave in harmful or biased ways or to leak information embedded during training. This is one of the harder generative AI security risks to detect because the damage is baked into the model itself rather than a single transaction.

The OWASP GenAI Data Security Risks and Mitigations 2026 guide identifies training data security as one of the most critical and underaddressed areas in enterprise AI deployments.

How to defend against it: Scan and de-identify training data before it enters any fine-tuning process. Use AI data pipeline security controls to detect PII, PHI, and confidential business data in unstructured text before it is ingested. This also protects against inadvertent exposure of intellectual property when models are trained on proprietary documents.

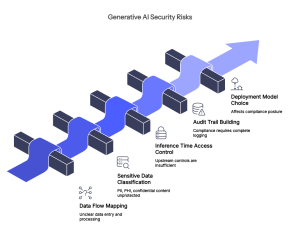

Identifying and Mitigating the Security Risks of Generative AI: Where to Start

Identifying and mitigating the security risks of generative AI does not require replacing your entire security stack. It requires adding a purpose-built layer that understands how AI actually handles data.

Here is a practical starting point for security teams:

Step 1: Map your AI data flows. Know what data enters your AI systems, from where, and what happens to it. Most organizations cannot answer this question yet.

Step 2: Classify sensitive data at the source. Before any data touches an AI model, it should be scanned for PII, PHI, and confidential content. Protecto automatically detects 200+ sensitive data types across prompts, documents, and outputs.

Step 3: Enforce access at inference time. Do not rely solely on upstream controls. Policies need to apply when an AI agent or model requests data.

Step 4: Build your audit trail. Compliance is not possible without a complete log of who accessed what, when, and why for every AI interaction.

Step 5: Choose between SaaS, private VPC, or on-prem. Your deployment model affects your compliance posture. Know which one your regulations require before you ship AI to production.

FAQ

What are the most common generative AI security risks in 2026?

The most common are prompt injection, sensitive data leakage, shadow AI, broken access control for agents, and compliance exposure from cross-border data processing. Most organizations are dealing with several of these at the same time.

Why do traditional DLP tools fail with generative AI?

Traditional DLP scans for known patterns in fixed data. Generative AI transforms data dynamically, rephrasing, summarizing, and translating it in ways that pattern-based tools cannot follow. A DLP rule looking for “patient name” will miss it when an AI model rewrites it into a clinical summary.

What is the difference between RBAC and CBAC for AI security?

RBAC controls who can access a data source. CBAC controls what data an AI agent can see at the moment it asks, based on role, context, and purpose. For agentic AI, RBAC alone is not enough. Learn more about AI agent access control and how CBAC works.

How does prompt injection work, and can it be prevented?

Prompt injection embeds malicious instructions into content that an AI model processes, tricking the model into following attacker-controlled commands. It can be significantly reduced with prompt inspection layers, strict output validation, and limiting agent permissions so that even a compromised prompt cannot cause major damage.

Is generative AI safe to use in healthcare and financial services?

Yes, but only with the right controls in place. Both industries have strict data regulations that AI pipelines must be designed around from day one. Protecto works with HIPAA-compliant AI deployments for healthcare and with banks operating under PDPL, GDPR, and SAMA frameworks. The key is ensuring data never leaves its required jurisdiction and is masked before it enters any AI layer.

How do I start identifying and mitigating the security risks of generative AI in my organization?

Start by mapping your AI data flows, then classify sensitive data at the source, enforce access at inference time, and build a complete audit trail. If you want a faster path, book a demo with Protecto to see how the platform fits into your existing AI stack in under a week.