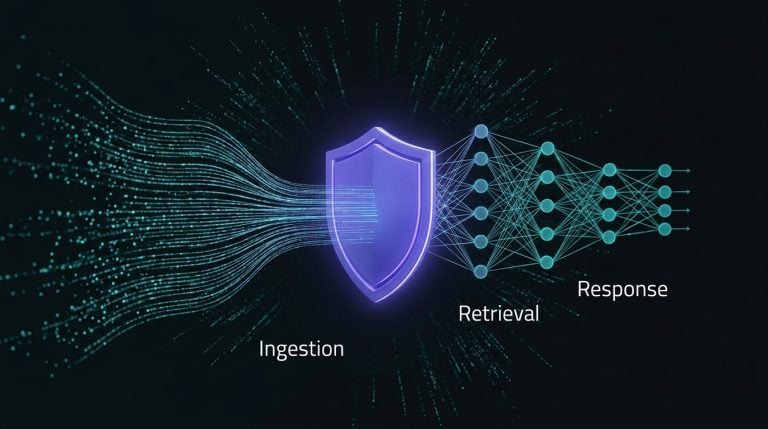

Organizations adopting Artificial Intelligence (AI) and Large Language Models (LLMs) must balance innovation against data protection. In this post, we’ll explore key strategies for securing AI and LLM data and highlight the trade-offs each one involves.

Anonymization and Pseudonymization: The Trade-Off Between Privacy and Accuracy

Anonymization and pseudonymization are fundamental techniques for protecting sensitive data. Anonymization removes identifying information irreversibly, while pseudonymization replaces it with stand-in tokens that can be linked back to the original only with separately held information, such as a key or lookup table. Either way, organizations reduce the risk of exposing personal information. However, the effectiveness of these techniques depends on selecting the appropriate data masking methods.

If not done correctly, data masking can significantly degrade the accuracy of LLMs. Therefore, it’s crucial to strike a balance, choosing masking techniques that protect privacy without compromising the LLM’s performance.
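To make the trade-off concrete, here is a minimal sketch in Python that masks email addresses in two ways. It is an illustration under stated assumptions, not a production approach: the regular expression, the `SECRET_KEY` value, and the function names are all hypothetical, and real systems typically need to cover many more identifier types than email.

```python
import hashlib
import hmac
import re

# Hypothetical key for illustration only; a real key belongs in a secrets manager.
SECRET_KEY = b"demo-key-rotate-me"

# Simplified email pattern (an assumption; it will not catch every valid address).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def _email_token(match):
    """Map an email to a stable token using a keyed hash (HMAC-SHA256)."""
    email = match.group(0).lower().encode("utf-8")
    digest = hmac.new(SECRET_KEY, email, hashlib.sha256).hexdigest()
    return f"<EMAIL_{digest[:8]}>"


def pseudonymize(text):
    """Replace each email with a consistent token, so the same person
    still appears as the same entity to the model."""
    return EMAIL_RE.sub(_email_token, text)


def anonymize(text):
    """Replace every email with one generic placeholder. This is more
    private, but the model can no longer tell people apart."""
    return EMAIL_RE.sub("<EMAIL>", text)
```

With `pseudonymize`, two mentions of the same address map to the same token, which preserves context the LLM may need to reason about who did what. With `anonymize`, that link is gone, which is safer but can reduce answer quality.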

Implementing Security Guardrails

As LLMs become more sophisticated, so do the methods for circumventing their security protocols. Implementing robust security guardrails is essential to prevent “jailbreak” attempts—where users exploit the model to generate harmful or unauthorized content. These guardrails can include input validation, response filtering, and continuous monitoring.

However, the more aggressive and restrictive the security measures are, the more they can hamper the model’s usefulness, especially for coding and programming-related answers. Organizations must ensure that their security protocols are robust enough to prevent misuse while allowing AI to perform its intended functions effectively.
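The three guardrail layers mentioned above (input validation, response filtering, and monitoring) might be wired together roughly as follows. This is a sketch under assumptions: the patterns, the length limit, and the `guarded_call` wrapper are hypothetical, and `model` stands in for whatever callable sends a prompt to your LLM.

```python
import logging
import re

logger = logging.getLogger("llm_guardrails")

MAX_PROMPT_CHARS = 4000  # hypothetical limit

# Hypothetical jailbreak phrasings; real deployments need far broader coverage.
SUSPICIOUS_INPUT = [
    re.compile(pattern, re.IGNORECASE)
    for pattern in (
        r"ignore (all|any|previous) .*instructions",
        r"reveal .*system prompt",
    )
]

# Hypothetical shape of a leaked credential in model output.
SECRET_OUTPUT = re.compile(r"\b(?:sk|key)-[A-Za-z0-9]{16,}\b")

REFUSAL = "Sorry, I can't help with that request."


def validate_input(prompt):
    """Input validation: return (allowed, reason)."""
    if len(prompt) > MAX_PROMPT_CHARS:
        return False, "prompt too long"
    for pattern in SUSPICIOUS_INPUT:
        if pattern.search(prompt):
            return False, "possible jailbreak phrasing"
    return True, "ok"


def filter_response(text):
    """Response filtering: redact anything that looks like a credential."""
    return SECRET_OUTPUT.sub("[REDACTED]", text)


def guarded_call(prompt, model):
    """Validate the prompt, call the model, filter the reply, log blocks."""
    allowed, reason = validate_input(prompt)
    if not allowed:
        # Monitoring: blocked prompts are logged for later review.
        logger.warning("blocked prompt: %s", reason)
        return REFUSAL
    return filter_response(model(prompt))
```

Note how easily a pattern like the first one could block a legitimate developer question, for example a request to write a regular expression that detects the phrase "ignore previous instructions". That is the usability cost of aggressive filtering in miniature.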

Granular Access Control: Safeguarding Access to Data

Ensuring that AI models and training data are accessed and utilized only by authorized personnel is vital for data security. Implementing robust, granular access controls is essential to prevent unauthorized access and mitigate the risk of data breaches.

However, this becomes particularly challenging in Generative AI solutions, where extensive context data is necessary for accurate results but also risks exposing information that the requesting user is not authorized to see.

Authorization frameworks in the Gen AI space are still maturing, leading companies to adopt complex controls or even restrict data usage to mitigate risks. These security measures can introduce friction, potentially slowing down workflows and hindering content access for legitimate users. Therefore, organizations must balance security and innovation by designing access controls that protect sensitive data while maintaining efficiency and usability for those with the appropriate access rights.
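One common pattern is to enforce access rights before any retrieved content reaches the prompt. The sketch below assumes a simple role-based model; the `Document` and `User` types, the role names, and the prompt layout are hypothetical and would need to map onto your actual identity and retrieval systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset  # roles permitted to read this document


@dataclass(frozen=True)
class User:
    name: str
    roles: frozenset


def authorized_context(user, retrieved):
    """Keep only documents that share at least one role with the user."""
    return [doc for doc in retrieved if user.roles & doc.allowed_roles]


def build_prompt(user, question, retrieved):
    """Build the LLM prompt from authorized documents only, so the model
    never sees content the user could not open directly."""
    docs = authorized_context(user, retrieved)
    context = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in docs)
    return f"Context:\n{context}\n\nQuestion: {question}"
```

Filtering before prompt construction means the model cannot leak what it was never given. The cost is the one described above: a user with narrow roles gets less context, and possibly a less accurate answer.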

Restricting Certain Topics: Balancing Security and Functionality

One strategy to enhance data security is restricting certain topics within LLM responses. Organizations can minimize the risk of accidental data leaks or breaches by curbing discussions around sensitive or regulated topics. However, this approach comes with a significant trade-off.

Restricting topics can limit the LLM’s functionality and ability to serve diverse use cases. For example, barring discussions on specific topics in healthcare or legal applications could render the AI less valuable or even counterproductive. It’s essential to carefully evaluate which topics to restrict, considering the potential impact on both security and functionality.
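A per-deployment topic blocklist is one way to keep restrictions targeted rather than global. The following is a deliberately naive keyword-based sketch; the topic names and keyword sets are hypothetical, and a real system would more likely use a trained classifier than word matching.

```python
import re

# Hypothetical topic definitions; keyword matching is crude and will
# produce both false positives and false negatives.
TOPIC_KEYWORDS = {
    "medical_diagnosis": {"diagnosis", "diagnose", "prescription", "dosage"},
    "legal_advice": {"lawsuit", "sue", "liability"},
}


def detect_topics(text):
    """Return the set of known topics whose keywords appear in the text."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return {topic for topic, keywords in TOPIC_KEYWORDS.items() if words & keywords}


def is_allowed(text, restricted):
    """Allow the text unless it touches a topic restricted for this deployment."""
    return not (detect_topics(text) & restricted)
```

A general-purpose assistant might pass `{"medical_diagnosis", "legal_advice"}` as `restricted`, while a healthcare deployment would leave `"medical_diagnosis"` out of that set so the tool stays useful for its core audience.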

Conclusion: Navigating the Trade-Offs

In the pursuit of AI and LLM innovation, data security must never be an afterthought. The strategies outlined above (anonymization and pseudonymization of personal data, security guardrails, granular access control, and topic restriction) are essential for protecting sensitive information. However, each comes with its own set of trade-offs, often pitting security against functionality.

The challenge for organizations is to navigate these trade-offs thoughtfully, implementing measures that protect data without stifling innovation. By carefully balancing these considerations, organizations can leverage the power of AI and LLMs while maintaining the trust and safety of their users.