The Hidden Risk in Every AI API Call

Every prompt sent to an LLM carries more than just intent. It often carries sensitive data. A customer support chatbot might include names and phone numbers. A healthcare AI assistant might process patient records. A fintech application could analyze transaction histories. In each case, personally identifiable information (PII) is passed to third-party AI APIs, often without proper data protection.

The scale of the problem is growing fast. Over 53% of all data breaches involve PII, making it the most targeted data category today. At the same time, 40% of organizations report AI-related privacy incidents, highlighting how quickly this risk is escalating. The problem isn’t just security. It’s compliance, trust, and control.

At Protecto, one principle guides every AI system we help secure: Sensitive data should never leave your environment in its raw form. This guide walks through how to achieve that across OpenAI, Anthropic, and other leading LLM platforms.

Understanding the PII Risk in AI APIs

Traditional applications handle structured data with defined controls. AI systems don’t. Instead, they rely on free-text prompts, context pulled from documents (RAG pipelines), and dynamic responses generated at runtime. This creates a new reality: sensitive data is embedded in natural language and flows across multiple system layers, often invisibly.

Where PII Leaks in AI Systems

PII doesn’t exist in just one place. It spreads across the entire AI pipeline:

| Layer | Risk |
| --- | --- |
| User Input | Names, emails, IDs entered in prompts |
| System Prompt | Hidden injection of customer or internal data |
| RAG Context | Documents containing sensitive information |
| Model Output | AI may regenerate or expose sensitive data |
| Logs & Monitoring | Data stored in third-party systems |

Types of PII, PHI & PCI

Modern AI systems frequently process:

- Names and personal identifiers

- Email addresses and phone numbers

- Government IDs (SSN, Aadhaar, Passport)

- Credit card and bank account details

- IP addresses and device identifiers

- Physical addresses

- Medical records

- Patient IDs and insurance data

- Login credentials and session tokens

- Transaction and behavioral data

Protecto’s DeepSight engine detects 200+ sensitive data types across 50+ languages, with custom entities that include industry-specific identifiers, such as Medical Record Numbers and IBAN codes, that generic tools consistently miss. Without protection, all of this can be exposed in a single API call.

Why Traditional Approaches Fail

Many teams attempt to secure AI pipelines using legacy methods, but these approaches break down in AI environments.

Comparison: Traditional Methods vs AI Reality

| Approach | How It Works | Why It Fails in AI |
| --- | --- | --- |
| Manual Redaction | Remove sensitive data manually | Not scalable, highly error-prone |
| Regex Matching | Detect patterns like SSN or email | Misses context and format variations |
| Static Masking | Replace values with placeholders | Breaks AI accuracy and contextual reasoning |
| Encryption | Protects stored data | Does not work during model inference |

The core problem is that traditional methods treat data protection as pattern matching. AI data is unstructured and contextual, so detection must be intelligent, not rule-based, and privacy must be enforced before data leaves the system. This is where most AI implementations fall short. Unlike static masking, which replaces values with generic placeholders and destroys the contextual meaning the model needs, Protecto’s context-preserving tokenization retains semantic structure so AI accuracy is never compromised.
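To make the regex limitation concrete, here is a minimal sketch. The pattern and the sample sentences are illustrative assumptions, not taken from any real system. It shows one rule missing two format variations of the same phone number and firing on a string that is not a phone number at all.

```python
import re

# An illustrative rule: US-style phone numbers written as 555-123-4567.
PHONE_RE = re.compile(r"\b\d{3}-\d{3}-\d{4}\b")

# Hypothetical inputs chosen to show misses and a false positive.
samples = [
    "Call me at 555-123-4567",              # canonical format
    "Call me at (555) 123 4567",            # same number, different punctuation
    "Call me at five five five, 123 4567",  # partly spelled out
    "Order 555-123-4567 shipped today",     # an order number, not a phone
]


def regex_flags(text: str) -> bool:
    """Return True when the pattern fires anywhere in the text."""
    return PHONE_RE.search(text) is not None


# One boolean per sample, in order.
results = [regex_flags(s) for s in samples]
for sample, flagged in zip(samples, results):
    print(f"{flagged!s:5}  {sample}")
```

The rule catches only the canonical format, and it cannot tell an order number from a phone number because it never looks at the surrounding words. Adding more patterns narrows the first gap but not the second.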

The Protecto Solution

Protecto introduces a new architectural layer for AI security: the AI Context Control Layer. Instead of sending raw data directly to LLMs, every request first flows through a privacy layer that protects sensitive data before it ever reaches an external model.

How It Works

| Step | Description |
| --- | --- |
| Detect | Identify PII across prompts and context using Agentic Data Classification |
| Tokenize | Replace sensitive data with semantically meaningful secure tokens |
| Send | Forward the masked data to the LLM API; no raw PII crosses the boundary |
| Process | The AI generates a response using tokens, preserving full contextual accuracy |
| Restore | Original data is safely reinserted for authorized users in the final output |

Unlike redaction, Protecto preserves the context and meaning the LLM needs to reason effectively. The model understands it is working with a person’s name, a financial account, or a medical record, without ever seeing the actual values. AI accuracy is maintained. Sensitive data never leaves the organization unprotected.
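The five steps can be sketched in a few lines of Python. This is a generic illustration under stated assumptions, not Protecto's API: the two regex detectors stand in for a real context-aware detector, and `tokenize`, `restore`, `protected_call`, and `stub_llm` are hypothetical names invented for the example.

```python
import re
from typing import Callable, Dict, Tuple

# Stand-in detectors (assumption). A production system would use a
# context-aware detector rather than two regular expressions.
DETECTORS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b"),
}


def tokenize(text: str) -> Tuple[str, Dict[str, str]]:
    """Detect + Tokenize: swap each value for a typed token like <EMAIL_1>."""
    vault: Dict[str, str] = {}   # token -> original value, kept locally
    seen: Dict[str, str] = {}    # original value -> token, for reuse
    counts: Dict[str, int] = {}  # per-type counter for token numbering
    for label, pattern in DETECTORS.items():
        def swap(match, label=label):
            value = match.group(0)
            if value not in seen:
                counts[label] = counts.get(label, 0) + 1
                seen[value] = f"<{label}_{counts[label]}>"
                vault[seen[value]] = value
            return seen[value]
        text = pattern.sub(swap, text)
    return text, vault


def restore(text: str, vault: Dict[str, str]) -> str:
    """Restore: put the original values back into the model's reply."""
    for token, value in vault.items():
        text = text.replace(token, value)
    return text


def protected_call(prompt: str, llm: Callable[[str], str]) -> str:
    masked, vault = tokenize(prompt)   # Detect + Tokenize
    reply = llm(masked)                # Send + Process (tokens only)
    return restore(reply, vault)       # Restore


# Hypothetical stand-in for a provider call; it records what it was sent.
sent = []


def stub_llm(prompt: str) -> str:
    sent.append(prompt)
    return f"Noted: {prompt}"


prompt = "Email jane.doe@example.com or call 555-123-4567 about the refund."
answer = protected_call(prompt, stub_llm)
print(sent[0])
print(answer)
```

Because each token carries its type (`<EMAIL_1>`, `<PHONE_1>`), the model can still tell what kind of value it is handling. The vault that maps tokens back to values never leaves the caller's process.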

Traditional Methods vs Protecto

| Capability | Traditional Approaches | Protecto |
| --- | --- | --- |
| Context-aware detection | ❌ No | ✅ Yes |
| Works on unstructured data | ❌ Limited | ✅ Full |
| Preserves AI accuracy | ❌ No | ✅ Yes |
| Multi-model compatibility | ❌ Complex | ✅ Unified |
| Real-time protection | ❌ No | ✅ Yes |
| Audit-ready logs | ❌ No | ✅ Yes |

How Protecto Works Across AI Providers

One of the most significant operational challenges for enterprises is managing privacy consistently across multiple AI providers. Protecto solves this with a unified privacy layer that works across:

- OpenAI: GPT-4, GPT-4o, and other GPT models

- Anthropic: Claude Opus, Claude Sonnet, and Claude Haiku

- Google: Gemini models (Protecto is also available on the Google Cloud Marketplace for GCP-native deployments)

- Azure OpenAI: Enterprise-grade deployments

No matter which provider is in use, Protecto ensures consistent masking policies, unified governance, and centralized compliance oversight. Teams no longer need to build and maintain separate privacy implementations per provider.
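The idea of one policy in front of many providers can be sketched as a single entry point that masks first and dispatches second. Everything here is a hypothetical illustration: the adapter functions only echo their input and stand in for real vendor SDK calls, and the email-only `mask` function is a deliberately trivial placeholder for a full tokenization step.

```python
import re
from typing import Callable, Dict

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def mask(prompt: str) -> str:
    """The single shared policy (placeholder): hide every email address."""
    return EMAIL_RE.sub("<EMAIL>", prompt)


# Hypothetical adapters. Each would wrap one vendor's SDK in a real system;
# here they echo the prompt so the example runs without network access.
def call_openai(prompt: str) -> str:
    return f"[openai] {prompt}"


def call_anthropic(prompt: str) -> str:
    return f"[anthropic] {prompt}"


def call_gemini(prompt: str) -> str:
    return f"[gemini] {prompt}"


PROVIDERS: Dict[str, Callable[[str], str]] = {
    "openai": call_openai,
    "anthropic": call_anthropic,
    "gemini": call_gemini,
}


def send(provider: str, prompt: str) -> str:
    """Mask once, then dispatch; no adapter ever receives the raw prompt."""
    return PROVIDERS[provider](mask(prompt))


for name in PROVIDERS:
    print(send(name, "Reply to alice@example.com"))
```

Since masking happens in `send` rather than in each adapter, adding a provider means adding one dictionary entry, and the policy cannot drift between vendors.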

Best Practices for PII Protection in AI Systems

- Protect data before it leaves the system: Raw PII should never be transmitted to external APIs. Protection must happen at the source, not downstream after the fact.

- Use context-aware tokenization, not redaction: Removing data degrades AI accuracy. Replacing it with semantically meaningful tokens preserves the model’s ability to reason while keeping actual values private. This is the distinction that makes Protecto’s approach work at production scale.

- Maintain full audit trails: Every interaction where sensitive data is processed should be logged with timestamps, entity types detected, and confirmation that masking was applied. This is the evidence layer that turns technical controls into compliance documentation, and what auditors look for first.
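As a rough sketch of what one audit entry might contain, the record below captures the three items named above: a timestamp, the entity types detected, and confirmation that masking was applied. The field names and the `AuditRecord` class are assumptions made for illustration, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


def utc_now() -> str:
    """ISO 8601 timestamp in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AuditRecord:
    request_id: str            # hypothetical correlation ID
    provider: str              # which LLM API received the masked prompt
    entity_types: List[str]    # types only, never the raw values
    masking_applied: bool      # confirmation that protection ran
    timestamp: str = field(default_factory=utc_now)


def to_log_line(record: AuditRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = AuditRecord(
    request_id="req-001",
    provider="example-llm",
    entity_types=["EMAIL", "PHONE"],
    masking_applied=True,
)
line = to_log_line(record)
print(line)
```

Logging entity types instead of the detected values keeps the audit trail itself from becoming another store of PII.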

Compliance and Governance

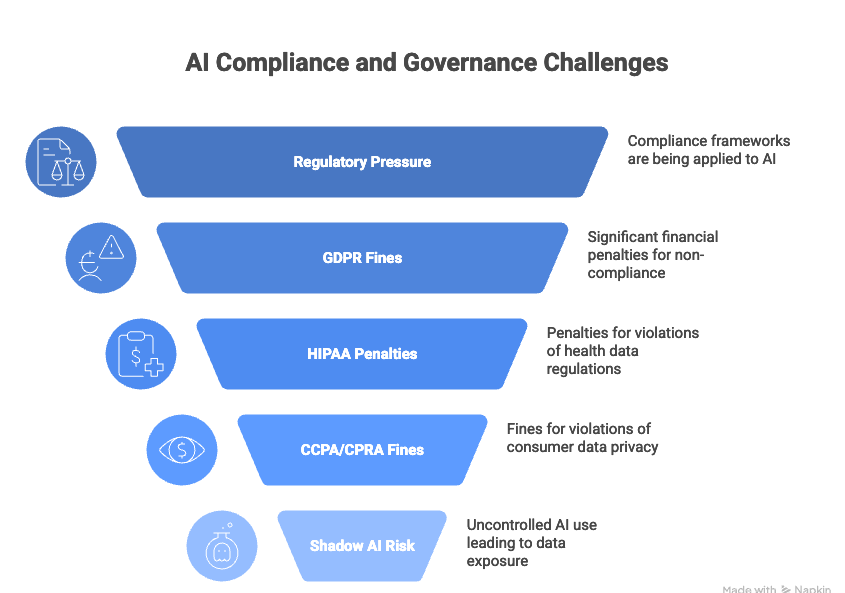

AI adoption is accelerating, and so is regulatory pressure. Compliance frameworks designed for traditional software are now being applied directly to AI systems, and the stakes are significant.

- GDPR: Fines of up to €20 million or 4% of global annual revenue, whichever is higher (Article 83)

- HIPAA: Penalties of up to $1.5 million per violation category per year (HHS Civil Monetary Penalties)

- CCPA/CPRA: Fines of $2,500 per unintentional violation and $7,500 per intentional violation

- Shadow AI risk: According to Microsoft’s 2024 Work Trend Index, 78% of AI users are bringing their own AI tools to work without employer oversight, creating significant uncontrolled data exposure

How Protecto Addresses Compliance Requirements

| Compliance Need | Protecto Capability |
| --- | --- |
| GDPR | Masks personal data before any processing occurs |
| HIPAA | Protects PHI across all healthcare AI workflows |
| CCPA/CPRA | Ensures consumer data is never exposed to third-party models |
| SOC 2 | Provides full audit logs and real-time monitoring |
| PDPL / SAMA | Pre-built policies for Saudi Arabian regulations |
| DPDP | Support for Indian data protection laws |

Protecto transforms compliance from a bottleneck into an enabler, making it possible to deploy AI quickly without creating new regulatory exposure.

Conclusion

AI APIs are powerful, but they come with hidden risks that most organizations have not fully addressed. Every prompt, every response, and every integration introduces potential exposure. The organizations building AI responsibly are the ones treating privacy as an architectural requirement, not an afterthought.
